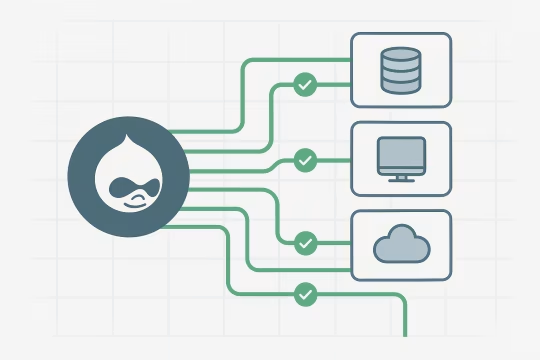

This case study describes how a complex Drupal-based research data platform for the London School of Hygiene & Tropical Medicine (LSHTM) was stabilized and optimized to support reliable data publishing, better performance, and scalable integrations with external data providers.

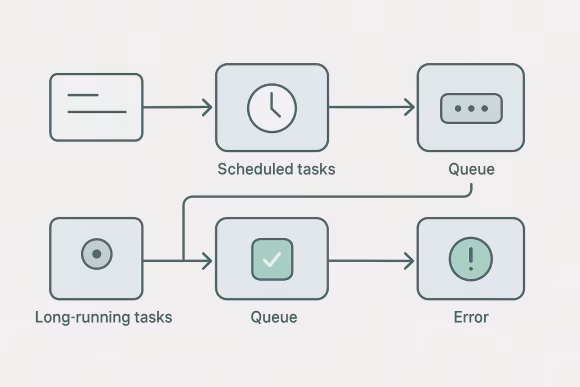

The work focused on improving platform responsiveness and reducing the operational risks caused by performance degradation and unreliable synchronization processes. Multiple data pipelines were stabilized, including scheduled exports and imports and the distribution of research data to third-party systems and downstream consumers.
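The case study does not say how the synchronization processes were made reliable. One common pattern for scheduled import/export jobs that talk to external systems is to retry transient failures with exponential backoff and jitter. The sketch below shows that pattern under stated assumptions: `run_with_retry` and `flaky_export` are hypothetical names, and treating `ConnectionError` and `TimeoutError` as "transient" is an assumption, not a detail of the LSHTM platform.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retry(
    job: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run one sync step, retrying transient failures with exponential backoff.

    Hypothetical helper: `job` stands in for a single scheduled import/export step.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return job()
        except (ConnectionError, TimeoutError):  # assumed "transient" error types
            if attempt == max_attempts:
                raise  # give up and surface the error to monitoring
            # Delay doubles per attempt (1s, 2s, 4s, ...) up to max_delay; random
            # jitter keeps parallel jobs from retrying in lockstep.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(delay * random.uniform(0.5, 1.0))
    raise RuntimeError("unreachable")


# Usage: a hypothetical export that fails twice before succeeding.
calls = {"n": 0}


def flaky_export() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("FTP server unavailable")
    return "export complete"


print(run_with_retry(flaky_export, sleep=lambda s: None))  # prints "export complete"
```

Injecting `sleep` keeps the helper testable without real waiting. Errors that are not transient, such as malformed data, are deliberately not retried, so they surface immediately instead of being masked.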

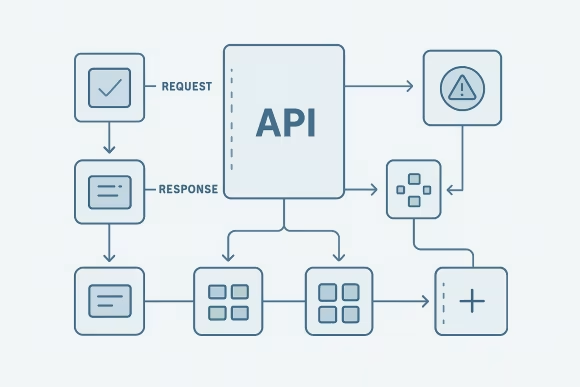

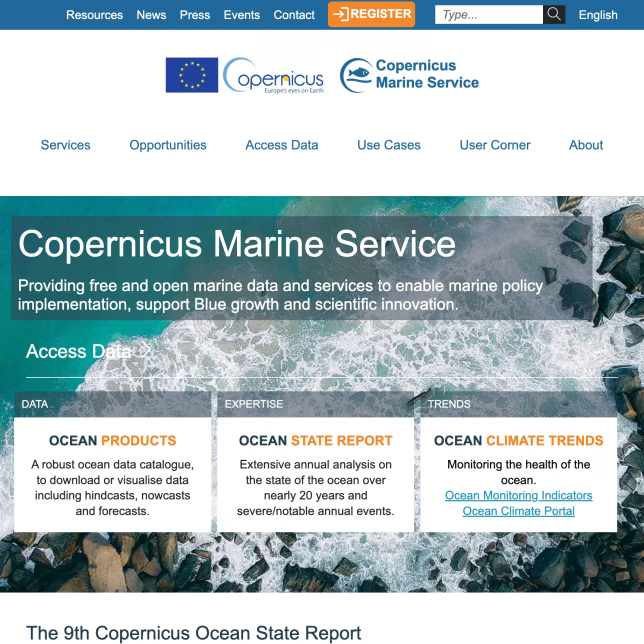

The integration layer was strengthened to support data exchange via APIs, FTP, email delivery, and cloud storage providers (Google Drive and Microsoft cloud storage). The platform was enhanced to process and distribute structured and semi-structured content in XML, HTML, CSV, PDF, and plain-text (TXT) formats while preserving data integrity, auditability, and performance.
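A minimal sketch of how integrity and auditability can be kept when the same records are exported in several formats: serialize once per format, then attach an audit entry containing a SHA-256 checksum that a downstream consumer can recompute. The record fields (`study_id`, `title`), the function names, and the audit-entry layout are hypothetical. Only CSV, XML, and TXT are shown because they need nothing beyond the Python standard library; HTML and PDF output are omitted.

```python
import csv
import hashlib
import io
import xml.etree.ElementTree as ET
from datetime import datetime, timezone

Record = dict[str, str]


def to_csv(records: list[Record]) -> str:
    """CSV with a header row taken from the first record's keys."""
    if not records:
        return ""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(records[0].keys()))
    writer.writeheader()
    writer.writerows(records)
    return buf.getvalue()


def to_xml(records: list[Record]) -> str:
    """Flat <records><record>...</record></records> document.

    Assumes every key is a valid XML element name.
    """
    root = ET.Element("records")
    for rec in records:
        item = ET.SubElement(root, "record")
        for key, value in rec.items():
            ET.SubElement(item, key).text = value
    return ET.tostring(root, encoding="unicode")


def to_txt(records: list[Record]) -> str:
    """Plain text: one 'key: value' block per record, blank line between blocks."""
    blocks = ["\n".join(f"{k}: {v}" for k, v in rec.items()) for rec in records]
    return "\n\n".join(blocks) + "\n"


SERIALIZERS = {"csv": to_csv, "xml": to_xml, "txt": to_txt}


def export_with_audit(records: list[Record], fmt: str) -> tuple[bytes, dict]:
    """Serialize records and return the payload plus a hypothetical audit entry."""
    payload = SERIALIZERS[fmt](records).encode("utf-8")
    audit = {
        "format": fmt,
        "record_count": len(records),
        "bytes": len(payload),
        "sha256": hashlib.sha256(payload).hexdigest(),  # lets receivers verify integrity
        "exported_at": datetime.now(timezone.utc).isoformat(),
    }
    return payload, audit


# Usage: the receiver recomputes the checksum to confirm the file arrived intact.
sample = [{"study_id": "S001", "title": "Example study"}]
payload, audit = export_with_audit(sample, "csv")
print(hashlib.sha256(payload).hexdigest() == audit["sha256"])  # prints True
```

Computing the checksum on the exact bytes that are delivered, rather than on the source records, means the same check works regardless of the transport (API, FTP, email, or cloud storage).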
