Fragmented Customer Data Prevents Reliable Activation

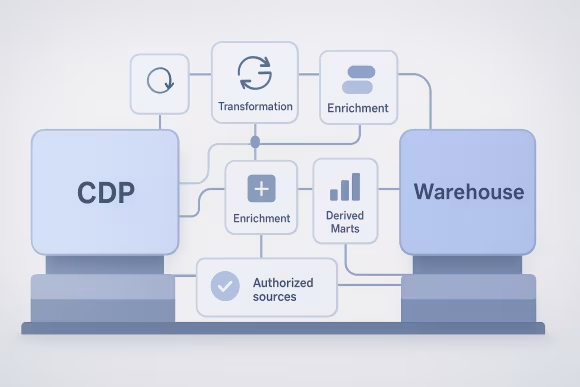

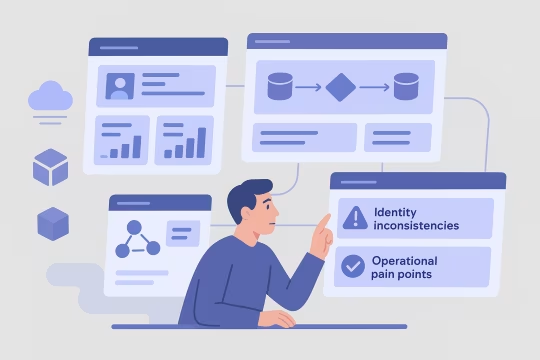

As customer platforms grow, data arrives from web, mobile, CRM, commerce, and support systems with different identifiers, inconsistent event naming, and varying levels of consent metadata. Teams often implement point-to-point mappings into a CDP, while parallel pipelines feed a warehouse for reporting. Over time, the same “customer” concept diverges across tools, and the platform accumulates multiple competing definitions of profiles, sessions, and lifecycle states.

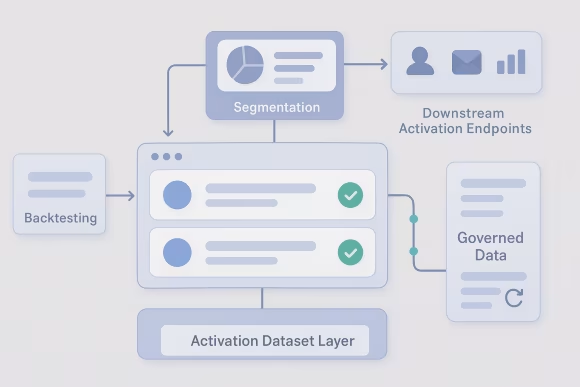

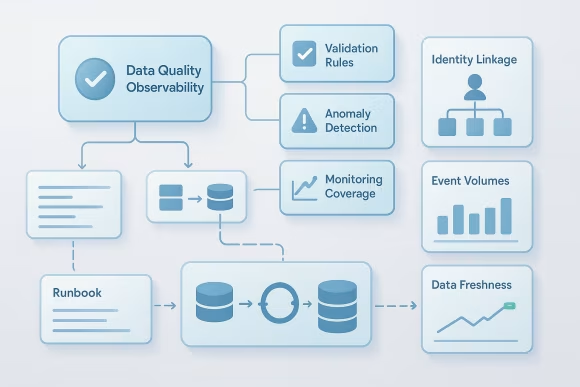

This fragmentation creates architectural drag. Identity stitching logic gets embedded in ingestion jobs, audience builders, and BI layers, making it difficult to reason about lineage and correctness. Event schemas drift as product teams ship changes without shared contracts, leading to brittle transformations and frequent backfills. When the CDP becomes the only place where certain joins or enrichments exist, portability and vendor flexibility decrease.

Operationally, the impact shows up as inconsistent metrics between analytics and activation, slow onboarding of new sources, and high effort to troubleshoot data quality incidents. Governance becomes reactive: privacy and retention controls are applied inconsistently, and access patterns are hard to audit. The platform spends more time reconciling data than enabling new customer experiences.

[01]

[01]