Products evolve faster than most tracking plans.

A team launches a new checkout flow. Marketing introduces a new lifecycle audience. Data engineering standardizes identifiers across channels. Privacy requirements change what can be collected, where, and under what conditions. Each decision can alter the shape of an event, the meaning of a property, or the timing of when data arrives.

That is why CDP event schema versioning matters. It is not just a developer concern. In enterprise environments, event changes can quietly affect reporting, journey orchestration, lead scoring, attribution, audience qualification, and the stability of activation pipelines. A field renamed in web tracking can become a null attribute in the warehouse. A redefined enum can split a segment. A duplicated event can inflate conversion metrics for weeks before anyone notices.

The goal is not to freeze schemas forever. It is to let them evolve without creating downstream ambiguity. That requires clear contracts, explicit compatibility rules, coordinated rollout plans, and governance that treats event changes as business-impacting changes, not just implementation details.

Why event schemas drift as products and channels evolve

Schema drift is normal. It usually reflects product and business change rather than poor intent.

Common drivers include:

- new user journeys, steps, and states in digital products

- expansion into new platforms such as mobile apps, kiosks, or partner channels

- changes to identity strategy, including anonymous-to-known stitching

- revised consent controls and regional data handling requirements

- new activation use cases that need additional properties or more granular events

- analytics model redesigns that require more consistent naming or classification

- mergers of previously separate tracking implementations into one enterprise model

In practice, drift often starts small. A team adds a property for a new campaign use case. Another team renames an event to align with product terminology. A third team changes a value list because the UI labels changed. None of these decisions may look risky in isolation.

The problem is cumulative inconsistency.

Without a versioning discipline, event producers and event consumers stop sharing the same understanding of the data. Collection code, validation rules, transformation logic, warehouse models, dashboards, segments, and activation tools can all begin to operate on slightly different assumptions. The result is not always a hard failure. More often, it is silent degradation.

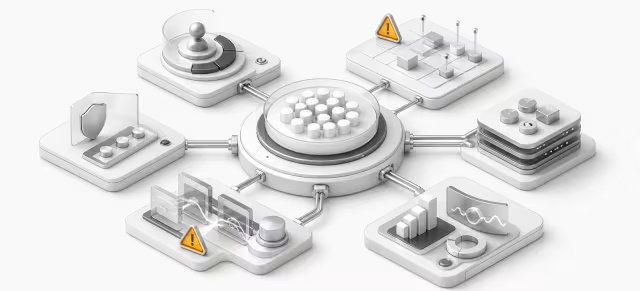

Failure modes: broken segments, null attributes, duplicate events, metric discontinuity

The most expensive schema problems are rarely syntax errors. They are semantic breaks that appear downstream after the event has already been accepted.

Common failure modes include:

- Broken segments: A segment depends on `plan_tier = enterprise`, but the enum changes to `ent` or `enterprise_paid`. Audience size drops unexpectedly.

- Null attributes: A required property becomes optional in one channel, but downstream activation logic still assumes it is always populated.

- Duplicate events: During migration, both old and new event names fire for the same action, inflating funnels and conversion metrics.

- Metric discontinuity: A property changes meaning over time, so the same dashboard metric spans incompatible definitions before and after release.

- Warehouse model breaks: Transformations or tests reference fields that no longer exist or now carry different types.

- Activation mismatches: A campaign relies on an event arriving within a certain window, but a pipeline change delays or suppresses delivery.

- Attribution distortion: Channel or source properties are redefined without back-compatibility, causing reporting fragmentation.

These issues matter because CDP data is operational, not just analytical. When schemas shift carelessly, teams do not only lose clean reporting. They can also misroute journeys, suppress the wrong users, qualify audiences incorrectly, or trigger experiences based on incomplete data.

That is why versioning should be tied to business reliability. The question is not simply, "Did the event still send?" It is, "Did every downstream use case still behave as intended?"

Contract design: required fields, optional fields, enum control, naming rules

Effective event contract management starts before version numbers appear. A weak contract makes versioning hard because nothing is clear enough to preserve.

A practical event contract should define at least:

- event name and business meaning

- trigger condition and timing

- required properties

- optional properties

- data types and allowed formats

- enum values and their definitions

- identity fields and precedence rules

- channel-specific notes, if the same event is emitted from multiple platforms

- ownership and approvers

- downstream dependencies, such as key dashboards or activation audiences

Some controls matter especially for long-term compatibility.

Required vs optional fields should be explicit. If everything is treated as optional, consumers cannot reliably depend on anything. If too many fields are required, every product change becomes harder to release. A useful pattern is to keep the required core small and stable, then allow optional enrichment around it.

Enum control deserves more discipline than it often gets. Free-form strings are easy to emit but hard to govern. Controlled enums reduce ambiguity, but only if value additions and changes are managed carefully. A changed enum is often a breaking change for segmentation, rules engines, and dashboard logic, even when the field name remains the same.
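The contract elements above can be sketched in code. The example below is a minimal illustration, not a reference implementation: the `EventContract` shape, the `checkout_completed` event, and the `free` and `pro` tier values are assumptions made for the example. It keeps a small required core, allows optional enrichment, and rejects enum values outside the controlled list.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class EventContract:
    """Hypothetical contract shape; the field names are illustrative assumptions."""
    name: str
    version: int
    required: dict[str, type]                                  # small, stable core
    optional: dict[str, type] = field(default_factory=dict)    # enrichment
    enums: dict[str, frozenset] = field(default_factory=dict)  # controlled values


def validate(event: dict[str, Any], contract: EventContract) -> list[str]:
    """Return human-readable violations; an empty list means the event conforms."""
    problems: list[str] = []
    for prop, expected in contract.required.items():
        if event.get(prop) is None:
            problems.append(f"missing required property: {prop}")
        elif not isinstance(event[prop], expected):
            problems.append(f"wrong type for {prop}")
    for prop, expected in contract.optional.items():
        if event.get(prop) is not None and not isinstance(event[prop], expected):
            problems.append(f"wrong type for {prop}")
    for prop, allowed in contract.enums.items():
        value = event.get(prop)
        # Only string values are checked here; type errors are reported above.
        if isinstance(value, str) and value not in allowed:
            problems.append(f"unexpected enum value for {prop}: {value!r}")
    return problems


# Assumed example contract, not taken from any real tracking plan.
CHECKOUT_V1 = EventContract(
    name="checkout_completed",
    version=1,
    required={"user_id": str, "order_total": float, "plan_tier": str},
    optional={"coupon_code": str},
    enums={"plan_tier": frozenset({"free", "pro", "enterprise"})},
)
```

With this sketch, an event carrying `plan_tier = "ent"` is flagged at validation time instead of silently shrinking an audience later.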

Naming rules should aim for consistency over cleverness. Teams often benefit from conventions such as:

- stable, business-readable event names

- consistent verb-object structure where appropriate

- property names that describe durable meaning rather than UI labels

- avoidance of synonyms for the same concept across teams

- clear differentiation between raw values and normalized values

The more precisely a contract defines meaning, the easier it becomes to decide whether a proposed change is additive, compatible, or breaking.

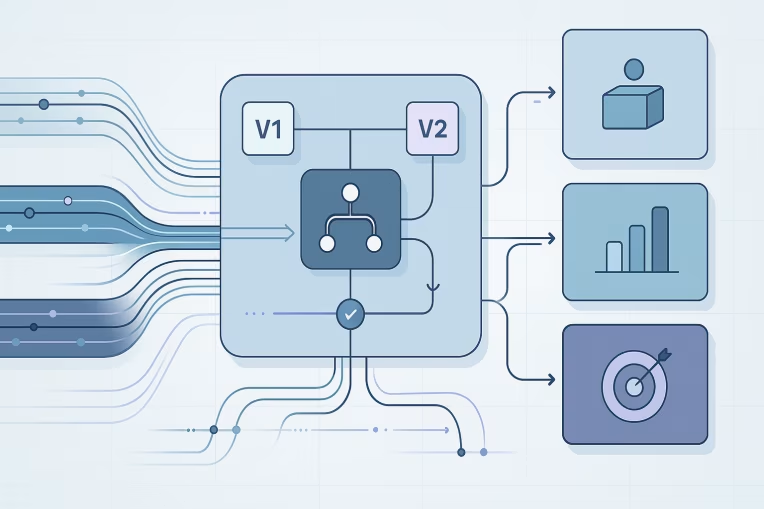

Versioning patterns: additive changes, deprecations, hard breaks, translation layers

Not every schema change deserves the same response. One of the most important practices in event schema evolution is distinguishing between additive changes and breaking changes.

Additive changes

Additive changes usually preserve compatibility for existing consumers. Examples include:

- adding a new optional property

- adding a new event that does not replace an existing one

- expanding a schema with additional enrichment that downstream systems can safely ignore

Additive does not mean risk-free. A new property can still affect downstream costs, model complexity, or activation logic if teams begin depending on it immediately. But in general, additive changes are easier to release safely.

Deprecations

Deprecation is the controlled retirement of something that still exists temporarily. Examples include:

- marking a property as deprecated while still emitting it

- announcing that an event will be replaced by a newer event after a transition period

- maintaining old enum values while steering new producers to updated values

Deprecation is useful because it gives consumers time to migrate. It also creates a formal window for documenting impact, testing alternatives, and updating dependent assets.

Hard breaks

A hard break changes the contract in a way that can invalidate existing consumers. Examples include:

- renaming or removing a field that downstream models reference

- changing a property type from string to array or integer

- redefining an event's business meaning while keeping the same event name

- changing enum values in a way that causes existing filters to fail

- altering event timing so that the same event now fires at a different lifecycle point

Hard breaks should be treated as coordinated change programs, not quick implementation updates.
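The distinction between additive changes, deprecations, and hard breaks can be partly automated by comparing two versions of a contract. The sketch below assumes a hypothetical dictionary shape (`required`, `optional`, `enums`, `deprecated`) and deliberately conservative rules, for example treating any change to the required set as breaking. Teams may reasonably draw these lines differently.

```python
from enum import Enum


class Change(Enum):
    ADDITIVE = "additive"
    DEPRECATION = "deprecation"
    BREAKING = "breaking"


def classify(old: dict, new: dict) -> Change:
    """Classify the difference between two versions of a contract.

    Assumed shape (illustrative only):
    {"required": {prop: type_name}, "optional": {prop: type_name},
     "enums": {prop: [values]}, "deprecated": [props]}
    """
    old_props = {**old.get("required", {}), **old.get("optional", {})}
    new_props = {**new.get("required", {}), **new.get("optional", {})}

    # Removed, renamed, or retyped properties can invalidate existing consumers.
    for prop, type_name in old_props.items():
        if new_props.get(prop) != type_name:
            return Change.BREAKING

    # Conservative assumption: any change to the required set is breaking,
    # because consumers may rely on presence and producers on validation.
    if set(old.get("required", {})) != set(new.get("required", {})):
        return Change.BREAKING

    # Removing an enum value can make existing filters match nothing.
    # Dropping enum control entirely is not flagged by this sketch.
    for prop, values in old.get("enums", {}).items():
        if set(values) - set(new.get("enums", {}).get(prop, values)):
            return Change.BREAKING

    # Newly deprecated properties are still emitted but scheduled for retirement.
    if set(new.get("deprecated", [])) - set(old.get("deprecated", [])):
        return Change.DEPRECATION

    return Change.ADDITIVE
```

A structural comparison like this cannot detect semantic breaks, such as a redefined business meaning or a changed firing point under the same event name. Those still need human review.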

Translation layers

When direct compatibility is difficult, translation layers can reduce risk. These can exist in validation, transformation, or warehouse modeling layers.

Examples include:

- mapping legacy property names to a canonical schema

- normalizing old and new enum values into one consistent downstream value set

- deriving a stable warehouse-facing contract while raw collection evolves

- maintaining a compatibility view or model for existing reports and audiences during migration

Translation layers are often valuable in enterprise platforms because they let collection evolve while protecting downstream consumers. The tradeoff is complexity. If translation persists indefinitely, the platform accumulates semantic debt. Use it to manage transition, not to avoid standardization forever.
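As a rough illustration of a translation layer, the sketch below maps legacy property names and enum values onto one canonical form. The alias tables are hypothetical. In practice they would be derived from the contract repository, and the choice of `enterprise` as the canonical value is an assumption made for the example.

```python
from typing import Any

# Hypothetical mappings for illustration only.
PROPERTY_ALIASES = {"planTier": "plan_tier", "tier": "plan_tier"}
ENUM_ALIASES = {"plan_tier": {"ent": "enterprise", "enterprise_paid": "enterprise"}}


def to_canonical(event: dict[str, Any]) -> dict[str, Any]:
    """Rename legacy properties and normalize enum values to the canonical schema."""
    canonical: dict[str, Any] = {}
    for key, value in event.items():
        name = PROPERTY_ALIASES.get(key, key)
        if isinstance(value, str):
            value = ENUM_ALIASES.get(name, {}).get(value, value)
        # If a legacy alias and the canonical property are both present,
        # the canonical property wins regardless of key order.
        if name in canonical and key != name:
            continue
        canonical[name] = value
    return canonical
```

Keeping the alias tables small and dated makes it easier to see when the layer has outlived the migration it was built for.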

Rollout sequencing across web tracking, pipelines, warehouse, and activation tools

Many schema failures happen because teams sequence changes in the wrong order.

A safe rollout usually spans several layers:

1. Contract definition

   - document the proposed change

   - classify it as additive, deprecated, or breaking

   - identify affected producers and consumers

   - define validation and success criteria

2. Consumer impact assessment

   - review dashboards, models, segments, audiences, and activation workflows that depend on the event

   - identify where nulls, value changes, or timing changes could create business impact

   - agree on migration requirements and timing

3. Pipeline readiness

   - update schema registries, validation rules, transformation logic, and warehouse ingestion expectations

   - add support for both old and new forms where a transition period is needed

   - prepare tests for type, presence, cardinality, and allowed values

4. Producer implementation

   - release collection changes in web, app, server, or partner integrations

   - confirm that instrumentation follows the approved contract rather than ad hoc implementation choices

   - use feature flags or controlled release patterns where possible

5. Dual-run or compatibility period

   - where appropriate, allow old and new representations to coexist temporarily

   - monitor duplicate risk carefully

   - validate that downstream systems are receiving and interpreting the new shape correctly

6. Consumer migration

   - update warehouse models, semantic layers, dashboards, segments, and activation logic

   - confirm that reporting continuity is preserved or clearly annotated

   - retire references to deprecated fields or events

7. Decommissioning

   - remove translation logic, legacy fields, or old event names after migration is complete

   - update documentation to reflect the current authoritative contract

This sequencing matters because collection is only the first step in the data lifecycle. A schema that validates at the edge can still fail operationally in transformation, identity resolution, segmentation, or orchestration.
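The duplicate risk in a dual-run period is one place where a small safeguard helps. The sketch below assumes both the old and new event carry a shared `action_id` (for example an order identifier) and that a hypothetical legacy event named `purchase` is being replaced by `checkout_completed`. Neither name comes from a real tracking plan.

```python
from typing import Any, Iterable

# Hypothetical legacy-to-current event names for the transition period.
RENAMED = {"purchase": "checkout_completed"}


def dedupe_dual_run(events: Iterable[dict[str, Any]]) -> list[dict[str, Any]]:
    """Keep one event per (canonical name, user, action), preferring the new name."""
    kept: dict[tuple, dict[str, Any]] = {}
    for event in events:
        canonical_name = RENAMED.get(event["name"], event["name"])
        key = (canonical_name, event["user_id"], event["action_id"])
        current = kept.get(key)
        # Replace a stored legacy event when its new-name twin arrives.
        is_upgrade = (
            current is not None
            and current["name"] in RENAMED
            and event["name"] not in RENAMED
        )
        if current is None or is_upgrade:
            kept[key] = event
    return list(kept.values())
```

Comparing the count before and after deduplication also gives a simple duplicate-rate signal to watch while both versions are live.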

In enterprise environments, it is often wise to treat schema changes much like API changes: proposed, reviewed, tested, released, monitored, and then formally closed. That kind of sequencing is usually easier to sustain when teams have a defined CDP platform architecture rather than a loose set of disconnected tools and owners.

Governance model: ownership, approval, documentation, and change windows

Strong CDP tracking governance does not need to be bureaucratic, but it does need to be explicit.

At minimum, each event domain should have:

- a business owner responsible for meaning and usage

- a technical owner responsible for implementation quality and compatibility

- a documented approval path for breaking changes

- a maintained tracking plan or contract repository

- a defined deprecation process

- agreed change windows for high-impact updates

This matters because event contracts sit between multiple teams. Product teams may optimize for speed. Analytics teams may optimize for consistency. Marketing teams may optimize for audience continuity. Data platform teams may optimize for maintainability and observability. Without governance, these priorities collide late, often after release.

A practical governance model often includes:

- Change classification: low-risk additive updates versus high-risk breaking changes

- Approval thresholds: who must sign off depending on downstream impact

- Documentation standards: event purpose, schema, lineage, owners, and dependencies

- Release discipline: scheduled windows for changes affecting core funnel or activation events

- Migration policy: required overlap periods, communication expectations, and retirement criteria

- Exception handling: what to do when urgent production fixes bypass normal review

Governance is not just about stopping bad changes. It is about making good changes routine. Teams move faster when they know how changes are proposed, reviewed, tested, and released. In practice, this often depends on a formal event tracking architecture that defines ownership, contract standards, and deprecation workflows across teams.

What to monitor after a schema change

A versioned schema is only safe if post-release monitoring confirms reality matches intent.

For activation pipeline stability, monitor across multiple layers:

Collection and validation

- event volume by source and version

- property presence rates for required fields

- type validation failures

- unexpected enum values

- sudden drops in key identity fields

Transformation and warehouse

- ingestion latency

- schema mismatch errors

- null-rate changes in modeled fields

- failed jobs or test failures in downstream models

- unexpected cardinality shifts

Analytics and activation

- audience size changes for critical segments

- conversion trend discontinuities around release time

- trigger volumes for journeys or campaigns

- suppression list changes

- attribution dimension fragmentation

Operational signals

- duplicate firing rates

- changes in anonymous-to-known join behavior

- channel-by-channel consistency for supposedly common events

- backlog or exception growth in data quality workflows

It is also useful to define a short hypercare period after meaningful changes. During that period, owners should actively review dashboards, audience counts, validation reports, and known dependent workflows. Many issues are easiest to correct when caught within the first release window. Where activation depends on strict downstream delivery behavior, a dedicated data activation architecture can make those contracts, latency expectations, and monitoring responsibilities much clearer.
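Several of the collection-level checks above reduce to simple ratios. As a minimal sketch, the code below computes property presence rates per schema version and flags any that fall below a threshold. The `schema_version` property and the 0.99 threshold are assumptions for illustration. Real thresholds would depend on each property's historical baseline.

```python
from collections import defaultdict
from typing import Any, Iterable


def presence_rates(
    events: Iterable[dict[str, Any]], required: list[str]
) -> dict[str, dict[str, float]]:
    """Share of events, per schema version, where each required property is populated."""
    totals: dict[str, int] = defaultdict(int)
    present: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for event in events:
        version = event.get("schema_version", "unknown")  # assumed property name
        totals[version] += 1
        for prop in required:
            if event.get(prop) is not None:
                present[version][prop] += 1
    return {
        version: {prop: present[version][prop] / totals[version] for prop in required}
        for version in totals
    }


def flag_drops(
    rates: dict[str, dict[str, float]], threshold: float = 0.99
) -> list[tuple[str, str]]:
    """Return (version, property) pairs whose presence rate is below the threshold."""
    return [
        (version, prop)
        for version, props in rates.items()
        for prop, rate in props.items()
        if rate < threshold
    ]
```

Running a check like this on a schedule during the hypercare period turns "sudden drops in key identity fields" into an alert rather than a discovery.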

A practical checklist for schema-safe change management

For teams managing tracking plan versioning in enterprise platforms, the following checklist can help keep change disciplined without becoming heavy.

Before the change

- define the business reason for the schema update

- classify the change as additive, deprecated, or breaking

- document the exact contract and affected fields, types, enums, and timing

- identify affected producers and downstream consumers

- assess impact on analytics, segmentation, attribution, and activation

- confirm ownership and approval

During implementation

- update validation rules and transformation logic before or alongside producer changes

- use feature flags, staged rollout, or controlled deployment when possible

- create compatibility logic where transition support is required

- test against representative downstream use cases, not only raw event delivery

- verify documentation is updated before release, not after

After release

- monitor data quality, null rates, volumes, enums, and latency

- inspect key dashboards and audience definitions for drift

- look for duplicate events or temporary double counting

- communicate migration deadlines and deprecation timing clearly

- remove legacy logic only after all critical consumers have migrated

- record lessons learned for future schema changes

The most important principle is simple: version the contract, not just the code. If only the implementation team knows what changed, the organization still carries risk.

Final thought

Schema evolution is unavoidable in modern CDP ecosystems. The real choice is whether that evolution happens intentionally or through drift.

The strongest teams treat event schemas as shared operational contracts. They distinguish additive changes from breaking ones. They sequence releases across collection, validation, transformation, warehouse, and activation. They establish governance that reflects real business impact. And they monitor outcomes after release rather than assuming a successful deploy means a successful change.

Done well, CDP event schema versioning gives the business room to evolve without sacrificing trust in analytics or activation.

That trust is what keeps data useful when products, channels, and customer journeys keep changing.

Tags: CDP, CDP event schema versioning, event schema evolution, CDP tracking governance, analytics engineering, data activation architecture