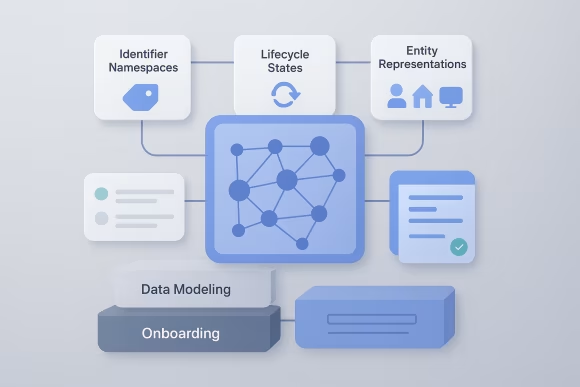

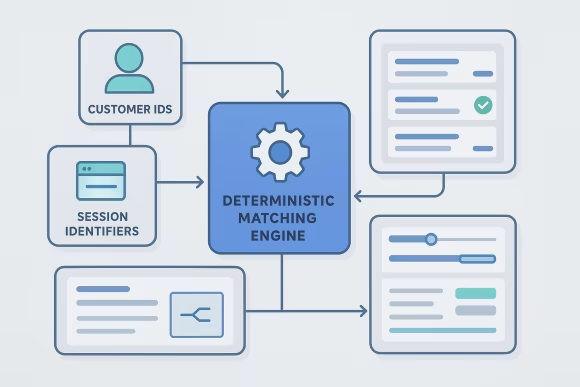

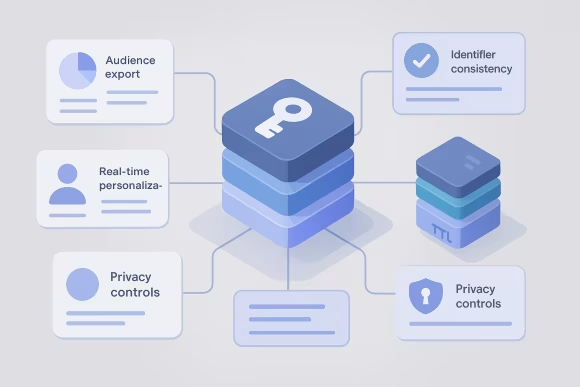

Customer identity graphs connect identifiers from multiple systems into a coherent representation of people, households, accounts, and devices. Customer identity graph architecture defines how identifiers are modeled, how links are created and updated, and how downstream systems consume resolved profiles. In CDP programs, this becomes the backbone for segmentation, measurement, personalization, and privacy controls.

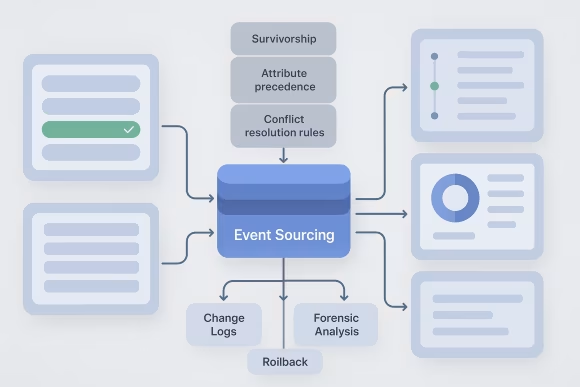

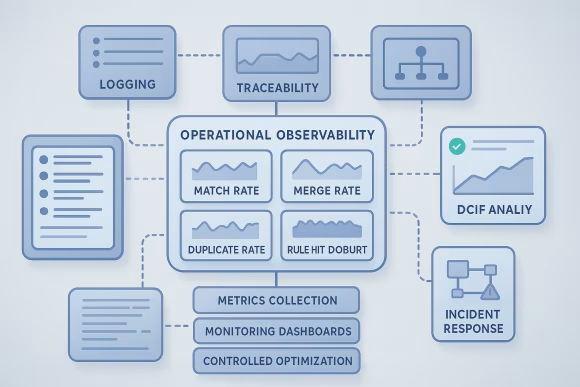

Organizations typically need cross-channel identity resolution architecture when data sources proliferate and identity rules become inconsistent across teams. Without a clear identity model and CDP identity resolution design, profile quality degrades, match rates become opaque, and activation pipelines drift from analytics truth. A well-defined approach establishes deterministic probabilistic identity matching, event-time vs batch reconciliation, survivorship rules, and confidence scoring.

At platform level, the work aligns data contracts, governance, and operational controls so identity can scale with new channels, regions, and consent regimes. The result is an extensible identity foundation that supports reliable integrations, auditable decisioning, and maintainable evolution of the CDP ecosystem.

[01]

[01]