[01]

[01]Discovery and Inventory

Collect current identity flows, source systems, and existing matching logic. Validate priority use cases and constraints, and establish baseline metrics such as duplicate rates and profile churn to measure impact.

An identity resolution strategy defines how a CDP and data platform create, match, merge, and maintain customer identities across channels, devices, and systems. In practice, it is the blueprint for cross-channel identity stitching and a unified customer profile identity architecture: which identifiers are authoritative, how relationships are represented (person, household, account), and when deterministic rules are sufficient versus when probabilistic signals are acceptable.

Organizations need this capability when data volumes grow, acquisition channels diversify, and multiple systems produce partial or conflicting identity signals. Without a clear strategy, teams implement ad-hoc stitching in downstream tools, producing inconsistent customer counts, unstable segments, and unreliable attribution.

A well-defined strategy supports scalable platform architecture by standardizing identity data contracts, match/merge logic, survivorship rules, and governance. It enables a maintainable identity graph that can be operationalized across CDP, analytics, and activation systems while respecting privacy constraints, consent, and regional compliance requirements.

As customer platforms expand, identity signals accumulate from web events, mobile apps, CRM records, email systems, and offline sources. Each system carries different identifiers and varying data quality, and teams often stitch identities locally to satisfy immediate reporting or activation needs. Over time, multiple competing definitions of “a customer” emerge, and profile counts fluctuate as rules change across tools.

This fragmentation creates architectural drift. Matching logic becomes embedded in ETL jobs, CDP configurations, and analytics queries with limited documentation or test coverage. When identifiers change (new login flows, cookie deprecation, CRM migrations), downstream breakage is hard to detect, and remediation requires coordinated changes across many pipelines. Data teams spend cycles reconciling duplicates, investigating unexpected merges, and explaining inconsistencies to stakeholders.

Operationally, unstable identity resolution increases risk: segments become unreliable, suppression lists fail, consent enforcement is inconsistent, and attribution models produce conflicting results. The platform becomes harder to govern because identity decisions are not auditable, and changes cannot be rolled out safely with clear impact analysis and rollback paths.

Inventory identity sources, identifiers, and current stitching logic across CDP, CRM, analytics, and activation tools. Map key use cases such as personalization, suppression, attribution, and reporting, and document constraints including consent, retention, and regional compliance.

Define the identity domain: person, account, household, device, and their relationships. Establish canonical entities and key attributes, and specify where each attribute is mastered, how it is updated, and how conflicts are resolved.

Classify identifiers by strength and scope (login ID, email, phone, device IDs, cookies, hashed identifiers). Define normalization rules, validation, and lifecycle handling such as rotation, re-verification, and deprecation.

Define a deterministic vs probabilistic identity matching strategy. Design deterministic rules and, where appropriate, probabilistic scoring inputs; specify thresholds, merge constraints, and exception handling (e.g., shared emails, family devices, B2B shared inboxes) to avoid over-merging and identity contamination.

Define precedence and survivorship for conflicting attributes, including source ranking, recency, and confidence. Specify how to handle nulls, partial updates, and attribute-level lineage to support auditability and downstream trust.

Establish privacy-aware identity resolution governance and change management for identity rules, including versioning, approvals, and impact assessment. Define monitoring for duplicates, merge rates, and anomalous spikes, and document runbooks for incident response and rollback.

Translate the strategy into implementable patterns for the target CDP and data platform. Define data contracts, pipeline responsibilities, testing requirements, and a phased rollout plan aligned to priority use cases and activation dependencies.

This service establishes the technical foundations required for CDP identity resolution design across an enterprise data ecosystem. It focuses on explicit modeling of identity entities and relationships, formalized deterministic and probabilistic identity matching strategy, and governance mechanisms that make identity decisions testable and auditable. The result is an identity layer that can evolve with new channels, privacy constraints, and platform migrations without destabilizing Customer 360 profiles or downstream activation.

Engagements are structured to produce implementable architecture and governance artifacts, not slideware. We work from current-state evidence, define decision points and trade-offs, and deliver specifications that engineering teams can implement and operate across CDP and data platforms.

[01]

[01]Collect current identity flows, source systems, and existing matching logic. Validate priority use cases and constraints, and establish baseline metrics such as duplicate rates and profile churn to measure impact.

[02]

[02]Analyze data quality, identifier coverage, and failure modes such as over-merging and under-linking. Document architectural hotspots where identity logic is duplicated across pipelines or tools.

[03]

[03]Define the identity domain model, identifier taxonomy, and the target operating model for where identity resolution runs. Specify data contracts, ownership boundaries, and how downstream systems consume identity outputs.

[04]

[04]Write deterministic and probabilistic matching specifications, merge constraints, and survivorship rules. Define exception handling, unmerge policies, and audit requirements to support regulated environments.

[05]

[05]Map identity outputs to CDP profiles, analytics identifiers, and activation destinations. Define how consent, suppression, and preference signals are enforced across channels and how identity versions are propagated.

[06]

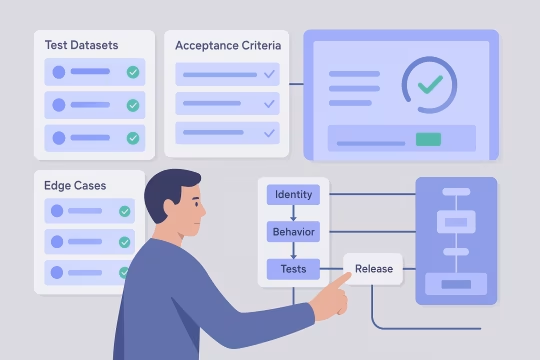

[06]Define test datasets, edge cases, and acceptance criteria for identity behavior. Establish monitoring requirements and release gates to detect regressions when sources or rules change.

[07]

[07]Deliver runbooks, change control workflows, and a versioning approach for identity rules. Align stakeholders on decision rights and provide implementation backlog items with clear priorities and dependencies.

A clear identity resolution strategy reduces platform ambiguity and makes Customer 360 outputs stable enough for operational use. It improves reliability of segmentation and measurement while lowering the cost of change when identifiers, channels, or regulations evolve.

Profiles become consistent across reporting and activation because identity rules are explicit and shared. Stakeholders see stable customer counts and fewer unexplained shifts after source or pipeline changes.

Normalization and deterministic matching reduce fragmentation caused by inconsistent identifiers. Lower duplication improves audience sizing, frequency capping, and downstream cost efficiency in activation platforms.

Governed merge constraints and unmerge policies reduce the chance of identity contamination. Monitoring and rollback paths make changes safer when onboarding new sources or updating matching logic.

Data contracts and identifier taxonomy provide a repeatable pattern for integrating new systems. Engineering teams spend less time reverse-engineering identity assumptions and more time implementing predictable pipelines.

Identity relationships are designed with consent and preference propagation in mind. This reduces the risk of activating profiles without appropriate permissions and supports regional compliance requirements.

Stable identity stitching improves continuity across sessions and channels, reducing noise in attribution and measurement. Analysts can interpret changes as real behavior shifts rather than identity artifacts.

Versioned rules, decision rights, and runbooks turn identity resolution into an operational capability. Teams can evolve the identity layer without reworking every downstream integration.

Adjacent capabilities that extend identity strategy into implementable architecture, data modeling, and operational CDP foundations.

Enterprise CRM data synchronization and identity mapping

Event-driven journeys across channels and products

CDP audience activation with governed delivery to channels

Audience sync activation engineering for CDP activation

CDP real-time decisioning design for real-time experiences

Customer analytics platform implementation for governed metrics and behavioral analytics

Unified customer profile architecture and insight-ready datasets

Scalable enterprise audience segmentation models and cohort definition frameworks

Consistent experiment tracking, metrics, and attribution

CDP event pipeline architecture and identity foundations

Unified customer profile design across identities and events

Customer profile and event schema engineering

Common architecture, operations, integration, governance, risk, and engagement questions for identity resolution strategy work.

We start by separating business semantics from implementation constraints. The identity domain model typically includes entities such as person, account, household, device, and sometimes location or subscription, plus the relationships between them. We define cardinality (one-to-many, many-to-many), relationship direction, and which edges are allowed to drive merges versus only provide context. Next, we map each entity and relationship to concrete data sources and identifiers. For example, a person may be anchored by a verified login ID, while a device relationship may be derived from app instance IDs. We also define which attributes are mastered where (CRM, support, ecommerce) and how updates propagate. Finally, we validate the model against priority use cases: segmentation, suppression, personalization, and measurement. If the model cannot support a use case without unsafe assumptions (e.g., household-based suppression in a shared email scenario), we adjust the model or constrain the use case. The output is a reference model that becomes the basis for data contracts and implementation patterns.

The right placement depends on latency requirements, governance needs, and how many downstream systems must consume identity outputs. Running identity resolution in the warehouse (or a dedicated identity service) often provides stronger versioning, testability, and auditability because the logic is expressed as code and can be validated with controlled datasets. It also makes identity outputs reusable across analytics and multiple activation tools. Running identity resolution in the CDP can be appropriate when the CDP is the primary system of record for profiles and you need near-real-time stitching for personalization. The trade-off is that CDP-native rule configuration can be harder to version, test, and reproduce outside the platform. In many enterprises, a hybrid approach works best: foundational identity entities and stable identifiers are resolved upstream (warehouse/lakehouse), while the CDP performs limited, well-governed stitching for real-time events. We document the boundary explicitly: which merges are authoritative, how identity versions are published, and how to prevent conflicting logic across layers.

We recommend monitoring metrics that detect both quality drift and unsafe behavior. Core metrics include duplicate rate (multiple profiles representing the same person), merge rate (how often identities are combined), unmerge rate (how often merges are reversed), and profile churn (how frequently a profile’s identifiers or key attributes change). Sudden spikes often indicate a source change, a parsing issue, or an overly permissive rule. Coverage metrics are equally important: percentage of events linked to a known identity, percentage of profiles with verified identifiers, and match yield by source. These show whether identity resolution is improving or degrading as new channels are added. Finally, add guardrails tied to risk: rate of merges driven by weak identifiers, number of profiles exceeding expected identifier counts, and consent propagation failures. We define thresholds, alerting, and investigation runbooks so teams can respond quickly. The goal is to treat identity as an operational system with observable behavior, not a static configuration.

We treat identity rules as versioned artifacts with a controlled release process. Changes start with an impact assessment: which identifiers and sources are affected, expected changes to merge behavior, and which downstream systems consume the identity outputs. We then run the new rules against a representative dataset to compare metrics such as duplicates, merges, and segment membership deltas. For rollout, we typically recommend a phased approach. First, publish the new identity version in parallel (shadow mode) while keeping the current version as the operational default. This allows validation with real traffic and real downstream queries without changing production behavior. Once validated, we coordinate a cutover window and communicate expected changes to stakeholders. We also define rollback criteria and procedures, including how to handle profiles created or merged during the transition. This approach reduces surprises in dashboards, attribution, and campaign audiences while still allowing the identity layer to evolve.

Onboarding starts with a source assessment focused on identifiers, data quality, and update behavior. We identify which identifiers the source provides, whether they are verified, and how stable they are over time. We also evaluate how the source represents entities (person vs account) and whether it introduces new relationships that must be modeled. Next, we define a data contract: required fields, normalization rules, validation checks, and how the source should publish changes (full snapshots vs incremental updates). We map the source into the identity domain model and specify which edges it can create. For example, a support system may contribute verified email and account relationships but should not drive merges based on free-text fields. Finally, we validate match yield and risk using test datasets and monitoring during an initial release. The goal is to integrate the source predictably, without silently changing the meaning of “customer” for downstream teams.

We start by defining which identifiers are allowed for activation (e.g., hashed email, phone, platform-specific IDs) and the consent requirements for each destination. We then map identity entities to activation needs: some channels require person-level identifiers, while others operate at account or household level. Next, we specify export rules and suppression logic. This includes how to prevent sending identities that are unverified, recently changed, or outside retention windows, and how to ensure opt-outs propagate across all linked identities. We also define how identity versions are represented so that activation systems can handle changes without duplicating audiences. Finally, we address operational concerns: rate limits, incremental exports, and reconciliation. We recommend maintaining an auditable record of what identifiers were exported, when, and under which consent state. This supports compliance inquiries and helps debug discrepancies between CDP audiences and destination platform counts.

Ownership should be split between decision rights and implementation responsibility. Typically, a data governance or Customer 360 steering group owns the definition of identity semantics: what constitutes a person, which identifiers are authoritative, and what merge constraints are required for risk management. Platform architecture and data engineering own implementation details: pipelines, performance, testing, and operational monitoring. Marketing operations and analytics stakeholders should be involved because they experience the downstream effects of identity changes in segmentation and reporting. Privacy and security stakeholders must have explicit approval points when identity resolution affects consent propagation, retention, or cross-region data movement. We formalize this as a RACI model and a change workflow. Identity rule changes should have documented rationale, expected impact, validation results, and an approval trail. This reduces ad-hoc changes made under delivery pressure and makes identity behavior stable and explainable over time.

Auditability requires lineage at both the identity and attribute levels. For identity links, we capture why a match occurred: which identifiers matched, which rule version was applied, and the timestamp and source of the contributing records. For probabilistic links, we record the signals used and the confidence score or threshold that triggered the link. For profile attributes, we define survivorship rules and store provenance: which source provided the current value, what precedence logic selected it, and what alternative values were available. This is especially important for regulated environments and for operational debugging when stakeholders question why a profile changed. We also recommend versioning identity rules and publishing release notes that summarize changes and expected impacts. Combined with monitoring metrics, this creates a traceable chain from a business question (“why did this customer move segments?”) to the underlying identity events and rule decisions that caused the change.

Over-merging is usually caused by treating weak or shared identifiers as unique. We mitigate this by classifying identifiers by strength and context, then restricting which identifiers can drive merges. For example, we may allow merges on verified login IDs and confirmed emails, but treat unverified emails, shared phone numbers, or device signals as relationships that add context rather than merge authority. We also implement merge constraints and anomaly detection. Constraints can include limits on how many distinct people can share an identifier, rules that prevent merges across incompatible account contexts, and quarantine paths when a record triggers conflicting signals. Monitoring focuses on spikes in merge rate, unusually large identity clusters, and sudden changes in identifier coverage. Finally, we define unmerge policies and operational procedures. Even with good design, edge cases occur. Having a documented and tested unmerge path reduces the long-term damage of a bad rule and makes teams more confident in evolving the identity layer safely.

Privacy constraints shape what identifiers you can store, how long you can retain them, and how you can link identities across contexts. We start by mapping consent categories and lawful bases to identity operations: collection, linking, enrichment, and activation. This determines which edges in the identity graph are permitted and under what conditions. Retention policies affect both identifiers and derived relationships. If an identifier must be deleted after a period, the identity graph must support edge expiration and downstream propagation so that activation and analytics do not continue using stale links. We also consider regional data residency: identity resolution may need to run in-region, or identity outputs must be partitioned to avoid cross-border linkage. We design for minimization and separation of concerns: store only required identifiers, prefer hashed or tokenized forms where appropriate, and ensure consent state is part of the identity contract. The goal is to make compliance enforceable by architecture, not dependent on manual process.

Deliverables are designed to be implementable by engineering teams and usable for governance. Typically this includes an identity domain model (entities, relationships, cardinality), an identifier taxonomy with normalization and validation rules, and a match/merge specification covering deterministic rules, optional probabilistic inputs, and merge constraints. We also deliver survivorship rules for key attributes, including precedence and lineage requirements, plus unmerge/split policies and exception handling. On the operational side, we provide monitoring metrics, alert thresholds, and runbooks for investigating anomalies and responding to incidents. Finally, we produce an operationalization plan: where identity resolution runs, how identity versions are published, data contracts for source onboarding, and a phased rollout backlog aligned to priority use cases. The intent is that teams can move directly from strategy to implementation without re-interpreting ambiguous guidance.

Timing depends on the number of sources, complexity of identity semantics, and the level of governance required. For a focused scope with a handful of primary sources (e.g., CRM, web/app events, email platform), strategy definition and specifications commonly take 4–8 weeks. This includes discovery, modeling, rule design, and validation planning. For larger enterprises with many sources, multiple regions, and strict compliance requirements, the work may extend to 8–12 weeks or be structured as phases. In those cases, we prioritize a minimal viable identity model and deterministic rules for the highest-value use cases, then expand coverage iteratively. We also distinguish between “strategy complete” and “fully implemented.” Implementation and rollout often run in parallel with strategy work once the core decisions are made, especially when teams need to stabilize Customer 360 outputs quickly. We plan milestones around decision points and measurable identity metrics rather than arbitrary dates.

Deterministic matching is preferred when you have strong, verified identifiers and when identity outputs drive operational actions such as suppression, consent enforcement, or regulated communications. Deterministic rules are easier to explain, test, and audit, and they produce stable behavior that downstream teams can rely on. Probabilistic matching can be useful when deterministic identifiers are sparse, especially for analytics use cases that benefit from broader linkage (e.g., cross-device measurement). The key is to constrain where probabilistic links are allowed and to avoid using them as authoritative merges for operational profiles unless you have strong governance and clear risk acceptance. We typically recommend a layered approach: deterministic identity as the core Customer 360, with probabilistic relationships represented as separate edges or annotations that can be used selectively. This allows teams to gain value from weaker signals without contaminating the canonical identity layer or creating hard-to-reverse merges.

We version identity rules the same way you version APIs: with explicit releases, documented changes, and compatibility expectations. Each rule set has an identifier (version number or date-based tag), and identity outputs include the version used to compute matches and survivorship. This makes it possible to reproduce results and compare behavior across releases. Backward compatibility depends on downstream dependencies. If reporting and activation systems cannot tolerate sudden changes, we recommend publishing identity outputs in parallel for a period (v1 and v2) and providing mapping guidance for consumers. For example, segments may be computed against v2 while dashboards continue to use v1 until stakeholders validate the deltas. We also define deprecation policies: how long old versions remain available, how to migrate consumers, and what metrics must be stable before retiring a version. This reduces operational risk and prevents identity logic from becoming an unmanageable set of one-off exceptions.

Identity resolution is highly sensitive to data quality because small inconsistencies in identifiers can produce large downstream effects. Common issues include unnormalized emails and phones, placeholder values, recycled identifiers, inconsistent account keys, and late-arriving updates. These lead to under-linking (fragmented profiles) or over-linking (incorrect merges). We address this by defining validation and normalization as part of the identity contract, not as an optional cleanup step. Sources must meet minimum quality thresholds to participate in matching, and we specify how to handle invalid or ambiguous identifiers (reject, quarantine, or treat as non-mergeable context). We also recommend ongoing quality monitoring tied to identity metrics: identifier coverage by source, invalid rate, and changes in match yield. When quality drifts, teams can isolate the source and prevent it from driving merges until it is corrected. This keeps the identity layer stable even when upstream systems are imperfect.

Collaboration typically begins with a short alignment and discovery phase focused on scope and evidence. We start with stakeholder interviews across data, architecture, marketing operations, analytics, and privacy to confirm the priority use cases and the decisions that must be made (identity semantics, merge constraints, consent propagation). In parallel, we request a lightweight inventory of sources, identifiers, and current stitching logic. Next, we run a working session to map the current identity flow end-to-end: where identifiers are created, how they are transformed, where merges happen today, and which downstream systems consume identity outputs. We also agree on baseline metrics to measure change, such as duplicate rate and profile churn. From there, we propose a phased plan with clear decision points, required inputs, and a delivery cadence that fits your teams. The first tangible outputs are usually the identity domain model and identifier taxonomy, followed by match/merge specifications and governance controls that engineering can implement incrementally.

These case studies showcase practical implementations of identity resolution strategies and customer data platform integrations. They highlight approaches to unify customer identifiers, enforce data governance, and enable consistent Customer 360 profiles across complex digital ecosystems. The selected work demonstrates scalable architectures, match/merge logic, and operational governance aligned with the identity resolution strategy service.

These articles expand on the governance, implementation, and operational choices that shape a reliable identity resolution program. They cover schema contracts, merge-risk pitfalls, consent enforcement, and activation ownership so you can see how identity strategy holds up in practice.

Let’s review your current identity stitching, clarify decision points, and produce implementable rules and governance for reliable Customer 360 profiles.