[01]

[01]Discovery and Audit

Review current CDP configuration, sources, destinations, and operational pain points. Inventory event schemas, tracking plans, identity rules, and transformations to establish a baseline and identify high-risk areas.

CDP platform architecture design defines how customer events are collected, validated, enriched, resolved into identities, and delivered to downstream systems with clear governance. If you’re asking how to architect a customer data platform, this covers the end-to-end design: tracking taxonomy, event transport, schema management, identity strategy, consent enforcement, and activation integrations.

Organizations need this capability when multiple products, channels, and teams generate customer data with inconsistent definitions and uneven quality. Without an explicit architecture, CDP implementations tend to grow as a set of ad-hoc connectors and tracking scripts, making it difficult to trust metrics, control privacy, or evolve the platform safely.

A well-defined architecture provides stable interfaces (data contracts), predictable operational behaviors (replay, backfill, failure handling), and a governance model that scales across engineering and analytics. It enables platform teams to treat customer data as an engineered system: observable, testable, and maintainable as the CDP ecosystem expands.

As digital platforms scale, customer data collection usually expands faster than the underlying architecture. Teams add new events, properties, and destinations to meet immediate product and reporting needs. Over time, the CDP becomes a mix of inconsistent naming, duplicated semantics, and undocumented transformations, with different teams interpreting the same event differently.

Architecturally, this creates fragmentation across collection SDKs, routing rules, identity logic, and downstream mappings. Without explicit data contracts and lifecycle controls, schema drift becomes normal, backfills are risky, and identity stitching produces unstable profiles. Engineering teams spend significant time debugging “why numbers changed,” reconciling event definitions, and handling edge cases like retries, ordering, and partial failures.

Operationally, the platform becomes difficult to govern. Privacy and consent requirements are hard to enforce consistently across sources and destinations. Changes to tracking or routing can introduce silent breakage, and incident response is slowed by limited observability into event throughput, delivery guarantees, and transformation behavior. The result is slower delivery, higher maintenance overhead, and reduced confidence in analytics and activation workflows.

Assess current CDP setup, event sources, destinations, identity approach, and operational constraints. Review tracking plans, schemas, routing rules, and data consumers to identify inconsistencies, bottlenecks, and risk areas.

Define event taxonomy aligned to product domains and customer journeys. Establish naming conventions, required properties, versioning rules, and ownership boundaries so teams can evolve events without breaking downstream consumers.

Design the target CDP architecture across collection, transport, transformation, identity, and activation. Specify system boundaries, data contracts, SLAs, and failure modes, including replay/backfill strategies and environment separation.

Map integrations to warehouses, analytics tools, marketing platforms, and internal services. Define routing patterns, transformation responsibilities, and destination-specific constraints, including rate limits, PII handling, and data minimization.

Implement governance workflows for event changes, approvals, and documentation. Define roles, ownership, and controls for schema evolution, access management, consent enforcement, and auditability across teams.

Specify metrics, logs, and traces for event throughput, delivery success, latency, and transformation errors. Establish alerting thresholds, dashboards, and incident runbooks to support reliable operations.

Introduce automated checks for schema conformance, required fields, and destination mappings. Define test datasets and replay scenarios to validate identity behavior, transformations, and downstream compatibility before rollout.

Create a phased implementation plan with migration steps, deprecation policies, and measurable milestones. Align platform evolution with product delivery cycles and long-term maintainability requirements.

This service establishes the technical foundations required to run a CDP as a governed platform rather than a collection of connectors. It focuses on CDP event pipeline and identity resolution architecture, event semantics, schema lifecycle, and integration patterns that remain stable as volume, teams, and destinations grow. The result is an architecture that supports reliable delivery, controlled change, and operational visibility across the customer data supply chain.

Engagements are structured to produce an implementable CDP platform architecture design with clear contracts, governance, and operational controls. We work with platform, data, and product engineering to align event semantics, integration boundaries, and reliability requirements, then translate decisions into a phased roadmap and implementation-ready specifications.

[01]

[01]Review current CDP configuration, sources, destinations, and operational pain points. Inventory event schemas, tracking plans, identity rules, and transformations to establish a baseline and identify high-risk areas.

[02]

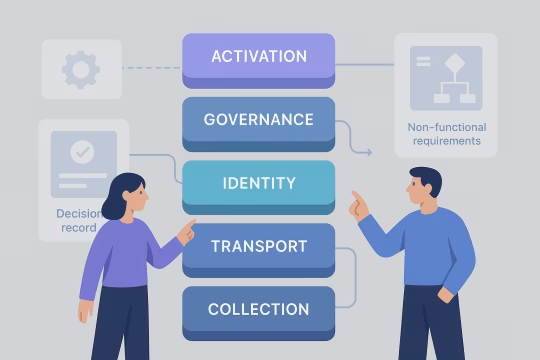

[02]Design the target architecture and its boundaries across collection, transport, identity, governance, and activation. Document decision records, non-functional requirements, and failure-mode expectations to guide implementation.

[03]

[03]Define event contracts, naming conventions, and ownership. Produce a tracking taxonomy and schema lifecycle rules that teams can apply consistently across products and channels.

[04]

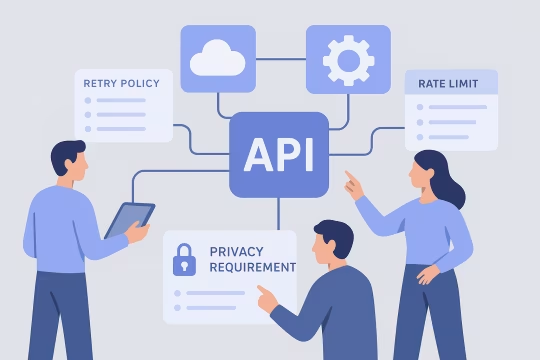

[04]Specify destination mappings, routing rules, and transformation responsibilities. Address destination constraints, privacy requirements, and operational behaviors such as retries, rate limits, and backfills.

[05]

[05]Establish workflows for proposing, reviewing, and releasing event changes. Define access controls, consent enforcement points, and documentation standards to keep the platform maintainable across teams.

[06]

[06]Implement monitoring requirements and data quality checks for schema conformance, delivery health, and identity anomalies. Provide dashboards, alerts, and runbooks aligned to platform operations.

[07]

[07]Plan and support phased migrations, including parallel runs, validation, and deprecation of legacy events. Reduce risk by sequencing changes and providing rollback and replay strategies.

[08]

[08]Set up a cadence for reviewing taxonomy drift, destination changes, and platform performance. Maintain architectural integrity through periodic audits, backlog grooming, and updates to contracts and governance.

A clear CDP architecture reduces ambiguity in customer data, stabilizes downstream activation, and makes change safer as the ecosystem grows. The impact is primarily realized through fewer data incidents, faster onboarding of new sources and destinations, and improved confidence in analytics and customer experiences.

Teams ship tracking changes with clear contracts and ownership, reducing rework and review loops. Standardized taxonomy and versioning reduce the time spent aligning semantics across products.

Consistent event definitions and validation reduce metric volatility and conflicting interpretations. Stakeholders can rely on stable schemas and documented transformations when making decisions.

Defined failure modes, replay strategies, and runbooks reduce the blast radius of destination outages and configuration errors. Observability enables earlier detection and faster incident response.

Architected routing, identity, and transformation patterns support higher event volumes and more destinations without uncontrolled complexity. Platform boundaries make it easier to scale teams and workloads independently.

Governed schema evolution and deprecation policies prevent long-lived legacy events and one-off mappings. Architectural decision records keep the platform coherent as requirements change.

Consent enforcement points and PII handling patterns reduce inconsistent data exposure across destinations. Access controls and auditability support regulated environments and internal governance needs.

Stable destination mappings and identity behavior improve the reliability of audience building and downstream personalization. Changes become traceable and testable before they impact campaigns or experiences.

Adjacent services that extend Enterprise CDP engineering into identity resolution architecture, tracking governance, and operational reliability across the customer data ecosystem.

Enterprise CRM data synchronization and identity mapping

Event-driven journeys across channels and products

CDP audience activation with governed delivery to channels

Audience sync activation engineering for CDP activation

CDP real-time decisioning design for real-time experiences

Customer analytics platform implementation for governed metrics and behavioral analytics

Unified customer profile architecture and insight-ready datasets

Scalable enterprise audience segmentation models and cohort definition frameworks

Consistent experiment tracking, metrics, and attribution

Unified customer profile design across identities and events

Customer profile and event schema engineering

CDP identity resolution design for unified customer profiles

Answers to common questions on how to architect a customer data platform, including CDP data governance and privacy architecture, integration patterns, operational controls, and engagement approach.

CDP platform architecture defines the end-to-end system design that sits around and within a CDP tool. Beyond configuring sources and destinations, it specifies event semantics (taxonomy and data contracts), schema lifecycle and versioning, identity resolution rules, transformation boundaries, consent enforcement, and operational behaviors such as retries, replay, and backfills. It also covers how the CDP interacts with the broader data platform: warehouses/lakehouses, streaming infrastructure, catalogs, experimentation, analytics engineering models, and activation endpoints. A key output is a reference architecture that makes responsibilities explicit: what is owned by product teams, what is owned by the platform/data team, and what is enforced automatically. In practice, this reduces “configuration sprawl” by introducing stable interfaces and governance. The goal is to make the CDP behave like an engineered platform with predictable change management, observability, and clear failure modes, rather than a set of ad-hoc connectors that are hard to reason about at enterprise scale.

We treat events as contracts between producers (apps/services) and consumers (analytics, activation, data science). The design starts with domain modeling: define the business concepts and journeys that events represent, then map them to a consistent naming convention and property model. Required vs optional properties are explicit, and ownership is assigned so changes have accountable reviewers. Versioning is designed to minimize breaking changes. Common patterns include additive changes (new optional properties), controlled deprecations with timelines, and explicit event version fields when destinations or consumers cannot tolerate schema drift. We also define compatibility rules and validation gates so producers cannot emit events that violate required fields or types. For long-lived products, the architecture includes a deprecation policy, documentation standards, and migration playbooks (parallel events, dual-write periods, and backfill/replay strategies). This keeps the event layer evolvable without forcing large, risky “tracking rewrites.”

Reliable CDP operations require controls across throughput, delivery guarantees, transformation behavior, and identity stability. We define observability for event volume, latency, delivery success/failure by destination, transformation error rates, and schema violation counts. These signals are paired with alert thresholds and on-call runbooks so incidents are actionable. We also design operational mechanisms for replay and backfill. That includes where raw events are retained, how to reprocess transformations deterministically, and how to avoid duplication (idempotency keys, ordering assumptions, and deduplication strategies). For destinations with rate limits or strict schemas, we define buffering and retry strategies and how failures are surfaced. Finally, we establish environment separation (dev/stage/prod), release management for configuration changes, and audit trails. The goal is to make CDP changes observable and reversible, and to reduce the time spent diagnosing downstream issues caused by silent routing or schema drift.

We define observability at three layers: pipeline health, contract compliance, and consumer impact. Pipeline health includes throughput, lag/latency, delivery success rates, and destination-specific error categories. Contract compliance includes schema validation, required-field presence, type checks, and controlled vocabularies for key properties. Data quality is most effective when it is automated and close to the point of change. We introduce validation gates in CI where possible (for tracking plan changes and schema updates) and runtime checks where necessary (for live event streams). We also recommend sampling and anomaly detection for high-volume streams to detect sudden shifts in event counts, property distributions, or identity merge rates. To connect quality to outcomes, we map key events to critical reports and activation flows and monitor those dependencies. This helps teams prioritize fixes based on impact rather than treating all schema violations as equal severity.

We start by defining the system of record for raw events and the boundary for transformations. A common pattern is to retain immutable raw events (either in the CDP’s export or a streaming sink) and perform durable modeling in the warehouse/lakehouse, while keeping CDP-side transformations minimal and focused on activation needs. We design a clear mapping between event contracts and warehouse tables, including partitioning, late-arriving event handling, and deduplication rules. If reverse ETL or audience activation depends on modeled tables, we ensure the modeling layer is versioned and tested, and that changes are communicated through governance workflows. The architecture also addresses operational realities: backfills, reprocessing, and reconciliation between CDP exports and warehouse ingestion. The goal is to avoid “two sources of truth” where the CDP and warehouse apply different transformations that drift over time, producing inconsistent metrics and audiences.

We design consent and privacy as first-class constraints in the event contract and routing architecture. That includes defining which properties are PII, which events are sensitive, and how consent state is represented and propagated. We then specify enforcement points: at collection (SDK), at ingestion (CDP), and at activation (destination routing). A practical approach is to minimize PII in event payloads, use pseudonymous identifiers where possible, and centralize consent state in a system that can be referenced consistently. Routing rules must ensure that events or properties are filtered based on consent and regional policies before they reach destinations. We also define auditability: what was collected, what was activated, and why. This includes change logs for routing rules, access controls for who can modify destinations, and retention policies for raw events. The goal is consistent enforcement across channels without relying on manual processes.

Governance works when it is lightweight, explicit, and automated where possible. We define ownership for event domains and a workflow for proposing changes: new events, property additions, renames, and deprecations. Each change has reviewers (typically platform/data plus domain owners) and clear acceptance criteria tied to the event contract. We also establish documentation standards and a single source of truth for the tracking plan and schemas. Where the CDP supports it, we align configuration to that source; where it does not, we create a controlled process to keep documentation and configuration synchronized. To keep governance from becoming a bottleneck, we introduce tiers of change. Additive, low-risk changes can be fast-tracked, while breaking changes require migration plans, dual-write periods, and consumer sign-off. The objective is to enable frequent change while preventing silent breakage in analytics and activation.

Schema drift is primarily an ownership and lifecycle problem, not just a tooling problem. We address it by defining domain boundaries and assigning accountable owners for event groups. Each domain has conventions, required properties, and compatibility rules, so teams have a shared framework for change. We then implement drift detection and enforcement. That includes schema validation (types, required fields), naming convention checks, and monitoring for unexpected property emergence. For high-impact events, we recommend stronger controls such as pre-release validation in staging environments and controlled rollouts. Finally, we define deprecation and cleanup mechanisms. Drift often accumulates because nothing is removed. A deprecation policy with timelines, dashboards showing usage by consumers, and migration playbooks (including backfills where necessary) keeps the schema surface area manageable. Over time, this reduces the cognitive load for teams onboarding to the CDP ecosystem.

The main risks are breaking downstream consumers, losing historical continuity, and introducing identity instability. Downstream breakage happens when event names, property types, or destination mappings change without a compatibility plan. Historical continuity is at risk when new schemas are not reconciled with existing warehouse models and reporting definitions. Identity instability is often underestimated. Changes to identifier precedence, merge rules, or the timing of identity events can materially change profile counts and audience membership. That can impact marketing activation and experimentation results, even if the event stream “looks correct.” We mitigate these risks with phased migrations: parallel events or dual-write periods, validation against known reports, controlled rollouts by source or domain, and explicit rollback plans. We also define replay/backfill strategies early so teams can correct issues without manual patching. The goal is to evolve architecture while maintaining operational continuity and stakeholder confidence.

We separate the conceptual model (contracts, taxonomy, identity rules, governance) from tool-specific configuration. The conceptual model is documented in a tool-agnostic way: event definitions, versioning rules, transformation specifications, and destination mappings. Where possible, we keep critical logic in portable layers such as warehouse transformations or shared libraries rather than proprietary UI-only rules. For CDP-specific features that provide real value (e.g., certain identity or routing capabilities), we document them as explicit architectural decisions with alternatives and constraints. We also recommend retaining raw events in a durable store outside the CDP so reprocessing and migration remain feasible. The practical outcome is optionality: you can change tools without redefining your event language or rebuilding every integration. Even when you stay on the same CDP, this approach improves maintainability because the platform is governed by contracts and documentation rather than tribal knowledge embedded in configuration screens.

Deliverables are designed to be implementable by platform and product teams. Typical outputs include a reference architecture showing system boundaries and data flows; an event taxonomy and tracking plan with naming conventions, required properties, and ownership; and a schema lifecycle model covering versioning, deprecation, and compatibility rules. We also provide integration designs for key destinations and warehouse/lakehouse ingestion, including transformation boundaries and operational behaviors (retries, buffering, replay/backfill). Governance deliverables include change workflows, access control patterns, consent enforcement points, and documentation standards. Operational deliverables include observability requirements (dashboards, alerts, key metrics), runbooks for common failure modes, and an implementation roadmap with phased migration steps. If you already have an implementation in place, we include a gap analysis and prioritized remediation plan tied to risk and platform impact.

Collaboration typically begins with a short discovery phase focused on understanding your current CDP landscape and the decisions you need to make. We start with stakeholder interviews across platform, data engineering, analytics, and activation owners, then review existing artifacts such as tracking plans, schema documentation, CDP configuration exports, and key downstream models or dashboards. Next, we run a structured audit of event sources and destinations: what is collected, how it is transformed, how identity is resolved, and where failures occur. We also identify constraints such as regulatory requirements, organizational ownership boundaries, and delivery timelines. From there, we align on scope and success criteria and propose a phased plan: immediate stabilization actions (if needed), target architecture definition, and a roadmap for implementation and migration. This ensures early clarity on priorities and reduces the risk of producing architecture that cannot be executed within your operating model.

These case studies showcase real-world implementations of customer data platform architectures, focusing on event pipeline design, identity resolution, governance, and activation integrations. They highlight how enterprise teams have standardized data flows, ensured operational reliability, and enabled scalable customer data ecosystems across multiple products and channels. The selected examples provide measurable proof of architectural strategies and governance models that align closely with CDP platform architecture principles.

These articles expand on the architecture and governance decisions behind a reliable CDP platform. They cover schema evolution, contract ownership, consent enforcement, and activation patterns that help customer data flows stay stable as the platform grows.

Let’s review your current CDP flows, event contracts, identity strategy, and operational controls, then define an implementable target architecture and migration roadmap.