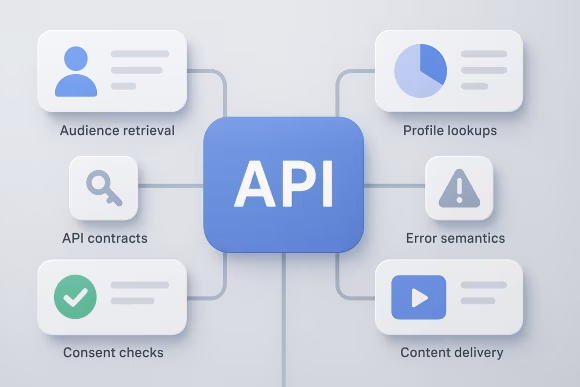

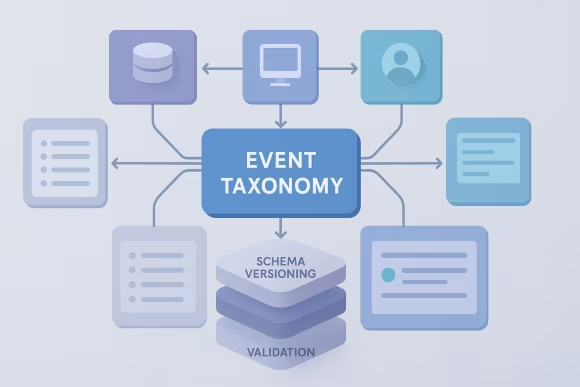

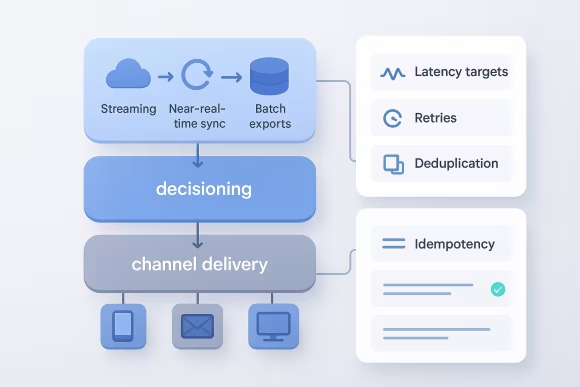

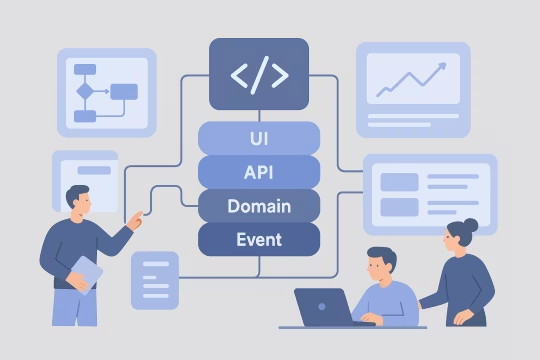

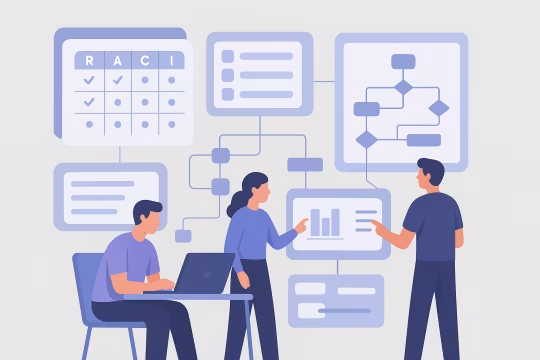

Composable martech architecture design defines how marketing capabilities are assembled from specialized tools while remaining coherent as a platform. Instead of a single suite, organizations compose a CDP, analytics, experimentation, personalization, messaging, DAM, and content systems through well-defined APIs, events, and shared identity and consent models. This creates an API-first martech ecosystem design that can scale across teams and vendors when contracts and ownership are explicit.

Organizations need this capability when tool sprawl, overlapping responsibilities, and inconsistent data contracts start to slow delivery and increase risk. A composable approach can improve agility, but only when the architecture establishes clear domain boundaries (profiles, audiences, content, offers, journeys), integration patterns (sync vs event-driven), and an operational model for martech platforms that clarifies ownership and change control.

This service focuses on creating a platform-level blueprint that supports scalable evolution: marketing technology reference architecture, integration standards, governance, and an operating model that aligns marketing, data, and engineering teams. The result is an ecosystem that can change vendors and add capabilities without repeatedly reworking identity, tracking, activation pipelines, and compliance controls.

[01]

[01]