Most design systems do not fail because the components are bad.

They fail because the organization expects adoption to happen as a matter of principle while product teams are measured on something else.

A central team can build a strong component library, publish documentation, define tokens, and run reviews. But if product teams are still rewarded for shipping local solutions faster, protecting roadmap commitments, and avoiding external dependencies, the system will be treated as optional. In practice, optional usually means partial adoption, delayed adoption, or symbolic adoption.

That is why design system adoption is not primarily a documentation problem. It is an operating model problem.

In enterprise environments, product teams make rational decisions based on delivery pressure, ownership boundaries, support expectations, and the cost of change. If the design system does not fit those realities, even a technically solid system can stall.

Stalled adoption is especially common in enterprise design systems, where multiple product lines, release cadences, and architecture constraints create friction that a component catalog alone cannot solve.

The common misunderstanding: quality does not create adoption by itself

Design system teams often assume adoption follows a simple sequence:

- Build reusable components.

- Document them well.

- Socialize the benefits.

- Watch teams migrate.

That sequence sounds reasonable, but it leaves out the actual decision point inside product delivery.

A product team does not ask, "Is this system well designed?"

It asks:

- Will this help us hit our delivery target?

- Will it reduce or increase implementation risk?

- Can we get support when the system does not fit our use case?

- Are we allowed to influence the roadmap?

- Who absorbs the cost of migration?

- What happens if the system blocks a release?

If those questions do not have credible answers, adoption slows down.

This is why many organizations overestimate the value of awareness campaigns and underestimate the importance of incentives and workflow design. Teams rarely resist a system because they disagree with standardization in theory. They resist when standardization creates local cost without local benefit.

Adoption barriers are usually structural, not cultural

It is easy to describe low adoption as a culture issue. That framing is often too vague to be useful.

A more operational view is to look for structural barriers in the delivery system.

Common barriers include:

- Product teams are measured on feature throughput, not platform alignment.

- Migration work is not funded or scheduled.

- The design system roadmap is disconnected from product roadmap timing.

- Contribution requires too much ceremony or too much specialized knowledge.

- Teams cannot get timely answers when components do not meet real requirements.

- Governance is enforced late through review rather than early through enablement.

- The system team owns standards but not enough implementation support.

- Exceptions are easier than adoption.

None of these are abstract culture problems. They are operating decisions.

If a team can ship a custom component in two days but needs three weeks to request, discuss, and wait for a system change, the organization has already chosen fragmentation. The custom component is not a failure of discipline. It is the predictable result of the current process.

Product teams respond to incentives, whether explicit or implicit

Every delivery organization has an incentive model, even if it is undocumented.

If teams are praised for speed, they optimize for speed.

If they are penalized for defects, they optimize for local control.

If they are judged by roadmap predictability, they avoid dependencies that introduce uncertainty.

A design system becomes adoptable when it aligns with those incentives instead of competing with them.

That usually means the system must create visible advantages for product teams, such as:

- Faster implementation of common UI patterns

- Lower accessibility and consistency risk

- Reduced design and engineering rework

- Better upgrade paths than local component ownership

- Clear support channels when edge cases appear

- A realistic way to influence the system when product needs are valid

The key point is simple: teams adopt what helps them deliver.

If the system is framed only as a compliance requirement, adoption will be shallow. Teams may use the visible parts of the system while recreating behavior, styling, or workflows underneath. That creates the appearance of alignment without the operational benefits.

Governance should be tied to delivery behavior

Design system governance is often treated as a set of standards, review checkpoints, and approval rules. Those things matter, but they are not enough.

Governance should answer a more practical question: how does the organization want delivery teams to behave when they need UI patterns, component changes, or exceptions?

A useful governance model defines:

- What teams must use by default

- What teams can extend locally

- What requires contribution back to the system

- What qualifies as a valid exception

- Who decides, and within what timeframe

- How decisions are documented and revisited

This matters because governance without delivery pathways becomes a bottleneck. Teams then route around it.

A better model is to make the preferred behavior the lowest-friction behavior.

For example:

- If a standard component exists, it should be easier to consume than to rebuild.

- If a needed variation is missing, there should be a lightweight intake path.

- If the variation has broad value, there should be a clear contribution path.

- If the request is truly product-specific, local extension rules should be explicit.

- If an exception is granted, it should have an owner and a review date.

This is where governance becomes operational rather than aspirational.
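
One way to make the last rule concrete is to record each exception as data that can be queried. The following is a minimal sketch, not a prescribed tool: the `GovernanceException` record, its field names, and the sample entries are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class GovernanceException:
    """A granted deviation from the system (hypothetical record shape)."""
    component: str     # the local component that replaces a standard one
    owner: str         # team accountable for the exception
    reason: str
    review_date: date  # when the exception must be revisited


def overdue_exceptions(exceptions, today):
    """Return exceptions whose review date has passed, oldest first."""
    overdue = [e for e in exceptions if e.review_date < today]
    return sorted(overdue, key=lambda e: e.review_date)


# Illustrative data only.
exceptions = [
    GovernanceException("checkout-date-picker", "payments",
                        "range selection not supported", date(2024, 3, 1)),
    GovernanceException("admin-data-grid", "back-office",
                        "virtualized rows needed", date(2024, 9, 1)),
]

for e in overdue_exceptions(exceptions, today=date(2024, 6, 1)):
    print(f"{e.component} (owner: {e.owner}) was due for review on {e.review_date}")
```

Keeping the owner and review date on the record itself means an exception cannot quietly become permanent: a periodic check surfaces every entry that is past its date.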

A design system contribution model is often the missing layer

Many systems have usage guidance but only a weak contribution model.

That gap is costly.

When product teams find a mismatch between the system and real delivery needs, they need more than a statement that contributions are welcome. They need a path that is understandable, time-bounded, and compatible with normal sprint planning.

A workable contribution model usually includes four stages.

1. Intake

Teams need a simple way to submit:

- Missing component requests

- Variant requests

- Accessibility or usability issues

- API improvements

- Documentation gaps

- Token or theming needs

The intake should capture enough context to evaluate the request:

- Product use case

- User need

- Frequency across products

- Current workaround

- Delivery timeline

- Design and engineering constraints

Without this context, the system team cannot prioritize well. Without a standard intake path, requests arrive informally and disappear into chat threads or meetings.
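
The context fields listed above could be captured in a small structured form rather than free text. This is a sketch under assumptions: the `IntakeRequest` shape and the choice of which fields are required are illustrative, not part of any particular tracker.

```python
from dataclasses import dataclass, fields


@dataclass
class IntakeRequest:
    request_type: str        # e.g. "variant", "missing component"
    product_use_case: str
    user_need: str
    products_affected: int   # rough frequency across products
    current_workaround: str
    needed_by: str           # delivery timeline, free text or ISO date
    constraints: str = ""    # design and engineering constraints (optional here)


def missing_context(request):
    """List the required text fields that were left blank."""
    optional = {"constraints"}
    missing = []
    for f in fields(request):
        value = getattr(request, f.name)
        if f.name not in optional and isinstance(value, str) and not value.strip():
            missing.append(f.name)
    return missing


request = IntakeRequest(
    request_type="variant",
    product_use_case="Bulk edit toolbar in the orders table",
    user_need="",
    products_affected=3,
    current_workaround="Local fork of the toolbar component",
    needed_by="2024-Q3",
)
print(missing_context(request))  # ['user_need']
```

A check like this can run when the request is submitted, so incomplete requests are returned immediately instead of stalling in triage.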

2. Triage

Not every request should become a system change.

Triage should classify requests into categories such as:

- Documentation issue

- Bug fix

- Local extension allowed

- Candidate for shared pattern

- Requires design exploration

- Not aligned with system principles

This step is important because it protects the system from becoming a dumping ground for every product-specific need while still giving teams a fair hearing.

The triage outcome should also be fast. If teams wait too long for a decision, they will implement locally and move on.
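
The categories and the speed expectation can both be made explicit. The sketch below assumes a five-day response target and a simple dictionary shape for requests; both are placeholders to adapt, not recommendations from a specific process.

```python
from datetime import date, timedelta
from enum import Enum


class TriageOutcome(Enum):
    DOCUMENTATION_ISSUE = "documentation issue"
    BUG_FIX = "bug fix"
    LOCAL_EXTENSION_ALLOWED = "local extension allowed"
    SHARED_PATTERN_CANDIDATE = "candidate for shared pattern"
    NEEDS_DESIGN_EXPLORATION = "requires design exploration"
    NOT_ALIGNED = "not aligned with system principles"


TRIAGE_TARGET = timedelta(days=5)  # assumed response target


def awaiting_triage_too_long(requests, today):
    """Return ids of requests with no outcome that are older than the target."""
    return [
        r["id"]
        for r in requests
        if r["outcome"] is None and today - r["submitted"] > TRIAGE_TARGET
    ]


# Illustrative data only.
requests = [
    {"id": "REQ-1", "submitted": date(2024, 5, 1), "outcome": TriageOutcome.BUG_FIX},
    {"id": "REQ-2", "submitted": date(2024, 5, 2), "outcome": None},
    {"id": "REQ-3", "submitted": date(2024, 5, 9), "outcome": None},
]
print(awaiting_triage_too_long(requests, today=date(2024, 5, 10)))  # ['REQ-2']
```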

3. Delivery path

Once a request is accepted, the organization needs a clear answer to a practical question: who does the work?

There are several valid models:

- The central system team designs and builds it.

- The product team contributes implementation with system team review.

- A paired model splits design and engineering responsibilities.

- A federated team owns the work for a domain and contributes upstream.

The right model depends on team maturity and capacity. What matters is that the model is explicit.

A contribution process fails when product teams are told to contribute but are not given the supporting structure to do so, such as:

- Coding standards

- Design review expectations

- Testing requirements

- Release process guidance

- Maintainer support

In that situation, contribution becomes unpaid platform work with unclear acceptance criteria. Most teams will avoid it unless they have no alternative.

4. Adoption and maintenance

Contribution is not complete when code is merged.

The system team should define how new capabilities are:

- Released

- Documented

- Communicated

- Versioned

- Supported after launch

If teams contribute once and then discover they are effectively long-term maintainers of a shared asset without agreement, future contributions will decline.

A healthy contribution model makes ownership boundaries visible from the start.

Delivery support matters as much as standards

A common anti-pattern in enterprise design systems is to centralize standards while decentralizing all migration and implementation risk.

That sounds efficient on paper, but it often produces low adoption.

Product teams usually need delivery support in at least three areas.

Migration planning

Adoption often requires replacing local components, updating patterns, or aligning design and engineering workflows. That work competes with roadmap commitments.

If leadership wants adoption, migration cannot be treated as invisible background work. Teams need:

- Scope estimates

- Sequencing guidance

- Dependency visibility

- Upgrade notes

- A realistic way to phase changes over time

A system team does not need to do every migration, but it should reduce the planning burden.

Implementation support

Even well-designed systems create edge cases.

Teams need reliable support for questions like:

- How should this component be composed in a complex flow?

- Is this a valid extension or a misuse?

- How should tokens be applied in this product context?

- What is the recommended fallback when the component API does not fit?

If support is slow or inconsistent, teams will create local patterns to keep moving.

Release confidence

Product teams are more likely to adopt a system when they trust its release process.

That trust usually comes from:

- Predictable versioning

- Clear changelogs

- Backward compatibility guidance

- Deprecation windows

- Testing expectations

- Fast response to regressions

Without that, the system feels like an external risk source rather than a delivery accelerator.
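
Predictable versioning is easiest to reason about when version numbers carry meaning. As an illustration only, assuming the library follows semantic versioning with plain `MAJOR.MINOR.PATCH` numbers (the article does not prescribe a scheme), a consuming team could classify an upgrade before scheduling it:

```python
def parse_version(version):
    """Parse 'MAJOR.MINOR.PATCH' into a tuple of ints."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def upgrade_kind(current, target):
    """Classify an upgrade as 'major', 'minor', 'patch', or 'none'."""
    cur, tgt = parse_version(current), parse_version(target)
    if tgt <= cur:
        return "none"
    if tgt[0] != cur[0]:
        return "major"  # may include breaking changes; plan migration time
    if tgt[1] != cur[1]:
        return "minor"  # additive changes
    return "patch"      # fixes only


print(upgrade_kind("3.4.1", "4.0.0"))  # major
print(upgrade_kind("3.4.1", "3.5.0"))  # minor
```

This sketch ignores pre-release tags and the special treatment of `0.x` versions. The point is that a team can only plan around releases if the version number reliably signals how much work an upgrade implies.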

Make adoption the default path, not the heroic path

A useful test for any design system program is this: does adoption require exceptional effort from product teams?

If the answer is yes, adoption will remain uneven.

The goal is not to force every team into strict uniformity. The goal is to make the system the default path for common needs and a practical platform for shared evolution.

That usually means reducing friction in the full lifecycle:

- Discovering the right component

- Understanding when to use it

- Installing and integrating it

- Extending it safely

- Requesting changes

- Contributing improvements

- Upgrading over time

When those steps are easier than local reinvention, adoption becomes more durable.

When they are harder, governance turns into negotiation.

Measuring health: adoption is more than usage counts

Teams often measure design system adoption with a single number, such as the percentage of products using the component library. That can be useful, but it is incomplete.

A healthier measurement approach looks at both coverage and operating behavior.

Useful signals can include:

- Number of products using the system in production

- Depth of usage across core components or tokens

- Rate of local overrides or forks

- Time to triage incoming requests

- Time from accepted request to released capability

- Number of approved exceptions and how long they remain open

- Migration backlog size

- Upgrade lag across consuming products

- Contribution volume and acceptance rate

- Support response time for delivery blockers

These measures are more informative because they show whether the system is functioning as a platform.

For example, high usage with high override rates may indicate nominal adoption but weak fit. Low contribution volume may indicate stability, or it may indicate that teams do not believe the contribution path is worth the effort. A growing exception backlog may signal that governance is disconnected from product reality.
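
Several of these signals are simple to compute once the underlying data is collected. The following is a rough sketch with made-up numbers; the field names and the choice of summary statistics are assumptions, not a standard.

```python
from statistics import median

# Hypothetical per-product data; field names are illustrative.
products = [
    {"name": "orders", "system_components": 40, "overridden": 4, "versions_behind": 0},
    {"name": "billing", "system_components": 25, "overridden": 10, "versions_behind": 3},
    {"name": "insights", "system_components": 10, "overridden": 1, "versions_behind": 1},
]
triage_days = [1, 2, 2, 6, 14]  # days from submission to triage decision


def override_rate(product):
    """Share of consumed system components that carry local overrides."""
    return product["overridden"] / product["system_components"]


def health_summary(products, triage_days):
    """Combine coverage and behavior signals into one summary."""
    return {
        "products_in_production": len(products),
        "worst_override_rate": max(override_rate(p) for p in products),
        "median_triage_days": median(triage_days),
        "max_upgrade_lag": max(p["versions_behind"] for p in products),
    }


summary = health_summary(products, triage_days)
print(summary)
```

In this made-up data, all three products count as adopters, yet one of them overrides 40% of the components it consumes and is three versions behind. That is the nominal-adoption pattern a usage count alone would hide.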

The point is not to create a dashboard for its own sake. The point is to observe whether the system is changing delivery behavior in the intended direction.

What leadership should do differently

If adoption is a strategic goal, leadership has to treat it as a delivery system concern, not just a design quality initiative.

That often means making a few decisions explicit.

Fund adoption work

If migration and alignment work are required, they need capacity. Otherwise teams will defer them indefinitely.

Set expectations for shared standards

Teams should know which parts of the system are mandatory, which are recommended, and where flexibility is acceptable.

Reward contribution, not just consumption

If product teams help improve shared assets, that work should be recognized as valuable delivery work, not side labor.

Hold the system team accountable for service quality

A central team should not be measured only by output such as number of components shipped. It should also be measured by responsiveness, usability, and the ease with which product teams can adopt and extend the system.

Align roadmap timing

If major product initiatives are coming, the system roadmap should anticipate the patterns and capabilities those initiatives will need.

These are practical management choices. They do more for adoption than another awareness session or documentation refresh would on its own.

A pragmatic operating model for adoption

For organizations trying to improve adoption without overcorrecting into heavy process, a pragmatic model often looks like this:

- Define a small set of mandatory foundations, such as tokens, accessibility baselines, and core components.

- Publish explicit extension rules so teams know what can remain local.

- Create a lightweight request intake and triage process with clear response times.

- Offer a documented contribution path with templates, standards, and maintainer support.

- Provide migration guidance for high-value adoption targets.

- Track exceptions, overrides, and support demand as leading indicators.

- Review governance decisions against real delivery outcomes every quarter.

This kind of model respects a basic truth of enterprise delivery: standardization works best when it is opinionated about shared value and realistic about local variation.

Final thought

A design system is not adopted because people agree it is important.

It is adopted when product teams can use it to deliver with less risk, less duplication, and less friction than building alone.

That is why strong components and good documentation, while necessary, are not sufficient. Without aligned incentives, a credible design system contribution model, and real delivery support, adoption remains fragile.

The organizations that succeed tend to treat the system as part of how products get built, not as a parallel initiative asking teams to behave better. They tie design system governance to actual delivery behavior. They make contribution possible. They reduce the cost of doing the right thing.

In the end, that is what sustainable design system adoption looks like: not enthusiasm around the system itself, but a delivery environment where using and improving the system is the most practical choice for the teams shipping products.

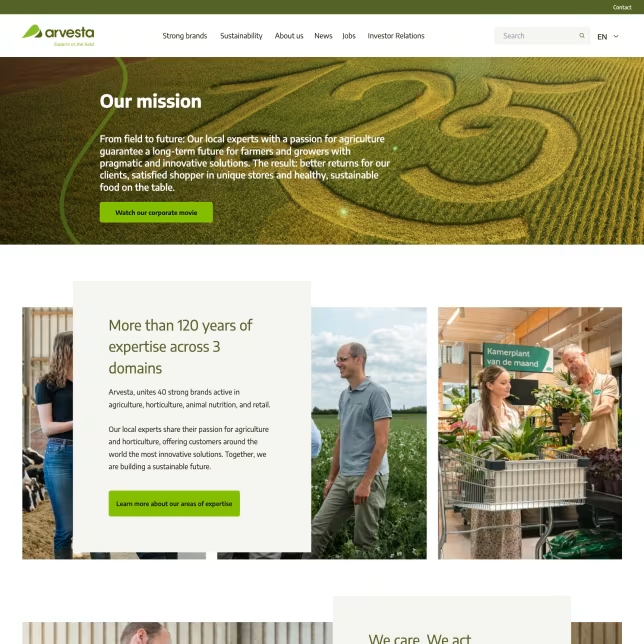

A practical example of that pattern can be seen at Arvesta, where Storybook-based UI governance and shared component workflows helped reinforce consistency across teams without separating standards from delivery reality.
