When enterprise teams talk about analytics quality, they often focus on tags, tools, or dashboards. But the deeper issue usually sits one layer below that: ownership.

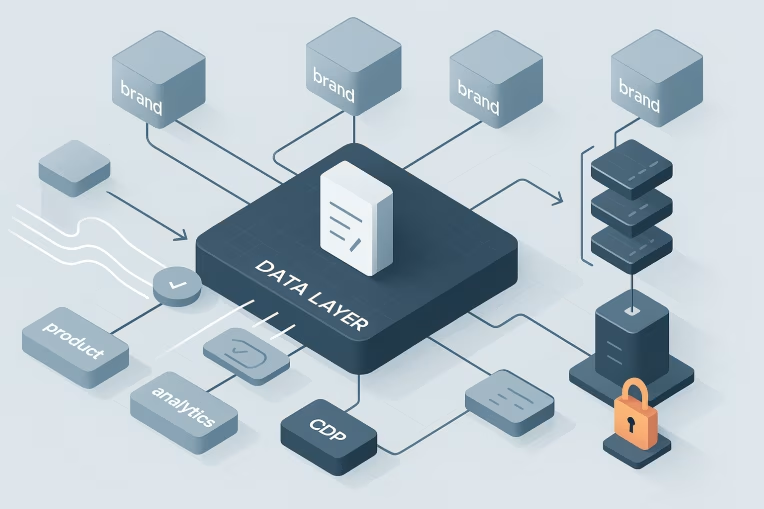

A web data layer is not just a technical structure for passing page and interaction data. It is the shared interface between product decisions, frontend behavior, analytics reporting, CDP ingestion, activation use cases, and experimentation workflows. When that interface is treated as an implementation detail, tracking quality becomes fragile very quickly.

This is especially true for multi-brand platforms, federated teams, and shared component architectures. One team changes a form flow. Another renames a property. A third team launches a campaign with new identifiers. Everything can still appear to work in the browser while downstream reporting, audience building, or experiment analysis quietly breaks.

The solution is not more tagging activity. It is a clearer operating model. The data layer needs explicit ownership and a contract that different teams agree to maintain.

Why data layers break as platforms scale

In smaller environments, tracking can survive informal coordination. A few people understand the implementation, releases are limited, and downstream consumers are close to the source of change.

As platforms scale, those conditions disappear.

A multi-brand web platform typically introduces several forms of complexity at once:

- shared templates and components used by different product teams

- local brand requirements layered onto a common platform

- multiple consumers of the same event stream, including analytics, CDP, CRM, experimentation, and marketing teams

- delivery teams releasing at different cadences

- tag manager logic and direct instrumentation existing side by side

Under those conditions, the data layer stops being a convenience object and becomes a dependency surface.

That is where common failure modes emerge:

- Schema drift: the same event means slightly different things across brands or product areas

- Undocumented changes: payload fields are renamed, removed, or reformatted without notice

- Partial implementation: one team emits a new event but another team does not include the required identifiers

- Downstream breakage: dashboards, CDP mappings, activation logic, or experiment analysis silently stop working as expected

- Ambiguous ownership: everyone assumes someone else is validating tracking changes

The important point is that these failures are usually operational, not purely technical. Teams often know how to implement tracking. What they lack is a durable model for deciding who defines it, who approves change, and who verifies that the implementation still supports business use cases.

The ownership gap between analytics, engineering, and marketing teams

In many organizations, the data layer sits in an awkward space between disciplines.

Analytics teams often define reporting requirements but do not control frontend release workflows. Engineering teams implement the data layer but may not understand all downstream reporting or audience dependencies. Marketing or martech teams consume the data for activation but may have limited visibility into how data is generated or changed.

That creates predictable gaps.

If engineering owns the data layer alone, it can become optimized for what is easiest to ship rather than what is most durable for downstream consumers.

If analytics owns it alone, the specification can become detached from real application behavior, release constraints, and frontend architecture.

If martech owns it alone, the focus can skew toward campaign or activation needs without enough attention to product semantics and reusable event design.

In practice, data layer ownership should be shared, but not vague.

A workable model usually includes distinct responsibilities:

- Product owners or digital product managers define the business meaning of key user interactions and outcomes.

- Analytics leads define measurement requirements, event naming standards, and reporting implications.

- Frontend engineering or platform teams own technical implementation patterns, component-level consistency, and release integration.

- Martech or CDP teams define downstream ingestion requirements, identity implications, and activation dependencies.

- QA or release stakeholders verify that tracking changes are tested as part of deployment, not after complaints appear.

Shared ownership does not mean shared ambiguity. It means each function has a clear decision boundary.

For example, engineering should not unilaterally redefine what a form_submit event means. Analytics should not require properties that the application cannot reliably produce. Martech should not add campaign-related fields to core events without understanding how that affects reuse across brands. Product should not introduce a new conversion step without considering how it fits into the existing measurement model.

The best enterprise teams make these boundaries explicit and document them.

What a tracking contract should define

A contract model gives teams a stable reference point for the data layer. It is less about documentation for its own sake and more about reducing interpretation risk.

A useful tracking contract should define at least four categories of information.

1. Event definitions

Each event should have a clear business meaning, not just a technical trigger.

For example:

- product_view should specify when a product is considered viewed, such as on page render of a product detail page rather than when a component first mounts in any context

- login should specify whether it represents login initiation, successful authentication, or session recognition

- form_submit should specify whether it means button click, client-side validation pass, or confirmed successful submission

Without these definitions, teams can emit technically similar events that represent different stages of a user journey.
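To keep definitions like these reviewable and versioned, some teams store them next to the code. The TypeScript sketch below shows one possible shape for such a registry. The field names (`meaning`, `firesWhen`, `doesNotFireWhen`) and the `describeEvent` helper are hypothetical, and the trigger choices for `login` and `form_submit` are example decisions a team might make, not recommendations.

```typescript
// Hypothetical contract entries. Event names mirror the examples in the text;
// field names and the chosen trigger for each event are illustrative only.
type EventName = "product_view" | "login" | "form_submit";

interface EventDefinition {
  meaning: string;           // business meaning agreed by product and analytics
  firesWhen: string;         // the one trigger condition the contract allows
  doesNotFireWhen: string[]; // look-alike moments that must not emit this event
}

const eventDefinitions: Record<EventName, EventDefinition> = {
  product_view: {
    meaning: "a user has been shown a product detail page",
    firesWhen: "a product detail page has rendered",
    doesNotFireWhen: [
      "a product component mounts in another context, such as a recommendation module",
    ],
  },
  login: {
    // Assumption: this contract defines login as successful authentication.
    meaning: "a user has successfully authenticated",
    firesWhen: "the authentication service has confirmed the credentials",
    doesNotFireWhen: ["the login form is opened", "an existing session is recognized"],
  },
  form_submit: {
    // Assumption: this contract defines form_submit as confirmed success.
    meaning: "a form submission has been accepted",
    firesWhen: "the server has confirmed a successful submission",
    doesNotFireWhen: ["the submit button is clicked", "client-side validation passes"],
  },
};

// Renders one definition as a sentence suitable for tickets or review threads.
function describeEvent(name: EventName): string {
  const d = eventDefinitions[name];
  return `${name}: fires when ${d.firesWhen}; never when ${d.doesNotFireWhen.join(" or ")}`;
}
```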

2. Required and optional properties

The contract should identify:

- required fields

- optional fields

- accepted formats

- allowed values where relevant

- which properties are inherited from page context versus interaction context

For a product_view event, that might include product identifier, product name, category, price context, currency, and brand identifier. For a form_submit event, it might include form name, page context, submission status, and campaign or source attributes when available.

The point is not to create bloated payloads. It is to define the minimum viable structure that downstream systems can depend on.
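A required/optional property specification like this can be made machine-checkable. The sketch below assumes a hypothetical `PropertySpec` shape and a `validatePayload` helper. Which `product_view` fields are required, and the three-letter uppercase currency pattern (in the style of ISO 4217 codes), are illustrative assumptions, not part of any standard data layer.

```typescript
// Hypothetical property specification for one event in the tracking contract.
type FieldType = "string" | "number";

interface PropertySpec {
  type: FieldType;
  required: boolean;
  pattern?: RegExp;         // accepted format
  allowedValues?: string[]; // closed value list, where relevant
}

// Assumption: which fields are required is a contract decision; this is one example.
const productViewSpec: Record<string, PropertySpec> = {
  product_id: { type: "string", required: true },
  product_name: { type: "string", required: true },
  category: { type: "string", required: false },
  price: { type: "number", required: false },
  currency: { type: "string", required: false, pattern: /^[A-Z]{3}$/ }, // e.g. "EUR"
  brand_id: { type: "string", required: true },
};

// Returns human-readable contract violations; an empty array means conforming.
// Fields present in the payload but absent from the spec are ignored here.
function validatePayload(
  spec: Record<string, PropertySpec>,
  payload: Record<string, unknown>,
): string[] {
  const errors: string[] = [];
  for (const field of Object.keys(spec)) {
    const rule = spec[field];
    const value = payload[field];
    if (value === undefined || value === null) {
      if (rule.required) errors.push(`missing required field: ${field}`);
      continue;
    }
    if (typeof value !== rule.type) {
      errors.push(`${field}: expected ${rule.type}, got ${typeof value}`);
      continue;
    }
    if (typeof value === "string") {
      if (rule.pattern && !rule.pattern.test(value)) {
        errors.push(`${field}: "${value}" does not match the accepted format`);
      }
      if (rule.allowedValues && rule.allowedValues.indexOf(value) === -1) {
        errors.push(`${field}: "${value}" is not an allowed value`);
      }
    }
  }
  return errors;
}
```

The same spec can back both a pre-release test and a production sampling job, so the two stay in agreement about what "conforming" means.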

3. Ownership and approval rules

A tracking contract should state:

- who can propose a new event

- who approves naming and semantics

- who validates technical feasibility

- who signs off on downstream impact

- who owns the source-of-truth documentation

This is where many teams fall short. They define events but not governance. As a result, the specification exists, but no one knows how change is supposed to happen.

4. Implementation and release expectations

The contract should also describe operational expectations, such as:

- whether events are emitted directly in application code, through a shared tracking library, or through a tag manager pattern

- when instrumentation must be included in delivery tickets

- what test evidence is required before release

- how production validation is performed after launch

This keeps the data layer connected to delivery practice instead of treating it as an afterthought.
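When events are emitted through a shared tracking library, that library is a natural place to enforce the contract. The following is a minimal sketch under stated assumptions, not a reference implementation: `createTracker`, its parameters, and the `onViolation` callback are hypothetical. In a browser the sink would often be the `window.dataLayer` array that Google Tag Manager reads; here it is passed in so the sketch runs outside a browser.

```typescript
type Payload = Record<string, unknown>;

interface Tracker {
  // Returns true if the event was pushed to the sink.
  track(event: string, payload: Payload): boolean;
}

// Hypothetical factory; the contract is reduced here to required fields per event.
function createTracker(
  sink: Payload[],
  requiredByEvent: Record<string, string[]>,
  onViolation: (event: string, problems: string[]) => void,
): Tracker {
  return {
    track(event: string, payload: Payload): boolean {
      // hasOwnProperty avoids matching inherited keys such as "toString".
      if (!Object.prototype.hasOwnProperty.call(requiredByEvent, event)) {
        onViolation(event, ["event is not in the contract"]);
        return false;
      }
      const missing = requiredByEvent[event].filter((field) => payload[field] === undefined);
      if (missing.length > 0) {
        onViolation(event, missing);
        return false;
      }
      // GTM custom events are pushed as objects carrying an `event` key.
      sink.push({ event, ...payload });
      return true;
    },
  };
}
```

Dropping a non-conforming event, as this sketch does, protects downstream consumers from malformed data but can hide volume. Some teams may prefer to block in test environments and push-and-alert in production; that is a design choice the contract should state.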

Change management for events, properties, and downstream dependencies

The hardest part of data layer governance is not initial definition. It is change management.

Enterprise platforms change constantly. New features are introduced. Legacy journeys are retired. Brands request variations. Identity logic evolves. Campaign structures shift. The contract has to support change without making every update slow or bureaucratic.

A practical change model starts by recognizing that not all changes have the same impact.

Low-impact changes

These might include:

- adding a non-required property with no downstream dependency

- extending an allowed value list in a controlled way

- enabling an existing event on an additional brand template without changing its semantics

These changes can often move through lightweight review.

Medium-impact changes

These might include:

- introducing a new event for a new user journey

- adding a required property for a class of events

- changing the conditions under which an event fires

These usually need review from analytics and engineering, with visibility to martech or CDP stakeholders.

High-impact changes

These might include:

- renaming an event already used in reporting or activation

- changing property meaning or format for a field used downstream

- removing fields consumed by dashboards, audience rules, or experimentation analysis

- changing login, identity, or campaign attribution behavior

These changes need explicit impact assessment before release.
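The impact tiers above can be encoded as a first-pass triage rule, for example in a pull request check. In this sketch the change kinds, the `ContractChange` shape, and the escalation rules are assumptions: anything touching identity or attribution is always high impact, breaking changes with no known downstream consumers drop to medium, and otherwise low-impact changes rise to medium when something depends on them.

```typescript
type Impact = "low" | "medium" | "high";

// Hypothetical change record; field names and kinds are illustrative.
interface ContractChange {
  kind:
    | "add_optional_property"
    | "extend_allowed_values"
    | "enable_on_brand"
    | "new_event"
    | "add_required_property"
    | "change_trigger"
    | "rename_event"
    | "change_property_format"
    | "remove_property";
  touchesIdentityOrAttribution: boolean; // login, identity, or campaign attribution
  downstreamConsumers: string[];         // dashboards, CDP mappings, audiences, experiments
}

function classifyChange(change: ContractChange): Impact {
  // Assumption: identity and attribution changes are always high impact.
  if (change.touchesIdentityOrAttribution) return "high";
  const hasConsumers = change.downstreamConsumers.length > 0;
  switch (change.kind) {
    case "rename_event":
    case "change_property_format":
    case "remove_property":
      // Breaking changes: high when something downstream depends on them.
      return hasConsumers ? "high" : "medium";
    case "new_event":
    case "add_required_property":
    case "change_trigger":
      return "medium";
    default:
      // add_optional_property, extend_allowed_values, enable_on_brand
      return hasConsumers ? "medium" : "low";
  }
}
```

The result is only as reliable as the dependency inventory behind `downstreamConsumers`, which is why the dependency review step matters.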

A simple but effective review workflow can include:

- Change request: define what is changing and why.

- Business meaning review: confirm the event still represents the intended user action or state.

- Technical review: confirm the implementation path is realistic and consistent with event tracking architecture.

- Dependency review: identify affected dashboards, CDP mappings, activation flows, and experiment setups.

- Release decision: approve, defer, or require mitigation.

- Post-release validation: confirm the live payload matches the approved contract.

This does not need to be heavy process. In well-run teams, it can be embedded into normal delivery practices through tickets, pull request templates, release checklists, and shared documentation.

The key is that the review exists before the change reaches production.
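One way to keep this review lightweight is to scale the required steps to the impact tier. The mapping below is an assumption for illustration (only high-impact changes go through all six steps), and `outstandingSteps` and `canRelease` are hypothetical helpers that a release checklist or CI job might call.

```typescript
type Impact = "low" | "medium" | "high";

type ReviewStep =
  | "change_request"
  | "business_meaning_review"
  | "technical_review"
  | "dependency_review"
  | "release_decision"
  | "post_release_validation";

// Assumption: lighter changes skip some steps; every tier keeps post-release validation.
const stepsByImpact: Record<Impact, ReviewStep[]> = {
  low: ["change_request", "technical_review", "post_release_validation"],
  medium: [
    "change_request",
    "business_meaning_review",
    "technical_review",
    "release_decision",
    "post_release_validation",
  ],
  high: [
    "change_request",
    "business_meaning_review",
    "technical_review",
    "dependency_review",
    "release_decision",
    "post_release_validation",
  ],
};

function outstandingSteps(impact: Impact, completed: ReviewStep[]): ReviewStep[] {
  const done = new Set(completed);
  return stepsByImpact[impact].filter((step) => !done.has(step));
}

// Every step except post-release validation must be done before the change ships.
function canRelease(impact: Impact, completed: ReviewStep[]): boolean {
  return outstandingSteps(impact, completed).every((step) => step === "post_release_validation");
}
```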

Validation workflows before release and after launch

Even a well-defined contract fails if validation is informal.

Many teams still rely on one of two weak patterns:

- someone checks the browser console and considers tracking complete

- analytics discovers a problem only after reports look wrong

Neither is enough for enterprise delivery.

Validation should happen at two levels: pre-release and post-launch.

Before release

Before code reaches production, teams should validate:

- that the right events fire for the intended user journeys

- that required properties are present

- that values conform to expected formats

- that identifiers are mapped correctly across page types or components

- that events do not duplicate unexpectedly in asynchronous or component-driven interfaces

For example, a product detail page should not emit multiple product_view events because a recommendation module re-renders. A login flow should distinguish between a login attempt and confirmed success. A form submission event should not fire on validation error if the business definition requires successful submit.

This kind of validation belongs in delivery workflows. It can be part of QA acceptance, implementation review, or automated checks where practical. The exact mechanism is less important than the discipline of treating tracking as a release criterion.
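As one example of an automated check, events captured during an end-to-end test run can be scanned for duplicates such as the re-rendered `product_view` described above. This sketch assumes each captured event carries a hypothetical `pageViewId` identifying the page load; `findDuplicates` is illustrative and not an existing test-library API.

```typescript
// Hypothetical shape of an event captured during an end-to-end test run.
interface CapturedEvent {
  event: string;
  pageViewId: string; // assumption: one id per page load
  [field: string]: unknown;
}

// Returns "pageViewId|key" for every entity that emitted `eventName`
// more than once within the same page load.
function findDuplicates(events: CapturedEvent[], eventName: string, keyField: string): string[] {
  const counts = new Map<string, number>();
  for (const e of events) {
    if (e.event !== eventName) continue;
    const key = `${e.pageViewId}|${String(e[keyField])}`;
    counts.set(key, (counts.get(key) ?? 0) + 1);
  }
  const duplicates: string[] = [];
  counts.forEach((count, key) => {
    if (count > 1) duplicates.push(key);
  });
  return duplicates;
}
```

A test could assert that `findDuplicates(captured, "product_view", "product_id")` returns an empty array. A check such as "no `form_submit` after a validation error" would instead drive that journey and assert that the captured events contain no `form_submit`.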

After launch

Production validation matters because real environments introduce conditions that lower environments often miss:

- consent behavior

- third-party script timing

- campaign parameters arriving in unexpected combinations

- brand-specific content or templates

- identity state differences across sessions and devices

Post-launch validation should confirm that production data matches the approved contract and that downstream systems are still receiving what they expect.

This does not require every stakeholder to inspect every payload. It requires a defined handoff and accountability model. Someone must verify the implementation, someone must verify the analytics view, and someone must confirm downstream ingestion or activation is not broken.
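Post-launch verification can start from sampled production payloads. The sketch below computes, per event and brand, the share of samples that carry every required field; grouping by brand reflects the brand-specific templates listed above. The `Sample` shape, treating empty strings as missing, and alerting below a threshold are all assumptions. Payloads limited by consent may legitimately omit fields and would need their own rule.

```typescript
// Hypothetical shape of one sampled production payload.
interface Sample {
  event: string;
  brand: string;
  payload: Record<string, unknown>;
}

interface ConformanceRow {
  event: string;
  brand: string;
  total: number;
  conforming: number;
  rate: number; // conforming / total
}

function conformanceReport(samples: Sample[], required: Record<string, string[]>): ConformanceRow[] {
  const rows = new Map<string, ConformanceRow>();
  for (const s of samples) {
    if (!Object.prototype.hasOwnProperty.call(required, s.event)) continue; // not in contract
    const key = `${s.event}|${s.brand}`;
    const row = rows.get(key) ?? { event: s.event, brand: s.brand, total: 0, conforming: 0, rate: 0 };
    row.total += 1;
    // Assumption: empty strings count as missing.
    const complete = required[s.event].every(
      (field) => s.payload[field] !== undefined && s.payload[field] !== "",
    );
    if (complete) row.conforming += 1;
    row.rate = row.conforming / row.total;
    rows.set(key, row);
  }
  return Array.from(rows.values());
}

// Rows that should be routed to the named owner for follow-up.
function belowThreshold(report: ConformanceRow[], threshold: number): ConformanceRow[] {
  return report.filter((row) => row.rate < threshold);
}
```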

Governance patterns for multi-brand and multi-team environments

Governance becomes more important as the number of teams and brands increases, but that does not mean governance should become centralized in a way that slows delivery.

A strong model usually combines central standards with local implementation responsibility.

Central standards

A platform or governance group typically owns:

- canonical event naming conventions

- shared definitions for common interactions such as product views, logins, and form submissions

- required contextual fields such as brand, page type, locale, or campaign identifiers where applicable

- approval rules for changes that affect multiple brands or downstream platforms

- source-of-truth documentation and review workflows

This creates consistency where it matters most.

Local implementation responsibility

Brand teams or product squads typically own:

- implementing the approved events in their journeys

- mapping local business context to standard fields

- proposing additions where brand-specific requirements are valid

- validating that shared standards work correctly in their release context

This preserves delivery speed while keeping the model aligned.

The governance challenge is usually not whether standardization is desirable. It is deciding where variation is acceptable.

A good rule of thumb is:

- standardize semantics and core fields aggressively

- allow limited extension fields where real business differences exist

- review exceptions centrally when they could affect cross-brand comparability or downstream activation

That approach helps avoid two common mistakes.

The first is over-standardization, where teams force very different interactions into the same event and lose meaning.

The second is uncontrolled divergence, where every brand defines similar user actions differently and shared reporting becomes unreliable.
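The "standardize core fields, allow limited extensions" rule can be enforced structurally. This sketch assumes brand-specific additions live under a single `ext` object and that the core field list shown is what the central group owns; both are illustrative choices, and `checkFieldPlacement` is a hypothetical helper.

```typescript
// Assumption: the centrally owned core fields; the real list is a governance decision.
const CORE_FIELDS = ["event", "brand", "page_type", "locale", "campaign_id"];
const REQUIRED_CORE = ["event", "brand", "page_type", "locale"];

type Payload = Record<string, unknown>;

// Flags missing core fields and any top-level field that is neither core,
// part of the event's contract, nor the `ext` extension namespace.
function checkFieldPlacement(payload: Payload, eventFields: Record<string, string[]>): string[] {
  const problems: string[] = [];
  for (const field of REQUIRED_CORE) {
    if (payload[field] === undefined) problems.push(`missing core field: ${field}`);
  }
  const rawEvent = payload["event"];
  const eventName = typeof rawEvent === "string" ? rawEvent : "";
  const contractFields = Object.prototype.hasOwnProperty.call(eventFields, eventName)
    ? eventFields[eventName]
    : [];
  const allowed = new Set<string>([...CORE_FIELDS, ...contractFields, "ext"]);
  for (const key of Object.keys(payload)) {
    if (!allowed.has(key)) problems.push(`unreviewed top-level field: ${key}`);
  }
  const ext = payload["ext"];
  if (ext !== undefined && (typeof ext !== "object" || ext === null || Array.isArray(ext))) {
    problems.push("ext must be a plain object");
  }
  return problems;
}
```

Anything flagged as an unreviewed top-level field is a candidate either for the `ext` namespace or for central review as an exception.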

A practical operating model for enterprise teams

For most enterprise organizations, a workable model looks something like this:

- establish a single source of truth for the data layer contract

- assign named owners across product, analytics, engineering, and martech

- define a lightweight review path for event and payload changes

- classify changes by business and downstream impact

- include tracking validation in release criteria

- confirm production behavior after launch

- regularly review common events across brands to detect drift
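The cross-brand drift review in the last step can be partly automated by comparing which fields each brand actually sends for the same event. The sketch below is hypothetical (`Observed` and `detectDrift` are illustrative names) and only catches structural drift such as a renamed property. Semantic drift, where the same event fires at a different moment, still needs human review against the event definitions.

```typescript
// Hypothetical summary of fields observed for one event on one brand.
interface Observed {
  brand: string;
  event: string;
  fields: string[];
}

// For each event, reports the fields each brand is missing relative to the
// union of fields seen across all brands. Events with no gaps are omitted.
function detectDrift(observations: Observed[]): Record<string, Record<string, string[]>> {
  const byEvent = new Map<string, Map<string, Set<string>>>();
  for (const o of observations) {
    const brands = byEvent.get(o.event) ?? new Map<string, Set<string>>();
    const fields = brands.get(o.brand) ?? new Set<string>();
    o.fields.forEach((f) => fields.add(f));
    brands.set(o.brand, fields);
    byEvent.set(o.event, brands);
  }
  const report: Record<string, Record<string, string[]>> = {};
  byEvent.forEach((brands, event) => {
    const union = new Set<string>();
    brands.forEach((fields) => fields.forEach((f) => union.add(f)));
    const gaps: Record<string, string[]> = {};
    brands.forEach((fields, brand) => {
      const missing = Array.from(union).filter((f) => !fields.has(f)).sort();
      if (missing.length > 0) gaps[brand] = missing;
    });
    if (Object.keys(gaps).length > 0) report[event] = gaps;
  });
  return report;
}
```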

This model is intentionally tool-agnostic. It can support platforms that use a tag manager, direct frontend instrumentation, or a mix of both. The governance principle is the same either way: the data layer is a shared contract, not an informal byproduct of implementation.

That distinction matters because analytics quality is rarely lost in one dramatic failure. More often, it erodes through small local changes that seem harmless in isolation. A renamed property here, a missing identifier there, a quietly redefined event meaning somewhere else. Over time, the platform still emits data, but confidence in that data declines.

Once that happens, dashboards become harder to trust, CDP audiences become harder to maintain, experimentation results become harder to interpret, and teams spend more time diagnosing tracking issues than using data to make decisions.

Treating data layer ownership as a contract discipline helps prevent that erosion. It gives teams a way to move quickly without turning every release into a hidden risk for analytics, activation, and measurement. In practice, that often means pairing governance with disciplined data layer implementation and release validation patterns proven on large multi-brand platforms such as Organogenesis.

For enterprise and multi-brand platforms, that is the real goal. Not more events. Better governed ones.

Tags: data layer ownership, CDP, web tracking governance, enterprise event tracking, analytics contract model, multi-brand tracking architecture