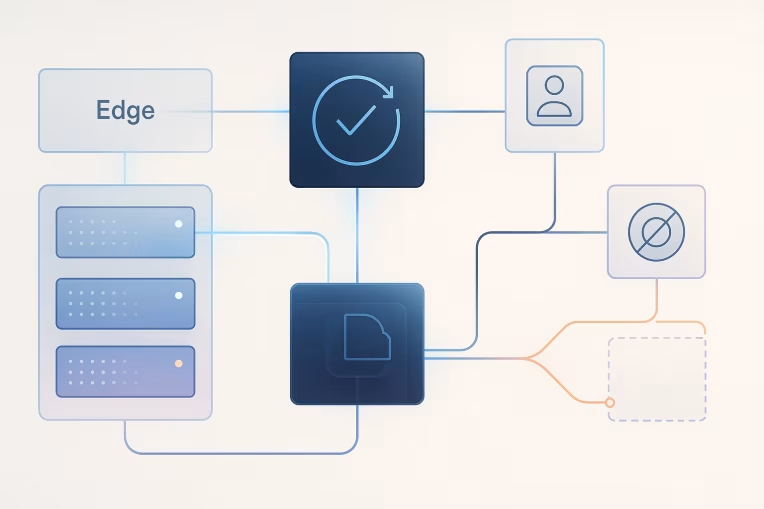

Personalization at the edge can look straightforward in architecture diagrams.

A request arrives. Identity is resolved. The CDP or decisioning layer returns the right audience or next-best experience. The edge assembles the page variant. The user gets a timely, relevant experience.

In production, the sequence is rarely that clean.

Real-time data may arrive late. Identity confidence may be partial. Consent state may be unavailable at the exact moment the page needs to render. A cache strategy designed for performance may conflict with a personalization strategy designed for precision. And when those conditions collide, the frontend still has to decide what to show.

That is where fallback architecture matters.

A mature personalization implementation is not defined only by how well it performs when every dependency responds on time. It is defined by how safely it degrades when one or more dependencies do not. For enterprise teams, that means treating fallback behavior as a first-class architectural concern, not as a temporary exception buried in frontend logic.

Why personalization architectures fail at the last mile

Many personalization programs are planned from the inside out.

Teams start with the CDP, audience design, event model, profile stitching, or decisioning logic. Those are all important. But the user does not experience the architecture from the inside out. The user experiences it at the last mile: the page request, the render path, and the visible content.

That last mile tends to fail for a few common reasons:

- The rendering layer waits too long for customer context that is not guaranteed to arrive.

- Different systems use different assumptions about identity state.

- Cache rules were designed before personalization requirements were fully defined.

- Consent enforcement is treated as a data concern instead of a rendering concern.

- Teams optimize for maximum targeting precision rather than acceptable response time.

- No one defines what should happen when a decision cannot be made in time.

This is especially common in headless and composable environments. Those architectures create flexibility, but they also expose the application to more runtime decisions. If the system depends on multiple distributed services to construct a single personalized response, then latency and resilience become part of the content strategy.

The key shift is simple: treat real-time personalization as optional at request time unless the business has explicitly decided it is worth the latency, complexity, and operational risk. In many journeys, a well-designed non-personalized or lightly personalized default is the better choice.

The latency triangle: identity, decisioning, and rendering

Most edge personalization problems sit inside a three-way constraint:

- Identity: Can the system confidently know who this request belongs to?

- Decisioning: Can the system determine the right experience from available data?

- Rendering: Can the page respond within an acceptable performance budget?

These three concerns influence each other.

If identity is uncertain, decisioning is weaker. If decisioning takes too long, rendering suffers. If rendering must remain fast, the system may need to use a simpler decision based on less data.

This is why personalization cannot be evaluated as a standalone capability. It has to be evaluated as a runtime tradeoff.

A useful way to think about it is this:

- High identity confidence + fast decisioning can support fine-grained personalization.

- Low identity confidence + fast rendering requirement usually calls for a safer, broader fallback.

- Slow upstream dependencies should not be allowed to hold the render path hostage.

Enterprise teams often benefit from separating two questions that are frequently blended together:

- What is the best possible personalized experience?

- What is the best experience we can reliably deliver within this request budget?

Those are not always the same answer.

Fallback tiers: anonymous default, segment-level, session-level, user-level

A strong fallback architecture is usually tiered rather than binary.

Too many implementations think in terms of success or failure: either the system gets a user-level decision, or it shows a generic default. That creates unnecessary volatility. A better model defines multiple tiers of decision quality and allows the system to step down gracefully.

A common tier model looks like this:

1. Anonymous default

This is the fully safe experience.

No user-specific assumptions are required. It works when identity is unavailable, consent is absent, or decisioning has timed out. The anonymous default should still be a deliberate experience, not an empty personalization placeholder.

Examples include:

- a standard homepage hero

- globally relevant navigation priorities

- region-agnostic promotional content

- editorially curated recommendations

This tier is the baseline every team should be comfortable serving at full traffic volume.

2. Segment-level fallback

This tier uses broad, low-risk signals rather than a specific user profile.

Signals can include:

- geography at an acceptable level of granularity

- device class

- referral source category

- campaign context carried in the URL or edge-readable headers

- broad audience membership already safely available at the edge

Segment-level fallback can improve relevance without requiring precise identity resolution on every request. It is often the most practical middle ground for enterprise platforms because it limits render-time dependency depth.

3. Session-level fallback

This tier relies on context established during the current visit rather than a long-lived cross-session profile.

Examples include:

- a recently viewed product category

- a known content path in the current journey

- an active locale or market selection

- an onsite preference chosen during the session

Session-level signals can be valuable because they are often available faster and with less governance risk than user-level profile activation. They also avoid overstating confidence when the system cannot firmly link the current request to a persistent identity.

4. User-level personalization

This is the most precise tier and usually the most operationally expensive.

It may depend on:

- identity resolution across devices or channels

- up-to-date profile attributes

- audience qualification

- decisioning policies or offer arbitration

- consent checks tied to a named or pseudonymous profile

User-level personalization can be appropriate in high-value journeys, but it should be treated as the top tier, not the default assumption. If it is unavailable within the allowed budget, the system should drop to the next acceptable tier instead of stalling the response.

The important design principle is that each tier should be:

- understandable to business stakeholders

- technically implementable with known dependencies

- compliant with consent and privacy rules

- measurable in production
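The step-down behavior across the four tiers can be sketched as a small resolver. This is a minimal illustration, not a reference implementation: the `Tier` enum, the `resolve_tier` function, and the variant names are assumptions introduced here, and each tier is assumed to report `None` when it timed out, lacked consent, or had no usable signal.

```python
from enum import IntEnum
from typing import Mapping, Optional, Tuple


class Tier(IntEnum):
    """Decision tiers, ordered from safest (0) to most specific (3)."""
    ANONYMOUS = 0
    SEGMENT = 1
    SESSION = 2
    USER = 3


def resolve_tier(
    decisions: Mapping[Tier, Optional[str]],
    default_variant: str,
) -> Tuple[Tier, str]:
    """Return the most specific tier that produced a variant, with that variant.

    `decisions` maps a tier to the variant it produced, or to None when the
    tier was unavailable within the request budget.
    """
    for tier in (Tier.USER, Tier.SESSION, Tier.SEGMENT):
        variant = decisions.get(tier)
        if variant is not None:
            return tier, variant
    # The anonymous default needs no runtime dependency, so it always resolves.
    return Tier.ANONYMOUS, default_variant
```

For example, `resolve_tier({Tier.USER: None, Tier.SESSION: "recently-viewed-hero"}, "standard-hero")` steps down from the unavailable user tier to the session tier instead of jumping straight to the generic default.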

Timeout budgets and when to stop waiting for real-time data

One of the most important personalization decisions is not which variant to show. It is when to stop waiting.

If a request path has no defined timeout budget, the platform will usually inherit the worst-case latency of its slowest dependency. That is rarely intentional.

Timeout design should start with the page, not the personalization system.

Ask:

- What is the maximum acceptable delay this page type can tolerate?

- Which elements are allowed to personalize synchronously?

- Which elements can render with fallback and hydrate later, if appropriate?

- Which decisions are too expensive to make during the request?

A practical approach is to set decision windows by page zone, not only by page.

For example:

- A page shell, navigation, and above-the-fold content may require an immediate deterministic fallback.

- A recommendation rail lower on the page may have a slightly larger wait budget or load after initial render.

- An account dashboard may justify a stronger dependency on user-level data than a public campaign landing page.

The exact numbers depend on the platform and business context, but the architectural pattern is consistent: define a point at which the request path stops attempting real-time enrichment and renders the best approved fallback.
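One way to express that boundary in code is a per-zone wait budget enforced around the decision call. The sketch below uses Python's `asyncio.wait_for`; the zone names, the budget values, and the `decide_with_budget` helper are hypothetical, and it handles only timeouts, not other upstream errors.

```python
import asyncio
from typing import Awaitable, Callable

# Hypothetical per-zone wait budgets in seconds; real values depend on the platform.
ZONE_BUDGETS = {"hero": 0.05, "recommendation_rail": 0.2}


async def decide_with_budget(
    zone: str,
    fetch_decision: Callable[[], Awaitable[str]],
    fallback: str,
) -> str:
    """Return the real-time decision if it arrives within the zone budget,
    otherwise the approved fallback for that zone."""
    try:
        return await asyncio.wait_for(fetch_decision(), timeout=ZONE_BUDGETS[zone])
    except asyncio.TimeoutError:
        # The pending call is cancelled; the render path does not keep waiting.
        return fallback


async def slow_decision() -> str:
    """Stand-in for a degraded decisioning service."""
    await asyncio.sleep(1.0)
    return "targeted-hero"


async def fast_decision() -> str:
    """Stand-in for a healthy decisioning service."""
    return "targeted-hero"
```

With these stand-ins, `asyncio.run(decide_with_budget("hero", slow_decision, "standard-hero"))` returns the fallback after roughly the hero budget rather than after the full second the upstream call would have taken.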

Without that boundary, teams end up with hidden failure modes such as:

- blank slots waiting for personalization data

- layout shifts when late decisions overwrite already-rendered content

- inconsistent cache behavior between slow and fast responses

- intermittent rendering bugs that only appear under dependency degradation

A timeout budget is not an admission of weakness. It is a governance mechanism that keeps upstream variability from dictating how long the rendering layer waits.

Cache strategy, edge execution, and safe degradation paths

Personalization and caching have an uneasy relationship because one aims to vary output while the other aims to reuse it.

This is where many edge rendering strategies become unstable. Teams enable personalization, then discover in production that their cache hit rates collapse, origin traffic increases, or variant logic becomes hard to reason about.

The solution is usually not to abandon caching. It is to align personalization granularity with cache strategy.

A few principles help:

Personalize only where value justifies cache variation

Not every component should be personalized at request time.

If a small improvement in relevance causes a large increase in cache fragmentation, the economics may not work. Broadly shared experiences often deliver better overall outcomes than hyper-fragmented pages that are slower and more operationally fragile.

Separate cacheable structure from variable decision zones

In headless and modern frontend architectures, it is often useful to keep the main page structure highly cacheable while restricting runtime variation to a smaller set of well-defined regions.

That can mean:

- rendering a stable shell at the edge

- limiting personalization to a few components

- using edge-readable signals for coarse variation

- deferring low-priority user-specific elements until after primary content is stable

This reduces the blast radius when real-time data is late.

Make fallback outputs cache-safe

If a decision times out, the fallback response still has to behave predictably with cache rules.

For example, avoid situations where:

- a response generated under uncertain identity is cached too broadly

- a user-level variant leaks into a segment-level cache key

- a generic fallback is stored in a way that suppresses future legitimate personalization

Safe degradation requires explicit cache-key design, variant scoping, and response handling. A fallback is not safe if it accidentally contaminates downstream caching behavior.
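A rough sketch of tier-aware cache scoping follows. The `cache_policy` function, the key format, and the `max-age` value are assumptions for illustration; `private`, `public`, `no-store`, and `max-age` are standard `Cache-Control` directives, but how a given CDN or edge platform builds cache keys will differ.

```python
from typing import Dict, Optional


def cache_policy(
    path: str,
    tier: str,
    scope_value: Optional[str],
    timed_out: bool,
) -> Dict[str, Optional[str]]:
    """Return a shared-cache key (or None) and a Cache-Control value.

    `tier` is one of "anonymous", "segment", "session", "user".
    `timed_out` marks a response that fell back because a decision was late.
    """
    if tier in ("user", "session"):
        # Visitor-specific output must never land in a shared cache.
        return {"key": None, "cache_control": "private, no-store"}
    if timed_out:
        # A timeout fallback is not stored, so it cannot suppress
        # legitimate personalization on later requests.
        return {"key": None, "cache_control": "no-store"}
    if tier == "segment":
        # The segment is part of the key, so variants cannot leak across segments.
        return {"key": f"{path}|segment={scope_value}", "cache_control": "public, max-age=300"}
    return {"key": f"{path}|anonymous", "cache_control": "public, max-age=300"}
```

The design choice here is that the cache key is derived from the tier actually served, not from the tier that was attempted, which is what keeps a response generated under uncertainty from being stored more broadly than it should be.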

Design for deterministic degradation

The frontend should know what to do when each decision source fails or exceeds budget.

Good degraded-state examples include:

- showing the standard hero instead of a profile-targeted one

- using market-level content when audience membership cannot be confirmed in time

- rendering editorial recommendations when the recommendation service does not respond quickly enough

- suppressing personalized messaging altogether when consent state is unavailable

These degraded outcomes should be documented and approved. They should not appear only because of default null handling in code.
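One way to keep degraded outcomes explicit is a lookup table that fails loudly when a decision source has no approved outcome. The source names and outcome identifiers below are hypothetical labels for the four examples above.

```python
# Approved degraded outcome per decision source (hypothetical identifiers).
DEGRADED_OUTCOMES = {
    "profile_decision": "standard_hero",
    "audience_membership": "market_level_content",
    "recommendation_service": "editorial_recommendations",
    "consent_state": "suppress_personalized_messaging",
}


def degraded_outcome(failed_source: str) -> str:
    """Return the approved outcome for a failed or over-budget decision source.

    Raises ValueError for an unmapped source, so a missing approval surfaces
    in testing instead of silently becoming default null handling.
    """
    if failed_source not in DEGRADED_OUTCOMES:
        raise ValueError(f"no approved degraded outcome for {failed_source!r}")
    return DEGRADED_OUTCOMES[failed_source]
```

Because the table is plain data, it can be reviewed and signed off by the same stakeholders who approve the fallback content itself.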

Consent and identity uncertainty in fallback decisions

A fallback architecture is not only a performance tool. It is also a governance tool.

Two conditions frequently get underestimated in personalization design: uncertain identity and uncertain consent.

They are related, but not identical.

A system may have some evidence about a visitor without having enough confidence to treat that person as a known user for rendering purposes. Similarly, a system may know who the visitor likely is but still lack the confirmed consent state required for certain types of activation.

That means fallback logic should consider at least three dimensions:

- identity confidence: how sure are we that this request maps to the intended profile?

- consent status: what uses of data are permitted right now?

- decision criticality: how important is this personalization to the page outcome?

When these dimensions are modeled explicitly, better decisions follow.

Examples:

- If identity confidence is low, prefer session-level or anonymous fallback rather than forcing user-level targeting.

- If consent cannot be validated in time, default to a non-personalized experience rather than assuming permission.

- If a decision uses sensitive profile logic, require stronger identity and policy validation than for broad content ordering.

This is especially important in enterprise environments where multiple systems may produce slightly different views of identity state. The rendering layer should not invent certainty that the data layer cannot actually guarantee.

A useful rule is conservative escalation: the system can move upward into more specific personalization only when the required identity and consent conditions are positively satisfied. It should not move upward by assumption.
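Conservative escalation can be sketched as a function that starts from the safest tier and moves up only on positive evidence. The function name, the numeric confidence scale, and both thresholds are assumptions chosen for illustration, not recommended values.

```python
from typing import Optional

# Hypothetical confidence thresholds on a 0.0 to 1.0 scale.
STANDARD_THRESHOLD = 0.8
SENSITIVE_THRESHOLD = 0.95


def max_allowed_tier(
    identity_confidence: float,
    consent_granted: Optional[bool],
    sensitive: bool = False,
) -> str:
    """Return the most specific tier this request may use.

    `consent_granted` is None when consent could not be validated in time.
    Anything other than an explicit True is treated as no permission.
    """
    if consent_granted is not True:
        return "anonymous"
    required = SENSITIVE_THRESHOLD if sensitive else STANDARD_THRESHOLD
    if identity_confidence >= required:
        return "user"
    # Consent is confirmed, but identity is not strong enough for profile use.
    return "session"
```

Note that unavailable consent (`None`) and denied consent (`False`) produce the same result: the function never escalates by assumption.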

Operational ownership: who defines acceptable degradation

Fallback design often fails because it has no clear owner.

Engineering may implement timeout behavior. Martech may define audiences. Product may care about conversion. Legal or privacy teams may define consent requirements. Operations may monitor incidents. But unless someone defines acceptable degradation across those groups, fallback behavior remains inconsistent.

Enterprise teams usually need shared ownership across four decisions:

1. What can degrade?

Not every personalized element deserves the same treatment.

Teams should classify components by business criticality and determine whether they can:

- fall back immediately

- wait briefly for a decision

- render later after the initial response

- be suppressed entirely if conditions are not met

2. What is the approved fallback for each tier?

This should not be left to ad hoc implementation.

For every important personalized zone, define the ordered fallback path. For example:

- user-level offer

- segment-level message

- market default message

- no message

That sequence should be explicit in design and requirements, not only in code.
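If the ordered paths are captured as data, a simple check can confirm that every zone ends in a step that needs no runtime dependency. This is a sketch under assumptions: the zone names, step identifiers, and the `ALWAYS_SERVABLE` set are invented for the example, and the second zone is deliberately incomplete to show what the check catches.

```python
from typing import Dict, List

# Hypothetical zones and ordered fallback paths, most specific step first.
FALLBACK_PATHS: Dict[str, List[str]] = {
    "offer_banner": [
        "user_level_offer",
        "segment_level_message",
        "market_default_message",
        "no_message",
    ],
    # Deliberately incomplete: this path has no safe terminal step.
    "hero": ["user_level_hero", "segment_level_hero"],
}

# Steps that need no runtime dependency and can always be served.
ALWAYS_SERVABLE = {"market_default_message", "no_message", "standard_hero"}


def zones_without_safe_terminal(paths: Dict[str, List[str]]) -> List[str]:
    """Return zones whose ordered path does not end in an always-servable step."""
    return sorted(
        zone
        for zone, steps in paths.items()
        if not steps or steps[-1] not in ALWAYS_SERVABLE
    )
```

Run against the sample data, the check flags only `hero`, which would need a step such as `standard_hero` appended before it could degrade safely.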

3. Who owns runtime thresholds?

Timeouts, retry rules, and degradation thresholds are operational policy decisions as much as technical ones.

If the business insists on user-level precision, it must also accept the performance and resilience implications. If the page requires strict performance targets, that may place hard limits on how much real-time dependency the render path can tolerate.

4. How is degraded behavior observed?

Teams should be able to answer questions such as:

- How often did the experience fall back to a lower tier?

- Which dependency caused the downgrade?

- Did fallback frequency increase after a release or infrastructure change?

- Are some page types consistently over budget for real-time decisioning?

Observability is what turns fallback from a hidden exception into an operating model. Without it, the organization cannot tell whether personalization is functioning as designed or quietly failing at scale.
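A minimal sketch of the counters behind those questions is shown below. The `TierMetrics` class and the cause labels are assumptions; a production system would emit the same events to whatever metrics backend it already uses rather than hold them in memory.

```python
from collections import Counter
from typing import Optional


class TierMetrics:
    """In-memory counters for intended versus served decision tiers."""

    def __init__(self) -> None:
        self.requests: Counter = Counter()    # keyed by page type
        self.downgrades: Counter = Counter()  # keyed by (page type, cause)

    def record(
        self,
        page_type: str,
        intended: str,
        served: str,
        cause: Optional[str] = None,
    ) -> None:
        """Record one rendered response and, if it was downgraded, why."""
        self.requests[page_type] += 1
        if served != intended:
            self.downgrades[(page_type, cause or "unknown")] += 1

    def fallback_rate(self, page_type: str) -> float:
        """Share of responses for this page type served below the intended tier."""
        total = self.requests[page_type]
        if total == 0:
            return 0.0
        downgraded = sum(
            count for (page, _cause), count in self.downgrades.items() if page == page_type
        )
        return downgraded / total
```

Keying downgrades by cause is what makes it possible to tell a consent problem from an identity-latency problem when the fallback rate moves after a release.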

Implementation checklist for enterprise teams

For teams building or refining edge personalization fallback architecture, the following checklist can help turn the concept into delivery discipline.

Define the personalization hierarchy

Document the allowed decision tiers for each important page or component:

- anonymous default

- segment-level

- session-level

- user-level

Do not assume every page needs all four.

Assign render-time budgets

Set clear wait limits for:

- identity lookup

- decisioning requests

- edge assembly

- optional downstream components

Budget from the user experience backward, not from the dependency outward.

Map dependency failure modes

For each dependency in the render path, define:

- timeout behavior

- fallback target tier

- whether retries are allowed

- whether the page renders without that dependency
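The four attributes above map naturally onto a small policy record per dependency. The sketch below is illustrative only: the dependency names and numbers are hypothetical, and `worst_case_wait_ms` assumes retries run sequentially and each attempt can use its full timeout.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class FailurePolicy:
    timeout_ms: int             # how long the render path waits per attempt
    fallback_tier: str          # tier served when the dependency fails
    max_retries: int            # 0 means no retries in the render path
    page_renders_without: bool  # False means the page cannot render at all

    def worst_case_wait_ms(self) -> int:
        """Upper bound on waiting if every attempt runs to its timeout."""
        return self.timeout_ms * (1 + self.max_retries)


# Hypothetical policies for two render-path dependencies.
POLICIES: Dict[str, FailurePolicy] = {
    "identity_lookup": FailurePolicy(40, "session", 0, True),
    "decisioning": FailurePolicy(60, "segment", 1, True),
}


def unmapped_dependencies(
    render_path: Iterable[str],
    policies: Dict[str, FailurePolicy],
) -> List[str]:
    """Return render-path dependencies that have no failure policy defined."""
    return [dep for dep in render_path if dep not in policies]
```

In this sample, a single retry doubles the decisioning worst case to 120 ms, which is the kind of cost that is easy to miss when retries are configured separately from timeouts.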

Align cache design to personalization scope

Review:

- cache keys

- variation rules

- shared versus user-specific outputs

- invalidation implications

- safe handling of fallback responses

If the team cannot explain how each personalized variant maps to the cache strategy, the implementation is probably too opaque.

Model consent and identity explicitly

Avoid binary assumptions such as known/unknown.

Capture and use states such as:

- anonymous

- session-known

- partially resolved identity

- high-confidence user match

- consent unavailable

- consent denied

- consent granted for specific activation use cases

These states make fallback decisions more reliable and more governable.

Pre-approve degraded experiences

Create business-approved fallback content and rules before launch.

That includes practical examples such as:

- standard content blocks to replace targeted modules

- segment-safe messaging that can be served broadly

- rules for suppressing content when policy checks fail

- UX behavior for empty or delayed recommendation zones

Instrument the tiers

Track which decision tier was actually used at runtime.

This is critical. Many teams measure only whether personalization code executed, not whether the highest intended tier was achieved. If the platform falls back to anonymous or segment-level decisions most of the time, that should be visible in reporting and operations.

Test degraded paths deliberately

Do not test only the ideal flow.

Simulate:

- slow identity services

- absent audience responses

- missing consent state

- conflicting cache conditions

- partial session context

If the degraded path has not been tested, it is not really designed.
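Degraded paths are easier to test when dependencies are injected, so a test can substitute a missing or empty response. The sketch below assumes a hypothetical `render_message` step with two injectable lookups; the names and return values are invented for the example.

```python
from typing import Callable, Optional


def render_message(
    get_consent: Callable[[], Optional[bool]],
    get_audience: Callable[[], Optional[str]],
) -> str:
    """Hypothetical render step for one personalized message zone.

    `get_consent` returns True, False, or None when consent state is missing.
    `get_audience` returns an audience name, or None when no response arrived.
    """
    if get_consent() is not True:
        return "no-message"
    audience = get_audience()
    if audience is None:
        return "market-default-message"
    return f"segment-message:{audience}"


def test_missing_consent_suppresses_message() -> None:
    assert render_message(lambda: None, lambda: "loyalty") == "no-message"


def test_absent_audience_uses_market_default() -> None:
    assert render_message(lambda: True, lambda: None) == "market-default-message"


def test_ideal_flow_uses_segment_message() -> None:
    assert render_message(lambda: True, lambda: "loyalty") == "segment-message:loyalty"
```

The first two tests exercise degraded paths and only the third covers the ideal flow, which reflects the weighting this section argues for.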

Final perspective

The best personalization architectures are not the ones that chase maximum specificity on every request. They are the ones that make disciplined choices about when personalization is worth the runtime cost and when a simpler experience is the better operational decision.

For enterprise digital platforms, that usually means designing fallback tiers, timeout budgets, and cache-aware degradation paths before expanding personalization depth. It also means treating identity confidence and consent enforcement as part of the rendering contract, not as assumptions borrowed from upstream systems.

When real-time data arrives late, the question should not be whether the page can somehow continue waiting. The question should be whether the platform already knows the next safe, fast, and approved experience to serve.

If that answer is yes, the personalization system is becoming mature. If the answer is no, the architecture is still optimized for diagrams rather than production reality.

Tags: CDP, edge personalization fallback architecture, personalization architecture, edge rendering, customer data activation, enterprise web platforms