[01]

[01]Discovery

We review source platforms, content volumes, schema patterns, and migration objectives. This establishes scope, identifies structural risks, and clarifies where AI-assisted processing can be applied safely.

AI-assisted content migration combines structured migration engineering with language-model support for classification, transformation, normalization, and editorial acceleration. It is most useful in large CMS and DXP programs where content volumes, inconsistent legacy structures, and fragmented governance make manual migration slow, expensive, and difficult to validate.

Organizations typically need this capability when moving between platforms, consolidating multiple sites, introducing structured content models, or preparing content for headless delivery. In these environments, migration is not only a data transfer exercise. It requires decisions about schema alignment, field mapping, taxonomy normalization, component compatibility, metadata quality, and workflow controls.

A well-engineered approach uses AI selectively inside a governed migration pipeline. Language models can assist with content interpretation and transformation, but they must operate within defined rules, validation checkpoints, and platform constraints. This supports scalable migration architecture by reducing repetitive manual effort while preserving traceability, content integrity, and operational confidence across enterprise delivery programs.

As content platforms grow over time, organizations accumulate large volumes of pages, documents, media references, taxonomies, and metadata shaped by different teams, workflows, and publishing standards. Legacy CMS estates often contain duplicated structures, inconsistent field usage, weak taxonomy discipline, and editorial patterns that were never designed for reuse across channels or modern delivery architectures.

When migration begins, these inconsistencies become architectural problems rather than simple content issues. Teams must interpret ambiguous source material, reconcile mismatched schemas, and decide how unstructured or semi-structured content should fit into a target model. Manual review does not scale well, while fully automated migration without contextual interpretation introduces errors, broken relationships, and low confidence in the output. Engineering teams then spend significant time building exception handling, validating transformed records, and resolving edge cases that were not visible during early planning.

The operational impact is substantial. Delivery timelines extend because migration logic becomes more complex than expected. Content operations teams are pulled into repetitive cleanup work. Platform launches are delayed by unresolved quality issues, and target architectures inherit avoidable inconsistency. Without a governed migration model, organizations risk moving legacy disorder into a new platform rather than improving the structure, maintainability, and long-term usability of their content estate.

We assess source systems, content types, field patterns, taxonomies, and editorial workflows to understand migration complexity. This stage identifies structural inconsistencies, content quality issues, and the boundaries where AI-assisted transformation is appropriate.

Source structures are mapped to the target content model, including fields, relationships, metadata, and component patterns. We define where deterministic rules are sufficient and where language-model interpretation is needed to support restructuring.

We design migration pipelines that combine ETL processes, prompt logic, transformation rules, and validation checkpoints. The workflow is structured to preserve traceability, support retries, and isolate exceptions for review.

Content normalization, enrichment, classification, and rewriting logic are implemented against defined schemas and migration objectives. AI-assisted steps are constrained by templates, rules, and output formats suitable for downstream ingestion.

Automated checks are introduced for schema compliance, field completeness, taxonomy alignment, link integrity, and transformation confidence. This creates measurable controls around migration quality rather than relying on spot checks alone.

A representative subset of content is migrated first to test mappings, prompts, exception handling, and target platform behavior. Findings from the pilot are used to refine rules before broader execution begins.

Migration runs are executed in controlled batches with logging, monitoring, and rollback planning. Teams can track throughput, error classes, and review queues while maintaining operational oversight across the program.

After initial migration waves, we refine prompts, rules, and validation thresholds based on observed outcomes. This supports repeatable execution across remaining content sets and improves maintainability for future migration activity.

This service combines structured migration architecture with selective AI automation inside governed enterprise workflows. The emphasis is on content model alignment, transformation control, validation coverage, and operational traceability. Rather than treating AI as a replacement for migration engineering, it is used as a bounded capability within pipelines that support scale, repeatability, and platform integrity.

Delivery is organized as a controlled engineering program that combines content analysis, migration architecture, AI-assisted transformation, and validation operations. The model is designed for enterprise teams that need measurable progress, clear governance, and repeatable execution across large content estates.

[01]

[01]We review source platforms, content volumes, schema patterns, and migration objectives. This establishes scope, identifies structural risks, and clarifies where AI-assisted processing can be applied safely.

[02]

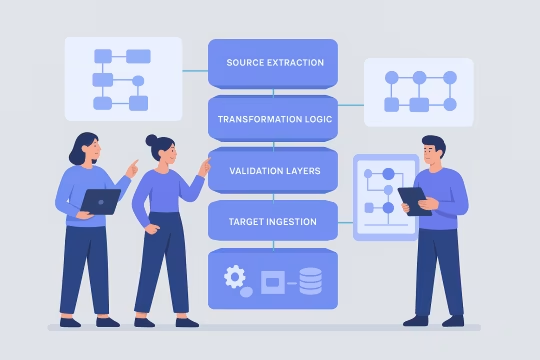

[02]Migration architecture is defined across source extraction, transformation logic, validation layers, and target ingestion. We document data flows, control points, exception paths, and operational dependencies before implementation begins.

[03]

[03]We build mapping logic, ETL workflows, prompt templates, transformation services, and ingestion connectors. Engineering focuses on repeatability, observability, and compatibility with the target platform model.

[04]

[04]Migration outputs are tested for schema compliance, field accuracy, taxonomy alignment, and content integrity. Pilot runs and sampled reviews are used to tune prompts, rules, and exception thresholds.

[05]

[05]Migration is executed in planned waves with monitoring, rollback considerations, and operational checkpoints. Teams can track throughput, error classes, and review queues during each release stage.

[06]

[06]Controls are introduced for prompt versioning, validation criteria, audit logging, and approval workflows. This supports accountability across engineering, content operations, and platform leadership.

[07]

[07]As migration progresses, rules and prompts are refined based on observed edge cases and validation outcomes. This improves quality over time and supports future migration or restructuring initiatives.

The primary value of this service is operational and architectural: it reduces migration friction while improving the quality and maintainability of content entering the target platform. Enterprise teams gain a more controlled path to modernization without relying entirely on manual remediation.

AI-assisted transformation reduces the amount of repetitive manual interpretation required during migration. Teams can process larger content volumes in structured batches while keeping review effort focused on exceptions and high-risk cases.

Content operations and engineering teams spend less time on repetitive cleanup, field rewriting, and metadata normalization. Effort shifts toward rule definition, validation, and quality control rather than record-by-record intervention.

Structured mapping and controlled transformation help standardize fields, taxonomies, and metadata across migrated content. This produces a more coherent target estate and reduces inherited inconsistency from legacy platforms.

Validation frameworks, pilot migrations, and exception handling provide earlier visibility into content quality issues. This lowers the likelihood of late-stage surprises that delay launches or compromise target platform stability.

Migration outputs are aligned to the target architecture rather than copied directly from legacy structures. This improves reuse, supports modern delivery models, and reduces the need for post-launch content restructuring.

Traceable workflows, logged transformations, and review controls make migration decisions easier to audit and explain. This is particularly important in large organizations where multiple teams share responsibility for content quality.

Once migration logic, prompts, and validation controls are established, the model can be reused across sites, brands, or business units. This supports broader platform modernization programs without restarting the migration approach each time.

This service often sits alongside migration, content architecture, and platform modernization work across CMS and DXP programs.

Structured content transformation for enterprise platforms

Structured remediation for large content estates

Governed AI workflows for metadata quality

Structured metadata and classification engineering

Governed automation across content and operations

Enterprise content migration with API-first content delivery

Structured schemas for an API-first content strategy

Composable DXP content architecture and API-first platform design

Common questions about architecture, operations, integration, governance, risk, and delivery for AI-assisted content migration programs.

AI content migration should be treated as a bounded capability within a broader migration architecture, not as an isolated automation layer. In enterprise environments, migration usually spans source extraction, content analysis, schema mapping, transformation logic, validation, target ingestion, and operational review. AI is most useful in the transformation and interpretation stages, especially where legacy content is inconsistent, weakly structured, or difficult to map through deterministic rules alone. From an architectural perspective, the important decision is where AI is allowed to operate and what constraints govern it. Outputs should be shaped by target content models, field definitions, taxonomy rules, and validation requirements. Prompt logic, transformation templates, and confidence thresholds need to be part of the migration design rather than added informally during execution. This approach keeps the platform architecture stable. The target CMS or DXP remains the source of structural truth, while AI acts as a controlled processing layer that helps interpret and normalize content before ingestion. That separation is important for maintainability, auditability, and long-term platform operations.

Deterministic rules should be the default whenever source content is predictable, consistently structured, and clearly mapped to the target model. Field transfers, known transformations, taxonomy remaps, and standard data normalization are usually better handled through explicit logic because the behavior is transparent and repeatable. Language models become useful when the migration problem requires interpretation rather than simple conversion. Typical examples include extracting meaning from long-form body content, classifying loosely governed legacy pages, generating structured summaries from unstructured text, normalizing inconsistent metadata, or identifying likely target components from mixed editorial patterns. In these cases, deterministic rules alone may be too brittle or too expensive to maintain. The practical model is hybrid. Use deterministic processing for stable, high-confidence transformations and reserve AI for ambiguous or labor-intensive tasks where contextual interpretation adds value. Even then, AI outputs should be constrained by schemas, validation checks, and review thresholds. This prevents the migration pipeline from becoming unpredictable and ensures that language-model usage remains aligned with the target architecture.

Scaled operation depends on treating migration as a managed pipeline rather than a one-time script. Teams typically organize work into batches, with each batch moving through extraction, transformation, validation, exception review, and ingestion. Operational visibility is important, so logs, throughput metrics, error categories, and validation results should be available throughout the run. AI-assisted steps need additional operational controls. Prompt versions, model settings, confidence thresholds, and fallback paths should be documented and stable for each migration wave. If these variables change without governance, output consistency becomes difficult to manage across large content sets. Review queues are also necessary for low-confidence transformations, unsupported source patterns, or records that fail validation. In practice, content operations, engineering, and platform teams work together. Engineering owns the pipeline and validation framework, while content specialists help assess transformation quality and edge cases. This shared operating model allows organizations to scale migration volume without losing oversight of quality, traceability, or platform compatibility.

The most useful metrics combine throughput, quality, and exception visibility. Throughput metrics include records processed, batch completion rates, ingestion success rates, and average handling time per content type. These show whether the migration is progressing at the pace required by the delivery plan. Quality metrics are equally important. Teams usually track schema validation pass rates, required field completion, taxonomy alignment, broken references, confidence scores for AI-assisted transformations, and the percentage of records requiring manual review. These indicators help determine whether the pipeline is producing target-ready content or simply moving problems downstream. Exception metrics often provide the clearest operational insight. Repeated failure patterns, prompt-related errors, unsupported source structures, and content types with high review volumes reveal where the migration design needs refinement. Over time, these metrics support tuning decisions and help teams improve both automation coverage and validation accuracy. A migration program is easier to govern when quality and exception data are visible alongside delivery progress.

Yes, but the integration model depends on the maturity of both the source and target platforms. In most cases, AI-assisted migration sits between extraction and ingestion. Source content is pulled from the existing CMS, repository, or database, transformed through a governed pipeline, validated against the target schema, and then pushed into the destination platform through APIs, import services, or staged data loaders. The key integration requirement is structural clarity. The target platform needs a defined content model, field constraints, taxonomy rules, and ingestion contract. Without that, AI-generated or AI-transformed outputs have no reliable destination shape. On the source side, teams need enough access to content, metadata, relationships, and media references to preserve meaning during migration. Integration also extends to operational systems. Review workflows, logging, validation reports, and exception queues may need to connect with existing delivery tooling or content operations processes. The goal is not only to move content between platforms, but to make the migration process observable and manageable within the organization’s current engineering and publishing environment.

Headless and structured content platforms increase the importance of content modeling during migration. Legacy systems often store meaning inside page layouts, rich text blocks, or inconsistent editorial conventions, while headless platforms require content to be decomposed into reusable fields, components, relationships, and metadata. That gap is where AI-assisted transformation can be useful. Language models can help interpret unstructured source material and reorganize it into target-ready structures, but only when the target schema is explicit. For example, body content may need to be split into summaries, sections, callouts, references, or component-compatible fragments. Taxonomies may also need normalization so that content can be reused consistently across channels. The migration still depends on engineering discipline. APIs, content models, validation rules, and exception handling must be in place before AI is introduced. When implemented correctly, this approach helps organizations move from page-centric legacy publishing toward structured, reusable content operations without relying entirely on manual decomposition of every record.

Governance should cover both migration engineering and AI-specific behavior. At the engineering level, teams need approved source-to-target mappings, documented transformation rules, validation criteria, exception workflows, and clear ownership across engineering, content, and platform stakeholders. These controls ensure that migration decisions are consistent and reviewable. For AI-assisted steps, governance should include prompt versioning, model selection, output constraints, confidence thresholds, and rules for when human review is required. It is important to know which content types can be transformed automatically, which require sampling, and which should always be reviewed manually. Without these boundaries, organizations may struggle to explain how content was changed or why certain outputs were accepted. Auditability is also essential. Teams should be able to trace migrated records back to source content, transformation logic, validation outcomes, and ingestion status. This is particularly important in regulated environments or large organizations where migration decisions may be reviewed after launch. Good governance does not slow migration down; it makes scaled execution more reliable and easier to manage.

Consistency across waves depends on standardizing the migration framework before large-scale execution begins. That usually means establishing a shared content model strategy, reusable mapping patterns, common prompt templates, validation rules, and a documented exception taxonomy. Once these foundations are in place, teams can apply the same operating model across sites, brands, or business units with controlled local variation. Prompt and rule management are especially important. If each wave uses different transformation logic without coordination, output quality and structural consistency will drift. Versioning, change approval, and regression testing help prevent that. Teams should also compare validation metrics across waves so that quality thresholds remain stable rather than being interpreted differently by each delivery group. A federated governance model often works well in enterprise settings. Central teams define standards, controls, and reusable assets, while local teams handle content-specific review and edge cases. This balances consistency with practical delivery needs and supports long-term maintainability beyond the initial migration program.

The main risks are structural misalignment, low-quality transformations, insufficient validation, and overconfidence in automation. If the target content model is not clearly defined, AI may produce outputs that appear useful but do not fit the platform architecture. This creates rework later and can undermine the maintainability of the new platform. Another major risk is inconsistent behavior across content types or migration waves. Language models can handle ambiguity well, but they can also produce variable results if prompts, source patterns, or constraints are not stable. Without strong validation and exception handling, these issues may only become visible after ingestion or editorial review. There are also operational risks. Teams may underestimate the amount of governance, review, and tuning required to run AI-assisted migration safely at scale. The mitigation strategy is not to avoid AI entirely, but to use it selectively inside a controlled pipeline. Clear schemas, bounded prompts, measurable validation, and pilot migrations reduce risk significantly and make the migration process more predictable.

Validation should happen at multiple levels. First, structural validation checks whether the migrated record conforms to the target schema, including field types, required values, relationships, and taxonomy constraints. This ensures the content is technically ingestible and compatible with the destination platform. Second, content-level validation assesses whether the transformed output preserves meaning and supports the intended editorial or delivery use case. This may include sampling, rule-based checks, metadata verification, link integrity testing, and comparison against source records. For AI-assisted transformations, confidence thresholds and exception routing are useful for identifying records that need review. Third, operational validation confirms that migrated content behaves correctly in the target environment. That includes rendering, API delivery, search indexing, component compatibility, and workflow readiness. Accuracy is not only about textual fidelity; it is also about whether the content functions properly within the new platform model. A strong validation framework combines automated checks with targeted human review so quality can be measured at scale without relying entirely on manual inspection.

A typical engagement includes discovery, source analysis, target model review, migration architecture design, transformation workflow implementation, validation setup, pilot migration, and scaled execution support. The exact scope depends on whether the organization already has a defined target schema and whether the migration involves one platform or a broader modernization program. In early stages, the focus is usually on understanding source complexity and identifying where deterministic mapping is sufficient versus where AI-assisted interpretation is justified. From there, teams define transformation rules, prompt patterns, validation criteria, and exception handling processes. Pilot migrations are then used to test assumptions before larger content volumes are processed. Some engagements are limited to migration architecture and workflow design, while others include hands-on engineering through execution and tuning. In enterprise settings, collaboration often extends to governance, reporting, and coordination with content operations teams. The engagement model is flexible, but the work is generally structured around measurable migration quality and operational readiness rather than ad hoc automation experiments.

Collaboration usually begins with a focused assessment of the current content estate, migration goals, and target platform constraints. This is not a generic discovery workshop. It is a working review of source systems, content types, schema quality, editorial patterns, migration timelines, and the operational risks that could affect delivery. The aim is to determine whether AI-assisted migration is appropriate, where it adds value, and what controls are required. From that assessment, teams typically define an initial migration slice or pilot scope. This may involve a limited set of content types, a representative source repository, or a specific business unit. The pilot is used to validate mapping assumptions, test transformation logic, measure exception rates, and establish the governance model before broader rollout. This starting phase also clarifies roles. Platform teams, content operations, and engineering stakeholders align on ownership for validation, review, and decision-making. Beginning this way creates a practical foundation for the engagement and avoids introducing AI into migration work before the architectural and operational conditions are properly understood.

These case studies show how complex CMS and DXP migrations were executed with structured mapping, consolidation planning, content cleanup, and governance controls. They are especially relevant to AI Content Migration because they demonstrate the real delivery conditions where migration pipelines, validation logic, and target-platform readiness matter most. Together, they provide concrete proof across multi-site consolidation, legacy cleanup, and enterprise-scale modernization.

These articles cover the planning, modeling, and governance decisions that make AI-assisted content migration reliable in enterprise CMS and DXP programs. They add practical context on auditing content structures, choosing migration approaches, and controlling schema change so transformation pipelines stay traceable and safe.

Let’s review your content estate, target platform model, and migration constraints to define a governed AI-assisted delivery approach.