[01]

[01]Discovery

We review platform context, content volumes, governance constraints, and operational goals. This phase clarifies where cleanup will create the most value and what risks need to be managed from the start.

AI-assisted content cleanup is a structured engineering capability used to assess, normalize, and remediate large volumes of content across CMS and DXP environments. It combines automated analysis with governance rules, taxonomy logic, metadata workflows, and quality controls to reduce inconsistency across pages, documents, components, and structured content models.

Organizations typically need this capability when content estates have grown through multiple teams, migrations, campaigns, or platform changes. Over time, naming conventions drift, metadata becomes incomplete, duplicate content accumulates, and taxonomy usage becomes inconsistent. These issues affect search, personalization, reporting, accessibility, migration planning, and day-to-day editorial operations.

A disciplined cleanup process helps restore structural integrity to the content layer. It creates a more reliable foundation for platform evolution, search optimization, analytics, workflow automation, and future AI use cases. Rather than treating cleanup as a one-off editorial exercise, the work is approached as a governed platform activity with clear rules, review processes, and measurable quality improvements.

As enterprise content estates grow, they often accumulate inconsistent metadata, duplicated entries, outdated pages, fragmented taxonomy usage, and uneven content structure. Different teams publish through different workflows, legacy migrations introduce formatting and field-level anomalies, and governance rules are applied inconsistently over time. The result is a content layer that becomes harder to search, classify, reuse, and trust.

These issues create architectural friction across the platform. Search relevance declines because metadata is incomplete or misapplied. Personalization and analytics become less reliable because content types, tags, and attributes are not normalized. Editorial teams spend time correcting avoidable issues manually, while platform teams struggle to define dependable rules for migration, automation, and downstream integrations. Even when the CMS itself is stable, the content model in practice becomes operationally fragmented.

The consequences extend beyond content quality. Delivery slows because teams cannot confidently identify what should be retained, merged, archived, or restructured. Governance becomes reactive rather than systematic. Migration programs inherit avoidable complexity, and AI initiatives are weakened by poor source material. Without a structured remediation approach, content debt compounds over time and limits the effectiveness of the broader digital platform.

We assess the content estate, publishing workflows, source systems, and governance constraints. This establishes the scale of inconsistency, identifies high-risk content domains, and defines the remediation scope.

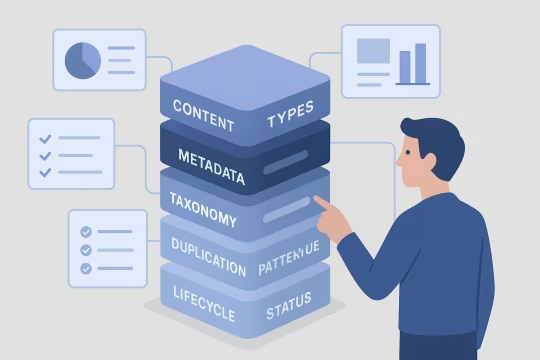

We define audit criteria for structure, metadata, taxonomy, duplication, quality, and lifecycle status. Rules are aligned to platform objectives such as migration readiness, search quality, or governance improvement.

Automated and AI-assisted analysis is used to classify patterns across large content sets. This includes field completeness, taxonomy drift, duplication indicators, formatting anomalies, and content quality signals.

We translate findings into explicit cleanup logic, including normalization rules, metadata mappings, taxonomy decisions, and exception handling. The goal is to make remediation repeatable and reviewable.

Cleanup activities are integrated into CMS, DXP, or operational workflows where possible. This may include batch processing, editorial review queues, metadata enrichment steps, and QA checkpoints.

Outputs are validated through sampling, rule verification, and stakeholder review. This ensures automated changes remain aligned with governance requirements and do not introduce structural regressions.

We sequence remediation across environments, teams, and content domains to reduce operational disruption. Rollout planning includes rollback considerations, ownership boundaries, and reporting expectations.

After remediation, we define controls that help prevent content debt from returning. This includes standards, review mechanisms, taxonomy stewardship, and quality monitoring processes.

This service focuses on the technical mechanisms required to restore consistency across enterprise content estates. It combines analysis, rule definition, workflow integration, and quality controls so remediation can be executed at scale without losing governance oversight. The emphasis is on structured cleanup that improves maintainability, supports platform evolution, and creates more dependable content operations.

Delivery is structured as a governed engineering engagement that combines analysis, remediation design, controlled execution, and operational enablement. The model is designed for large content estates where quality, traceability, and cross-team coordination matter as much as automation speed.

[01]

[01]We review platform context, content volumes, governance constraints, and operational goals. This phase clarifies where cleanup will create the most value and what risks need to be managed from the start.

[02]

[02]We perform structured analysis across content types, metadata, taxonomy usage, duplication patterns, and lifecycle status. Findings are organized into actionable categories that support prioritization and remediation planning.

[03]

[03]We define the remediation model, including rules, mappings, workflow touchpoints, review controls, and reporting logic. This creates a repeatable framework for cleanup rather than a one-time manual exercise.

[04]

[04]We configure and execute AI-assisted and rule-based cleanup activities across the agreed scope. Work may include metadata correction, taxonomy normalization, duplicate identification, and structured content adjustments.

[05]

[05]We verify outputs through QA sampling, stakeholder review, and rule testing. Validation ensures that cleanup actions improve consistency without weakening governance or introducing unintended structural changes.

[06]

[06]We sequence rollout across environments, teams, or content domains based on operational risk and dependency constraints. Deployment planning includes ownership, rollback considerations, and progress reporting.

[07]

[07]We document standards, review processes, and stewardship responsibilities needed to maintain content quality after remediation. Teams receive a clearer operating model for ongoing governance and quality control.

[08]

[08]Where needed, we establish monitoring and recurring review mechanisms to detect new content debt early. This supports long-term maintainability as the platform and publishing model continue to evolve.

Well-structured cleanup work improves the reliability of the content layer that supports search, publishing, analytics, migration, and governance. The impact is operational and architectural: teams work with cleaner inputs, platform decisions become easier to execute, and long-term content debt is reduced.

Normalized metadata, taxonomy usage, and structure reduce variation across teams and channels. This makes content easier to manage, review, and reuse in enterprise publishing environments.

Cleaner metadata and more consistent classification improve the quality of search indexing and retrieval. Users and internal teams can find relevant content with less noise and fewer false matches.

Editorial and platform teams spend less time correcting repetitive content issues manually. Standardized remediation workflows reduce rework and make quality management more predictable.

Cleanup work converts governance intent into enforceable operational rules. This helps teams apply standards more consistently across distributed publishing models and reduces policy drift over time.

Content estates that have been assessed and normalized are easier to map, transform, and migrate. This lowers uncertainty before replatforming and reduces the volume of avoidable exceptions during delivery.

Structured and consistent content attributes improve the quality of reporting, segmentation, and downstream analysis. Data teams can work with more dependable signals from the content layer.

A cleaner content estate is easier to evolve because structural issues are identified and addressed systematically. This supports future changes in architecture, workflows, and channel strategy with less friction.

AI systems depend on content that is structured, classified, and governed with reasonable consistency. Cleanup improves the quality of source material for summarization, classification, recommendation, and automation use cases.

This service often connects with platform audit, content architecture, governance, and data quality work across enterprise digital ecosystems.

Structured content transformation for enterprise platforms

Governed AI workflows for metadata quality

Structured metadata and classification engineering

Structured migration workflows with LLM-assisted transformation

Governed automation across content and operations

Composable DXP content architecture and API-first platform design

Structured schemas for an API-first content strategy

Enterprise content migration with API-first content delivery

Common questions about architecture, operations, integration, governance, risk, and engagement for AI-assisted enterprise content cleanup.

AI content cleanup should be treated as a platform capability, not just an editorial task. In enterprise environments, content quality affects search, personalization, analytics, migration, accessibility, and governance. When metadata, taxonomy, and structure are inconsistent, downstream systems inherit those inconsistencies. That means the cleanup process needs to align with the architecture of the CMS or DXP, the content model, workflow design, and any connected systems that consume content. Architecturally, the work usually sits between content storage and operational use. It relies on audit inputs, classification logic, remediation rules, and validation controls. In some cases, cleanup is executed through APIs, batch jobs, or workflow automation. In others, it is introduced as a governed review layer inside editorial operations. The right model depends on content volume, system maturity, and risk tolerance. The important point is that cleanup should reinforce platform structure rather than bypass it. If AI is used without clear rules, review checkpoints, and ownership boundaries, the result can be more inconsistency rather than less. A sound architecture makes remediation traceable, repeatable, and compatible with long-term governance.

The approach is well suited to structural and repeatable issues that appear across large content estates. Common examples include incomplete metadata, inconsistent field values, taxonomy drift, duplicate or near-duplicate content, formatting anomalies, broken classification logic, outdated lifecycle states, and inconsistent naming conventions. It can also help identify content that should be archived, merged, restructured, or sent for human review. The most effective use cases are those where patterns can be defined and validated. For example, if teams have used multiple labels for the same concept over time, taxonomy alignment rules can standardize that usage. If metadata fields are inconsistently populated, AI-assisted analysis can help classify gaps and suggest normalized values. If duplicate content exists across business units, similarity analysis can surface consolidation candidates. However, not every issue should be automated. Content that carries legal, regulatory, or highly contextual meaning often requires stronger human oversight. The goal is not to automate every decision, but to separate repeatable remediation from judgment-heavy editorial work. That balance is what makes the process scalable without weakening quality control.

Large-scale cleanup is usually managed as a phased operational program rather than a single batch exercise. The estate is first segmented by content type, business domain, risk level, or platform dependency. This allows teams to prioritize high-impact areas such as search-critical content, migration candidates, or heavily reused content models. A phased model also makes it easier to validate rules before applying them broadly. Operationally, the work often combines automated analysis, rule-based remediation, and human review. Some actions can be safely executed in batches, such as metadata normalization or taxonomy mapping where the rules are clear. Other actions need editorial or governance review, especially where content meaning, ownership, or retention decisions are involved. Review queues and exception handling are important because they prevent edge cases from being forced into unsuitable automation paths. Reporting is also central to delivery. Teams need visibility into scope, issue categories, remediation status, and quality outcomes. Without that, cleanup becomes difficult to govern and hard to sustain. The most effective operating models treat cleanup as part of content operations, with clear ownership, measurable progress, and controls that continue after the initial remediation effort.

Yes, but it requires careful sequencing and workflow design. The main risk is not the cleanup activity itself, but how and when changes are introduced into active publishing operations. If remediation is applied without considering editorial calendars, ownership boundaries, or workflow dependencies, teams may lose confidence in the process or encounter avoidable rework. A low-disruption model usually starts with audit and classification work that does not alter live content. From there, remediation is grouped into categories based on risk. Low-risk changes, such as standardizing controlled metadata values, can often be handled in batches with QA checks. Higher-risk changes, such as restructuring content or consolidating duplicates, are typically routed through review workflows with clear approval steps. It is also useful to align cleanup windows with operational rhythms. Some organizations schedule remediation around release cycles or content freezes. Others use pilot domains to test rules before wider rollout. The key is to make changes observable, reversible where necessary, and clearly owned. When cleanup is integrated into existing governance and publishing practices, disruption can be kept low even in large estates.

Integration depends on the capabilities of the platform and the maturity of the content operations model. In many cases, cleanup can be connected through APIs, export pipelines, workflow tools, or administrative interfaces that allow structured updates to metadata, taxonomy, and content attributes. Some platforms support direct workflow integration, where flagged items are routed into editorial review queues before changes are approved. For enterprise CMS and DXP environments, integration usually needs to account for content types, field constraints, localization, permissions, and publishing states. Cleanup logic should respect the platform’s content model rather than operate as an external overlay that ignores structural rules. This is especially important when content is reused across channels or consumed by search, personalization, or analytics systems. A practical integration model often separates analysis from execution. AI-assisted tooling can analyze content externally, but remediation actions should be applied through governed platform mechanisms wherever possible. That keeps the process auditable and reduces the chance of introducing invalid states. Integration is most effective when it aligns with existing workflows, validation rules, and environment controls rather than bypassing them for speed.

Yes. Cleanup is often one of the most useful preparatory activities before migration or replatforming because it reduces uncertainty in the source estate. When content is duplicated, poorly classified, or inconsistently structured, migration teams spend more time handling exceptions, defining one-off mappings, and moving low-value material into the new platform. Cleanup helps reduce that noise before transformation begins. The work can support migration in several ways. It can identify content that should be archived rather than moved, normalize metadata needed for mapping, align taxonomy terms to target models, and surface structural issues that would otherwise appear late in delivery. It also helps teams understand the actual condition of the estate, which is often different from what legacy documentation suggests. That said, cleanup should be scoped in relation to migration goals. Not every issue needs to be resolved before a move. The most effective programs focus on the content domains that matter most to the target architecture, user journeys, and governance model. In that context, cleanup becomes a risk-reduction and decision-support capability rather than an open-ended editorial exercise.

Governance is maintained by defining explicit rules, review boundaries, and accountability before AI-assisted remediation is applied. AI can help classify, group, and suggest changes at scale, but it should not replace governance decisions about taxonomy ownership, metadata standards, retention policy, or content quality thresholds. Those decisions need to be established by the organization and reflected in the remediation logic. In practice, this means separating recommendation from approval. AI may identify likely duplicates, propose normalized metadata values, or detect classification anomalies, but governed workflows determine which changes can be automated, which require review, and which should be excluded. Validation checkpoints, sampling, and exception reporting are important because they make the process observable and auditable. Governance also depends on stewardship after the initial cleanup. If standards are not embedded into workflows, content debt will return. That is why the engagement usually includes controls such as metadata validation, taxonomy management processes, editorial guidance, and quality monitoring. AI can accelerate remediation, but governance is what makes the results durable and trustworthy over time.

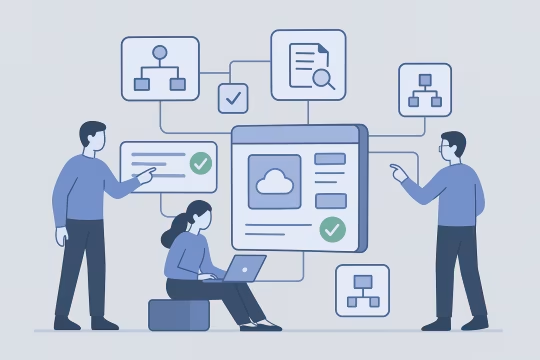

Ownership is usually shared, but it should be clearly structured. Platform teams often own the technical mechanisms, integration points, and workflow controls. Content operations or editorial governance teams typically own standards for metadata, taxonomy, lifecycle rules, and review practices. Product owners or digital leadership may help prioritize domains based on business impact, migration needs, or platform strategy. What matters most is avoiding fragmented accountability. If technical teams run cleanup without governance input, the process may optimize for speed while weakening semantic quality. If editorial teams own the work without platform support, remediation may remain manual and difficult to scale. A cross-functional model is usually the most effective because it combines system knowledge, content expertise, and operational decision making. For larger estates, it is useful to define a steering layer and an execution layer. The steering layer sets policy, scope, and quality thresholds. The execution layer manages audits, remediation workflows, QA, and reporting. This structure helps organizations move efficiently while preserving control over standards and long-term maintainability.

The main risks are over-automation, weak validation, and poor alignment with governance. If AI-generated classifications or remediation actions are applied without clear rules, the organization may introduce new inconsistencies instead of removing old ones. This is especially risky in content estates with complex taxonomy, regulatory sensitivity, or multiple downstream consumers. Another risk is treating cleanup as a purely technical batch process. Content often carries contextual meaning that is not obvious from structure alone. Duplicate detection, for example, may identify similar items that still need to remain separate for legal, regional, or operational reasons. Metadata normalization can also create problems if controlled vocabularies are not well defined or if legacy exceptions are ignored. Operational risk should also be considered. Changes applied directly to live systems without sequencing, rollback planning, or QA can disrupt editorial teams and reduce trust in the process. The way to manage these risks is through phased delivery, explicit remediation rules, human review for higher-risk cases, and strong reporting. AI is useful when it accelerates governed decisions, not when it replaces them without sufficient control.

Validation usually combines rule testing, QA sampling, stakeholder review, and exception analysis. Before broad rollout, remediation logic is tested against representative content sets to confirm that the intended changes behave correctly across different content types and edge cases. This is important because enterprise estates often contain legacy anomalies that are not obvious during initial analysis. Sampling is a practical control for large-scale work. Rather than manually reviewing every item, teams can inspect statistically meaningful subsets across issue categories, business domains, and risk levels. This helps verify that metadata normalization, taxonomy mapping, duplicate identification, or structural changes are producing reliable results. Where confidence is lower, the workflow can route items into manual review instead of automatic execution. Safety also depends on traceability. Teams should be able to see what rules were applied, what changed, what was excluded, and what remains unresolved. In mature implementations, validation is not a single checkpoint but a recurring part of the process. That approach makes cleanup more dependable and allows organizations to improve remediation logic over time rather than assuming the first pass is complete.

A typical engagement delivers more than a list of content issues. It usually includes an assessment of the content estate, a categorized view of cleanup priorities, remediation rules for metadata and taxonomy, duplicate or redundancy analysis, workflow recommendations, QA methods, and a phased execution plan. Depending on scope, it may also include pilot remediation, reporting dashboards, and governance guidance for ongoing quality control. The exact outputs depend on the platform context and the reason for the work. If the primary goal is migration readiness, the engagement may focus on retention decisions, mapping support, and structural normalization. If the goal is operational improvement, the emphasis may be on metadata quality, taxonomy consistency, and editorial workflow integration. In both cases, the work should produce actionable mechanisms rather than abstract recommendations. For enterprise teams, documentation and traceability are important deliverables as well. Stakeholders need to understand what rules were defined, how decisions were made, and how quality will be maintained after the initial cleanup. The most useful engagements leave the organization with a clearer operating model, not just a one-time remediation output.

The decision is usually based on risk, repeatability, and semantic sensitivity. Tasks that follow clear patterns and controlled rules are good candidates for automation or semi-automation. Examples include standardizing metadata values, applying known taxonomy mappings, identifying likely duplicates for review, or flagging incomplete records. These activities benefit from scale and consistency when the rules are well defined. Human review becomes more important when content meaning is ambiguous, when legal or regulatory implications exist, or when business context affects the decision. For example, two similar pages may look redundant from a similarity model but still need to remain separate because they serve different audiences or jurisdictions. In those cases, AI can support triage, but final decisions should remain with accountable teams. A practical model often uses tiers. Low-risk actions can be automated with QA checks. Medium-risk actions can be proposed by AI and approved through workflow. High-risk actions remain manual, supported by analysis and reporting. This tiered approach allows organizations to gain efficiency without losing control over quality and governance.

Collaboration usually begins with a focused discovery phase that clarifies the condition of the content estate, the operational pain points, and the platform objectives behind the cleanup effort. This often includes stakeholder interviews, a review of CMS or DXP structure, sample content analysis, governance documentation, and an initial assessment of metadata, taxonomy, duplication, and workflow issues. The purpose is to establish a realistic view of scope before remediation rules are defined. From there, the engagement is typically shaped around a priority use case. That might be migration readiness, search improvement, governance reinforcement, or reduction of editorial overhead. A pilot domain or representative content set is often selected so the team can test audit methods, validate cleanup logic, and confirm where automation is appropriate. This helps reduce uncertainty before broader rollout. Early collaboration works best when technical and operational stakeholders are involved together. Platform teams, governance leads, and content operations teams usually need a shared view of risk, ownership, and success criteria. Once that alignment is in place, the work can move into structured audit, remediation design, and phased execution with clearer expectations and stronger control.

These case studies show how large CMS and DXP estates were audited, consolidated, and remediated to restore structure, governance, and safer editorial operations. They are especially relevant for AI content cleanup because they demonstrate real delivery around builder-content sanitization, replacement of risky embedded code, migration mapping, and centralized workflow control. Together, they provide measurable proof that content quality and governance improvements can be implemented at scale across fragmented enterprise environments.

These articles cover the content modeling, governance, and migration decisions that often shape AI-assisted cleanup work. They are useful for understanding how metadata, taxonomy, redirects, search, and component contracts affect remediation at scale.

Let’s review your content structure, metadata quality, and governance model to define a practical cleanup and normalization plan.