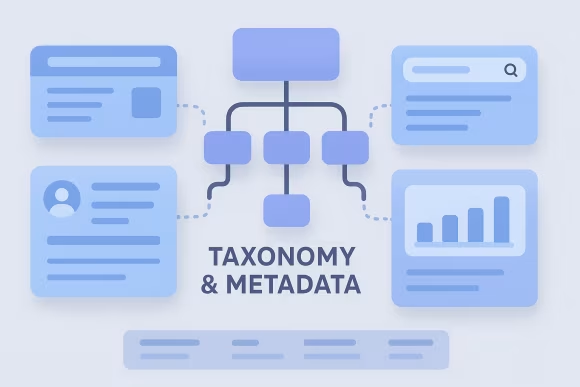

Headless content modeling services define the structured schema that connects editorial workflows to API delivery. This includes headless CMS content type design, field semantics, relationships, taxonomy, localization rules, and validation constraints so content can be reused across web, apps, and downstream systems without duplicating effort or creating channel-specific variants.

Organizations need this capability when multiple teams ship features against the same CMS, when frontends are decoupled (e.g., Next.js/React), or when content is consumed by integrations such as search, CDP, analytics, or commerce. Without a clear model and content schema governance, APIs become unstable, editorial guidance is inconsistent, and platform changes create cascading rework.

A well-architected model acts as a platform contract: it makes content predictable for developers, enforceable for editors, and evolvable for architects. It supports scalable headless CMS architecture by separating presentation from structure, enabling versioned change management, and providing a foundation for governance, testing, and automation across the content supply chain.

[01]

[01]