[01]

[01]Discovery

We align on business objectives, analytical questions, platform scope, and known data issues. This phase identifies the systems, datasets, and stakeholder groups that matter most to the engagement.

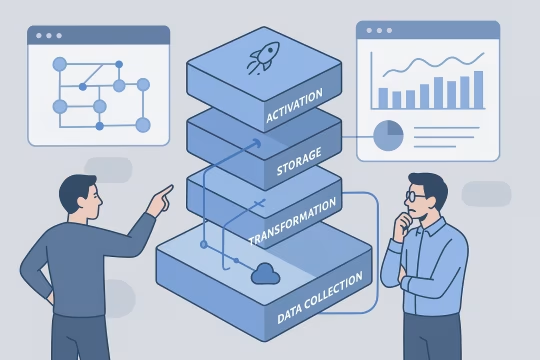

AI-assisted CDP data analysis helps organizations interpret large volumes of customer, event, identity, and consent data across complex platform ecosystems. It combines structured analytics methods with AI-supported exploration to identify data quality issues, segmentation gaps, behavioral patterns, taxonomy inconsistencies, and operational bottlenecks that are difficult to detect through manual review alone.

As customer data platforms mature, teams often inherit fragmented event models, overlapping audience logic, inconsistent identity stitching, and reporting layers that do not fully reflect how data moves through the platform. This creates uncertainty for product, marketing, analytics, and engineering teams that depend on reliable customer data to make operational and strategic decisions.

A structured analysis capability supports scalable platform architecture by making data flows, dependencies, and anomalies easier to understand. It helps teams evaluate how datasets are collected, transformed, governed, and activated across the wider ecosystem. The result is a clearer view of platform behavior, stronger analytical confidence, and a more reliable basis for roadmap planning, data governance, and downstream integration work.

As customer data environments expand, organizations often accumulate multiple event sources, identity records, consent states, audience definitions, and reporting layers without a consistent analytical model. Data may exist across CDPs, analytics tools, warehouses, activation platforms, and operational systems, but the relationships between those datasets are not always visible. Teams can access large volumes of information while still lacking confidence in what the data actually represents, how it was transformed, or whether it is suitable for decision-making.

This creates architectural and operational strain. Analytics teams spend time validating schemas, tracing event lineage, and reconciling audience logic instead of producing insight. Data engineers are pulled into repeated investigations around missing attributes, inconsistent identifiers, and pipeline anomalies. Product and marketing stakeholders may rely on segments or reports that are technically available but structurally weak, because identity stitching, consent handling, or event taxonomy has drifted over time.

The result is slower delivery, duplicated analysis effort, and increased risk in downstream use cases such as personalization, attribution, lifecycle automation, and customer reporting. Without a disciplined analysis layer, platform decisions are made on incomplete understanding, and technical debt accumulates inside the customer data ecosystem.

We review the business model, data landscape, platform objectives, and current analytical pain points. This establishes which datasets, systems, and operational questions should shape the analysis scope.

We map CDP inputs, event streams, identity records, consent data, and downstream consumers. This creates a working model of how customer data enters, moves through, and exits the platform ecosystem.

We inspect event structures, attribute definitions, naming conventions, and data relationships. The goal is to identify inconsistencies, missing context, and structural patterns that affect analysis quality.

We use AI-supported workflows to surface anomalies, repeated patterns, segmentation issues, and hidden dependencies across large datasets. This accelerates investigation while keeping outputs grounded in verifiable data structures.

Findings are validated against pipeline behavior, reporting outputs, and stakeholder usage patterns. This helps distinguish theoretical issues from problems that materially affect operations and decision-making.

We organize findings into architectural themes, data quality concerns, analytical opportunities, and governance implications. The output is structured for technical and operational stakeholders rather than raw observation lists.

We define practical next steps across data modeling, instrumentation, segmentation, governance, and platform operations. Recommendations are prioritized based on risk, dependency, and implementation effort.

This service focuses on the technical interpretation of customer data platforms and their surrounding analytics infrastructure. It combines dataset inspection, AI-assisted pattern analysis, and platform-aware evaluation to improve visibility across event collection, identity handling, segmentation logic, and operational data quality. The emphasis is on analytical structure, traceability, and maintainable decision support for enterprise teams working across complex customer data ecosystems.

Delivery is structured to move from platform understanding to dataset analysis, validation, and prioritized recommendations. The model supports collaboration between data, engineering, analytics, and operational stakeholders while keeping outputs grounded in technical evidence.

[01]

[01]We align on business objectives, analytical questions, platform scope, and known data issues. This phase identifies the systems, datasets, and stakeholder groups that matter most to the engagement.

[02]

[02]We catalogue available sources, schemas, identifiers, consent fields, and downstream consumers. This creates a practical baseline for analysis and highlights where visibility is incomplete before deeper investigation begins.

[03]

[03]We examine how customer data is collected, transformed, stored, and activated across the platform landscape. The review focuses on dependencies, lineage, and structural decisions that influence analytical reliability.

[04]

[04]We perform structured dataset review using AI-assisted exploration alongside conventional analytical methods. This phase surfaces anomalies, inconsistencies, segmentation issues, and operational patterns across large volumes of data.

[05]

[05]Findings are checked against pipeline behavior, reporting outputs, and stakeholder workflows. This ensures that recommendations reflect actual platform conditions rather than isolated data artifacts.

[06]

[06]We translate analysis into prioritized technical actions across modeling, governance, instrumentation, and operations. Recommendations are organized by impact, dependency, and implementation complexity.

[07]

[07]We review findings with engineering, analytics, and product stakeholders to confirm relevance and ownership. This phase helps convert analysis into a shared understanding of platform priorities.

[08]

[08]Where needed, we define next-step workstreams such as pipeline remediation, governance improvements, or segmentation redesign. This supports continuity between analysis and implementation.

The primary value of this service is improved clarity across customer data operations and architecture. Better analytical visibility supports more reliable decisions, more efficient engineering effort, and reduced uncertainty in downstream activation and reporting.

Teams gain a more accurate view of what customer data exists, how it is structured, and where it moves. This reduces time spent interpreting undocumented relationships across CDP and analytics environments.

Audience definitions become easier to validate when underlying attributes, events, and identity logic are properly analyzed. This improves confidence in activation, reporting, and lifecycle decision-making.

Repeated manual analysis often consumes engineering and analytics capacity. A structured review process shortens the time needed to locate anomalies, trace dependencies, and explain inconsistent outputs.

Analysis of identity, consent, and attribute usage helps organizations understand where governance controls are weak or ambiguous. This creates a stronger basis for policy enforcement and operational accountability.

Architecture and roadmap choices are more effective when teams understand the actual condition of their customer data ecosystem. This reduces the risk of investing in downstream features on top of unstable data foundations.

Data issues that remain hidden can affect reporting, activation, and customer experience workflows. Earlier detection of structural problems reduces the likelihood of silent failures and misaligned business actions.

Analytics, product, and engineering teams spend less time reconciling conflicting interpretations of the same data. Shared analysis improves collaboration and shortens the path from observation to action.

This service often connects to adjacent work in customer data architecture, governance, event design, and analytics platform engineering.

Customer analytics platform implementation for governed metrics and behavioral analytics

Unified customer profile architecture and insight-ready datasets

Scalable enterprise audience segmentation models and cohort definition frameworks

CDP identity resolution design for unified customer profiles

Cross-channel identity stitching with governed matching rules

Enterprise event streaming architecture and analytics-ready data model design

Airflow data orchestration for CDP ingestion and transformation

CDP monitoring and data reliability for customer data

How to architect privacy and consent for CDP pipelines

Automated reporting workflows and structured insight generation

CDP audience activation with governed delivery to channels

CDP event pipeline architecture and identity foundations

Common questions about architecture, operations, integration, governance, risk, and engagement for AI-assisted analysis of customer data platforms and analytics ecosystems.

AI CDP data analysis sits between raw data collection and downstream decision-making. In enterprise environments, customer data usually moves across event collection layers, CDPs, warehouses, BI tools, activation platforms, and governance controls. The analysis function helps teams understand whether those layers are producing coherent, trustworthy, and operationally useful data rather than simply moving records from one system to another. From an architectural perspective, the work focuses on data relationships, schema quality, identity behavior, segmentation dependencies, and lineage visibility. AI can accelerate exploration of large datasets and highlight patterns that would otherwise require extensive manual review, but it should operate within a disciplined engineering process. That means findings are validated against actual platform structures, transformation logic, and stakeholder usage. The value is not limited to reporting. It also informs platform design choices such as event model refinement, identity strategy, governance controls, and pipeline prioritization. In practice, this makes AI-assisted analysis a supporting capability for broader customer data architecture rather than a standalone analytics exercise.

This type of analysis can uncover structural issues that are difficult to see when teams only review dashboards or isolated datasets. Common examples include inconsistent event naming, missing payload context, duplicated profile attributes, weak identity stitching, conflicting audience logic, and unclear consent propagation across systems. These issues often exist for long periods because each one appears manageable in isolation, while the combined effect creates broader analytical instability. It can also expose architectural mismatches between systems. For example, a CDP may ingest events with one taxonomy while downstream reporting assumes another. Identity records may be merged in ways that support activation but distort analysis. Consent fields may exist in multiple systems without a clear operational source of truth. AI-assisted exploration is useful here because it can surface repeated patterns, outliers, and hidden dependencies across large volumes of records. The goal is not simply to list defects. It is to understand how those defects relate to platform design, operational processes, and downstream use cases. That makes the output useful for architects, data engineers, and product owners who need to prioritize remediation work.

For analytics and data operations teams, the main benefit is reduced time spent on repetitive investigation. In many organizations, teams repeatedly answer the same questions about missing events, inconsistent attributes, segment discrepancies, or unexplained reporting changes. AI-assisted analysis helps organize and accelerate that investigative work by scanning large datasets, identifying likely problem areas, and grouping related anomalies for review. Operationally, this improves triage. Instead of starting every issue from scratch, teams can work from a clearer picture of event structures, identity dependencies, consent states, and transformation behavior. That makes it easier to separate one-off data incidents from systemic platform problems. It also helps teams document recurring issues in a more structured way, which is useful for backlog planning and governance discussions. The service does not replace core data engineering or analytics operations. It supports them by making platform behavior easier to interpret. Over time, this can reduce investigation overhead, improve communication between technical and non-technical stakeholders, and create a more stable basis for reporting, segmentation, and activation work.

Yes. While many engagements begin as a focused assessment, the same analytical methods can support ongoing platform operations. Customer data ecosystems change continuously as new events are introduced, audience logic evolves, consent requirements shift, and downstream systems are added or reconfigured. A one-time review can identify current issues, but recurring analysis helps teams detect drift before it becomes operationally expensive. In an ongoing model, analysis can be aligned with release cycles, instrumentation changes, governance reviews, or quarterly platform health checks. AI-assisted workflows are particularly useful when data volumes are large and patterns need to be re-evaluated regularly. They can help surface changes in schema behavior, segment composition, identity matching, or event completeness without requiring the same level of manual effort each time. The important point is governance. Ongoing analysis should be tied to clear ownership, validation rules, and escalation paths. When integrated into operations in a disciplined way, it becomes a practical support layer for platform reliability and continuous improvement rather than an isolated diagnostic exercise.

The analysis is designed to work across the existing tool landscape rather than replace it. Most organizations already have a combination of CDP interfaces, event collection tools, warehouses, BI platforms, and operational reporting systems. The role of the analysis is to interpret the data moving through those systems, compare structures and outputs, and identify where assumptions break down between one layer and another. In practice, this means reviewing exports, schemas, event definitions, profile attributes, audience logic, and reporting outputs from the systems already in use. AI-assisted methods can help summarize patterns and detect anomalies across these sources, but the process remains grounded in the actual technical environment. The objective is to understand interoperability, not to create a parallel analytics stack. This is especially valuable when different teams rely on different tools for different purposes. Marketing may trust one view of the customer, analytics another, and engineering a third. Integrated analysis helps reconcile those perspectives by tracing how data is represented and transformed across the platform ecosystem.

Yes, and that is often necessary for a meaningful result. Event data on its own can show behavioral activity, but enterprise customer data decisions usually depend on how events connect to identities, profiles, permissions, and activation rules. If identity and consent data are excluded, teams may misinterpret what the event layer actually supports in production. The analysis typically examines identifier quality, profile linkage, merge behavior, consent attributes, preference states, and how those elements are propagated across systems. This helps reveal whether customer records are analytically coherent and whether downstream segmentation or reporting is being shaped by hidden identity or permission constraints. AI-assisted exploration can be useful for detecting unusual combinations, duplicated states, or inconsistent field usage across large datasets. Including identity and consent data also improves governance insight. It helps teams understand whether data is not only available, but also usable in a compliant and operationally consistent way. That broader context is important for CDP product owners, data engineers, and marketing operations teams alike.

Governance is essential because AI can accelerate interpretation, but it does not remove the need for controlled data access, validation, and accountability. Customer data environments often contain sensitive profile attributes, consent states, and behavioral records that require clear handling rules. Any AI-assisted workflow should operate within established security, privacy, and access controls, with careful attention to what data is exposed, transformed, or summarized. There is also a governance question around analytical trust. AI-generated observations should not be treated as authoritative without verification. Findings need to be checked against source schemas, pipeline logic, and stakeholder context. This is particularly important when analysis informs segmentation, reporting, or roadmap decisions, because incorrect interpretation can create downstream operational issues. A strong governance model usually includes scoped data access, documented prompts or analytical methods where relevant, validation steps, auditability of findings, and clear ownership for remediation decisions. In enterprise settings, the goal is to use AI as an analytical accelerator inside a controlled engineering process, not as an ungoverned decision-maker.

Findings should be documented in a way that supports both immediate remediation and long-term platform governance. That usually means organizing outputs by issue type, affected systems, data domains, severity, and downstream impact. For example, an event taxonomy problem should be linked to the relevant collection layer, transformation logic, reporting dependency, and operational owner rather than described as an isolated observation. Documentation is most useful when it separates evidence from interpretation. Teams need to see what was observed in the data, why it matters architecturally, and what action is recommended. This structure helps analytics teams, engineers, and product owners work from the same source of truth even if their priorities differ. AI-assisted analysis can help generate summaries and pattern groupings, but the final documentation should remain precise and reviewable. Well-structured documentation also supports governance reviews, backlog planning, and future re-analysis. It creates continuity between one assessment and the next, making it easier to track whether issues were resolved, whether drift has returned, and where platform controls need to be strengthened.

The service helps reduce several forms of operational and architectural risk. One of the most common is decision risk: teams act on reports, segments, or customer views that appear valid but are built on inconsistent events, weak identity logic, or incomplete consent handling. That can affect campaign execution, product analysis, customer communication, and strategic planning. It also reduces platform risk by exposing hidden dependencies and structural weaknesses before they cause larger failures. For example, if a key audience depends on unstable attributes, or if reporting relies on events with inconsistent payloads, those issues can remain invisible until a release, migration, or governance review creates disruption. AI-assisted analysis improves the speed at which such patterns can be identified, especially in large and fragmented environments. Another important area is resource risk. Without structured analysis, engineering and analytics teams may spend substantial time on repeated investigations with limited cumulative learning. A disciplined analytical process creates reusable understanding, which lowers the cost of troubleshooting and improves prioritization across the customer data roadmap.

Yes. AI can accelerate exploration and summarization, but it can also introduce interpretation errors if outputs are accepted without technical validation. Customer data platforms contain nuanced relationships between events, identities, consent states, and downstream business logic. An AI model may identify patterns that appear meaningful while missing operational context, source system constraints, or implementation-specific exceptions. That is why AI-generated analysis should be treated as a support mechanism rather than a final authority. Findings need to be checked against schemas, transformation rules, platform documentation, and stakeholder knowledge. In enterprise environments, this validation step is not optional. It is what turns AI-assisted observation into reliable engineering insight. Another risk is over-expansion of scope. Because AI can process large volumes of information quickly, teams may generate more observations than they can realistically act on. A disciplined engagement focuses on the questions that matter most to architecture, operations, and governance. Used carefully, AI improves analytical efficiency. Used uncritically, it can create noise and false confidence.

A typical engagement delivers a structured view of the customer data environment rather than a generic summary. Outputs often include source and dependency mapping, schema and event model observations, identity and consent analysis, segmentation findings, data quality issues, and prioritized recommendations. The exact format depends on the scope, but the goal is always to make the state of the platform easier to understand and act on. For technical stakeholders, the most useful deliverables usually connect findings back to architecture and operations. That means documenting where issues originate, which systems are affected, what downstream consequences exist, and what remediation paths are realistic. For product and operational stakeholders, the output should clarify which analytical assumptions are safe, which are weak, and where platform changes may be needed. In some cases, the engagement also defines follow-on work such as event redesign, governance improvements, pipeline remediation, or segmentation restructuring. The analysis itself is valuable, but its practical usefulness depends on whether teams can convert findings into a prioritized and owned set of next steps.

Scope is usually determined by combining platform criticality with analytical uncertainty. In large customer data ecosystems, it is rarely efficient to analyze every source and use case at the same depth from the start. Instead, the work is prioritized around the systems, datasets, and decisions that have the greatest operational importance or the highest level of current ambiguity. That often means beginning with a subset of event streams, identity domains, audience models, or reporting dependencies that are central to business operations. The team then evaluates where those areas connect to other systems and whether the scope needs to expand. AI-assisted methods are useful because they can accelerate exploration across broad datasets, but the engagement still needs clear boundaries to remain actionable. A good scoping process also considers stakeholder needs. Data engineers may need lineage clarity, analytics teams may need schema confidence, and CDP owners may need segment validation. The engagement is most effective when those priorities are aligned into a shared set of technical questions and decision points.

Collaboration typically begins with a short discovery phase focused on context rather than immediate analysis. The first step is to understand the current customer data landscape, the systems involved, the main operational questions, and the areas where teams have low confidence in the data. This usually includes conversations with data, analytics, product, and operational stakeholders, along with an initial review of available documentation, schemas, and platform outputs. From there, the engagement defines a practical scope. That may involve selecting priority datasets, event domains, identity models, consent fields, or downstream use cases for initial review. Access requirements, governance constraints, validation methods, and expected outputs are also clarified early so the work can proceed in a controlled way. This is especially important when AI-assisted workflows are part of the analysis process. Once scope and access are agreed, the work moves into source mapping and structured analysis. Starting this way helps ensure that the engagement is tied to real platform questions, that findings can be validated, and that the resulting recommendations are relevant to both technical and operational decision-makers.

These case studies show how customer data, analytics instrumentation, and tracking architecture were implemented in real delivery environments. They are especially relevant for understanding how CDP-related analysis connects to event quality, behavioral measurement, segmentation inputs, and reporting visibility across complex digital ecosystems. Together, they provide concrete proof of how data collection and analytics foundations can be improved to support better decisions and downstream activation.

These articles expand on the governance, identity, and event-model issues that shape CDP analysis work. They are useful for understanding why customer data quality breaks down, how activation depends on ownership and confidence, and how event contracts stay reliable across channels.

Let’s review your CDP, event, identity, and analytics landscape to identify where AI-assisted analysis can improve visibility, governance, and platform decision-making.