When a Drupal platform goes down, the first question is rarely technical. It is operational: how quickly do we need this service back, and how much data can we afford to lose? Those two questions sit behind recovery time objective (RTO) and recovery point objective (RPO), but in many organizations they remain vague until a real outage forces urgent decisions.

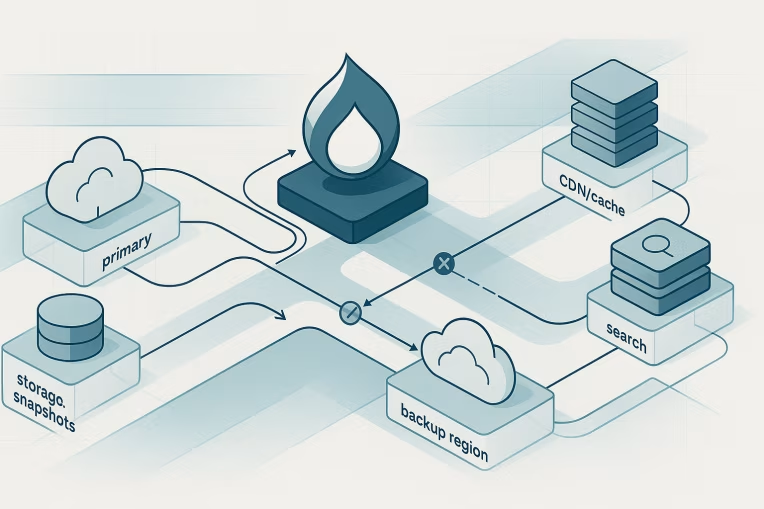

That is where disaster recovery planning often breaks down. Teams may have backups, infrastructure-as-code, database snapshots, and even a secondary environment, yet still lack a credible answer to what recovery actually looks like under pressure. In practice, recovery depends on more than restoring a database. Drupal estates often rely on files, object storage, search, CDN behavior, identity systems, content workflows, upstream integrations, and people who know which sequence of steps matters.

For enterprise Drupal platforms, disaster recovery planning is best approached as a design exercise and an operating model decision. The goal is not to produce a perfect theoretical topology. The goal is to define recovery targets that reflect business priorities, validate whether the platform can realistically meet them, and make the gaps visible before an incident does.

Why backup success does not equal recovery readiness

A successful backup proves only one narrow thing: some data was copied somewhere else.

It does not prove that the platform can be restored within an acceptable time window. It does not prove that dependent services are available in the recovery environment. It does not prove that the restored platform will behave correctly once traffic returns.

That distinction matters because enterprise Drupal environments usually involve multiple recovery domains:

- Structured content and configuration in the database

- Public and private files, often in shared or object storage

- Search indexes and search service connectivity

- CDN configuration, cache invalidation behavior, and origin routing

- Identity and access dependencies such as SSO or external identity providers

- Integrations with downstream systems like CRM, DAM, personalization, analytics, or commerce services

- Deployment pipelines, secrets management, DNS, certificates, and observability tooling

A platform can have excellent retention practices for backups and still fail its business recovery target because one of those other domains was omitted from planning.

This is also where teams confuse retention with recoverability. Retention answers how long data is kept. Recoverability answers whether the service can be restored to a defined point within a defined time. They are related, but they are not interchangeable.

Defining RTO and RPO in business terms for Drupal estates

RTO and RPO should not start as infrastructure values.

They should start with business impact.

RTO is the maximum tolerable duration of service unavailability. RPO is the maximum tolerable amount of data loss measured in time. If a platform has an RPO of 15 minutes, the organization is saying that losing up to 15 minutes of newly created or changed data may be acceptable in that scenario.

For Drupal, those targets can vary significantly depending on the nature of the platform:

- A marketing site with infrequent content updates may tolerate longer RPO than a platform with continuous editorial publishing.

- A global content platform serving critical customer journeys may require a much tighter RTO than an internal knowledge portal.

- A headless Drupal backend feeding multiple consumer applications may need different recovery targets for the authoring layer, APIs, and edge delivery paths.

The practical way to define RTO and RPO is to ask business-oriented questions such as:

- Which user journeys are considered critical during an incident?

- What is the operational impact of one hour of downtime versus four hours?

- Which content changes or transactions would materially matter if lost?

- Does partial service availability count as recovery, or is full capability required?

- Are there different acceptable targets for public browsing, authoring, publishing, search, and authenticated access?

These answers usually reveal that a single platform may not have one universal recovery target. Different service tiers may need different objectives. For example, restoring read-only public access may be the first target, while authoring, search freshness, and nonessential integrations are restored in later phases.

That is often a more realistic planning model than assuming all functions recover simultaneously.

Mapping the real dependency chain: database, files, search, CDN, identity, integrations

Once the business target is defined, the next step is to map what the platform actually depends on.

This is where many recovery plans become more accurate very quickly. On paper, a Drupal site may appear to be an application and a database. In reality, the recovery chain is usually broader.

Start with the core layers:

- Application runtime: container platform, virtual machines, web tier, PHP runtime, and deployment artifacts

- Database: managed database service or self-managed cluster, replication model, snapshot strategy, restore process, and promotion steps

- Files: local shared filesystem, network-attached storage, or object storage for media and generated assets

- Cache layers: Drupal cache bins, reverse proxy, application cache, and CDN edge caches

Then map the external dependencies that can block or degrade recovery:

- Search: whether search can be rebuilt, how long reindexing takes, and whether search is required for essential journeys

- Identity: SSO, LDAP, OAuth, or other authentication dependencies for authors, administrators, or end users

- DNS and traffic management: failover routing, TTL assumptions, certificate management, and health-check behavior

- External integrations: CRM, DAM, PIM, translation services, personalization engines, analytics, commerce, or middleware

- Secrets and configuration services: whether the recovery environment can access the same secure dependencies

- Observability and incident tooling: logging, monitoring, alerting, and communication channels needed during recovery

For each dependency, document three things:

- Whether the dependency is required for minimum viable service

- Whether it has its own recovery commitment outside the Drupal team

- What happens if it is unavailable during failover or restore

That exercise is often more valuable than debating an abstract topology. It forces the organization to identify hidden single points of failure, ambiguous ownership, and unrealistic assumptions about what a “restored site” actually means.

Failure scenarios that change the recovery design

Recovery architecture should be shaped by plausible failure scenarios, not only by preferred infrastructure patterns.

Different incidents produce different constraints:

- A bad deployment may require rollback more than regional failover.

- Database corruption may make recent replicas unusable and shift emphasis to point-in-time restore.

- Object storage deletion or file inconsistency may affect media availability even if the application stack is healthy.

- CDN misconfiguration may make the platform appear down while origin systems remain intact.

- Identity-provider disruption may block authoring or authenticated experiences without affecting anonymous traffic.

- A regional infrastructure outage may require secondary-region promotion and traffic rerouting.

- An integration failure may break critical user flows even though Drupal itself is technically available.

These scenarios matter because they alter what counts as a workable recovery pattern.

For example, a warm standby environment can reduce application recovery time, but it does not automatically solve data corruption. Cross-region replication can improve failover posture, but it can also replicate bad writes. A static maintenance page at the edge may preserve some customer communication while origin restoration continues, but it does not restore editorial operations.

The right design therefore depends on what the organization is trying to survive.

A practical workshop question is: Which scenarios are we designing to recover from within our stated RTO and RPO, and which scenarios would fall outside those targets? That creates a more honest plan than implying universal resilience.

Recovery patterns for single-site, multisite, and headless Drupal platforms

There is no single correct disaster recovery pattern for every Drupal estate. The right approach depends on criticality, budget, operating maturity, dependency sprawl, and the consequences of recovery complexity itself.

For single-site Drupal platforms, common patterns range from restore-based recovery to pre-provisioned standby environments.

A restore-based model may be appropriate when the business can tolerate a longer RTO. In that approach, infrastructure is recreated or activated, the database is restored, files are attached or recovered, configuration is validated, and traffic is switched after checks pass.

A standby model can reduce recovery time by keeping a secondary environment partially or fully prepared. But it only works if teams also define:

- How data reaches the secondary environment

- How secrets and configuration remain current

- Whether files, search, and integrations are available there

- How traffic switching is performed and validated

For Drupal multisite, disaster recovery planning gets more complicated because infrastructure efficiency can conceal blast radius.

Multisite can centralize operational patterns, but it can also couple risk. A database issue, shared service outage, or deployment problem may affect multiple sites at once. Recovery planning therefore needs to define whether targets apply to the entire multisite estate, to site groups, or to selected priority tenants first. That kind of estate-level decision-making is closely related to Drupal platform strategy, especially when recovery commitments differ across business units.

Questions worth answering include:

- Are content, code, files, and databases isolated per site or shared?

- Can one site be restored independently?

- Is failover all-or-nothing, or can subsets of sites be recovered first?

- Which sites justify tighter RTO and RPO than others?

For headless Drupal platforms, the planning scope expands beyond Drupal availability.

The CMS may recover while downstream applications still fail because APIs, preview flows, frontend deployments, or edge configuration remain broken. Headless recovery design should explicitly address:

- API availability and authentication

- Content publishing and preview workflows

- Cache invalidation across consumer applications

- Search indexing and downstream data synchronization

- Whether a degraded mode exists for frontend applications if Drupal APIs are impaired

In headless environments, the most useful recovery target is often service-based rather than system-based. Instead of asking whether Drupal is up, ask whether the user-facing digital experience can serve critical journeys acceptably. That usually requires stronger alignment between Drupal, frontend, and content platform architecture decisions than many teams initially assume.

Runbooks, rehearsal cadence, and ownership boundaries

A recovery strategy is not complete until people know who does what under pressure.

That is where runbooks and ownership boundaries matter. In many enterprises, the Drupal team does not directly control the database platform, DNS, CDN, identity provider, cloud networking, or security approvals needed during failover. If those dependencies are outside the team, the recovery plan must reflect that reality.

A credible runbook should identify:

- Incident triggers that move the team from diagnosis to recovery execution

- Recovery decision-makers and escalation path

- Step sequence, including dependencies that must be confirmed before traffic changes

- Validation checks for application health, content integrity, media, authentication, and critical journeys

- Roll-forward or rollback criteria if the recovery attempt introduces new issues

- Communication expectations across engineering, operations, product, and business stakeholders

The runbook should also distinguish between technical restoration and business service restoration. A system can be technically online while still failing critical outcomes because search is empty, media is missing, or editorial access is unavailable.

Rehearsal cadence is equally important. If recovery steps are never practiced, timing estimates are usually optimistic. Even lightweight rehearsals can surface missing credentials, stale documentation, hidden manual dependencies, or assumptions about who is available.

A practical cadence might include:

- Periodic tabletop exercises for scenario review and decision logic

- Targeted technical drills for database restore, environment promotion, or traffic switching

- Post-change validation when architecture or vendor dependencies materially shift

- Periodic review of whether stated RTO and RPO still match business expectations

The goal is not constant disruption. It is keeping recovery knowledge current enough that the plan remains operational rather than ceremonial.

Common mistakes that make recovery targets fictional

Many published recovery targets are really aspirations. They become fictional when the architecture, tooling, or governance does not support them.

Common causes include:

- Setting RTO and RPO without business input or service tiering

- Assuming backups alone satisfy disaster recovery requirements

- Ignoring file storage, search, CDN, identity, or external integrations

- Treating replicated failure as resilience, especially in corruption scenarios

- Forgetting DNS, certificates, secrets, and access dependencies in secondary environments

- Assuming teams can execute complex failover from memory

- Defining targets for full service restoration when only partial restoration is achievable in the stated time

- Applying one recovery model to all sites regardless of criticality

- Neglecting multisite blast radius and shared-component failure modes

- Failing to revisit recovery assumptions after platform modernization or organizational change

The most important correction is honesty. A longer but credible RTO is more useful than an aggressive target that depends on untested steps and unavailable teams.

A practical checklist for recovery planning workshops

For enterprise Drupal teams, a recovery planning workshop can be productive if it stays concrete. Use a checklist like this to structure the discussion:

- Define the critical user journeys and business services the platform supports

- Set target RTO and RPO in business terms, not only infrastructure terms

- Separate minimum viable service from full feature restoration

- Inventory all required recovery dependencies: database, files, cache, search, CDN, identity, integrations, secrets, DNS, certificates, monitoring

- Identify which dependencies are owned by other teams or providers

- Document likely failure scenarios and note where each changes the recovery path

- Decide whether recovery relies on restore, standby, failover, phased service restoration, or a combination

- Clarify multisite or headless-specific considerations

- Write and version the runbook, including validation steps and communications

- Rehearse enough to test assumptions, timing, and handoffs

- Capture known gaps between target and current capability

- Assign owners and review dates for remediation work

The output of that workshop should not be a generic disaster recovery statement. It should be a decision record: what the organization is trying to recover, within what limits, using which assumptions, and with whose involvement.

Disaster recovery for Drupal is strongest when it is treated as part of platform architecture and service governance. Backups matter. Redundancy matters. But neither replaces clear recovery objectives, dependency awareness, and rehearsed execution. Teams working through large, integration-heavy estates often discover this during broader multisite modernization efforts, where shared components and rollout governance directly affect recovery realism.

If an incident tests the platform tomorrow, the value of planning will not come from having the most sophisticated diagram. It will come from knowing which services matter most, what recovery actually requires, and whether the stated RTO and RPO are grounded in reality.

Tags: Drupal, Drupal disaster recovery planning, Drupal RTO and RPO, enterprise Drupal resilience, Drupal failover strategy, Drupal backup architecture, Drupal platform operations