In enterprise headless environments, publishing speed is rarely limited by the CMS alone. The real bottleneck often appears after content is saved.

A page may be updated in the CMS, but the frontend is still serving a static artifact, the CDN still has an older response cached, a search index has not been refreshed, and downstream components are each using different assumptions about freshness. Editorial users experience this as a simple problem: "I published a change, but the site still looks stale." Engineering teams experience it as a more complex one: Which layers should be refreshed, in what order, and with what blast radius?

That is why cache invalidation deserves architectural attention in headless systems. It directly affects publishing latency, infrastructure cost, operational risk, and trust in the platform.

A good invalidation model does not try to make every layer instantly fresh at all times. Instead, it defines where staleness is acceptable, where it is not, and how events move predictably through the system.

Why cache invalidation becomes a publishing problem in headless platforms

Traditional coupled platforms often hide cache behavior behind one runtime and one content repository. Headless platforms usually do not. They split content management, rendering, delivery, and indexing into separate services and deployment concerns.

That separation brings flexibility, but it also means publishing becomes a distributed workflow.

When a content editor updates a landing page, several things may need to happen:

- the canonical content record changes in the CMS

- affected routes may need regeneration or revalidation

- edge or CDN caches may need partial purge or refresh

- shared data fragments may affect multiple pages

- search indexes may need re-crawl or targeted updates

- preview and published content boundaries must remain isolated

If these actions are not designed together, teams usually fall into one of two patterns:

- Over-purging, where every publish triggers rebuilds or broad CDN clears, causing cost spikes and unnecessary origin load

- Under-purging, where updates do not propagate correctly, leading to stale pages and declining editorial confidence

In other words, cache invalidation is not just a performance topic. It is part of the publishing architecture.

Where stale content actually lives: CMS, build layer, application cache, CDN, search

Many teams talk about "the cache" as if it were one thing. Enterprise headless stacks usually have several independent caching layers, each with different failure modes.

CMS layer

The CMS is the source of truth, but even it may expose content through APIs that use response caching, delivery environments, or eventual consistency patterns. If teams assume the CMS API is instantly coherent in all contexts, they may misdiagnose downstream problems.

Build or generation layer

If the frontend uses static generation, incremental regeneration, or precomputed route artifacts, staleness can live in generated output. This is often where publishing latency becomes visible, especially when a single content change affects many pages. In platforms built around static generation architecture, this layer often becomes the first place where invalidation policy and publishing expectations collide.

Application data cache

Modern frontend stacks often cache fetch responses, route data, fragments, or server-rendered results. These caches can exist in memory, distributed stores, or framework-managed layers. They may not align cleanly with page URLs, which makes invalidation more subtle than simple path purging.

CDN or edge cache

This is the most visible cache because it serves end users directly. It may cache whole HTML responses, API responses, images, or personalized variants. Purging here can restore freshness quickly, but if the origin or app cache is still stale, the CDN may simply refill with old data. That is why edge infrastructure architecture matters as much as application logic when teams define purge scope and cache keys.

Search index

Search often behaves like a cache with its own update lifecycle. If search indexing lags behind publishing, users can discover outdated titles, descriptions, or URLs even after the main site is refreshed.

The practical lesson is simple: freshness problems are usually multi-layer problems. Solving them requires a map of content propagation, not just a purge API.

Common invalidation patterns: full rebuilds, path-based purge, tag-based purge, time-based revalidation

Most enterprise implementations use a combination of invalidation strategies rather than a single technique.

Full rebuilds

This is the simplest operational model to understand. A publish event triggers a full site build and redeploy.

It works reasonably well when:

- the site is small

- content changes are infrequent

- deployment pipelines are fast

- tolerance for stale content is low but rebuild costs are acceptable

It becomes problematic when:

- thousands of pages share common content dependencies

- publish frequency is high

- multiple locales or brands expand the build surface

- infrastructure cost and queue times matter

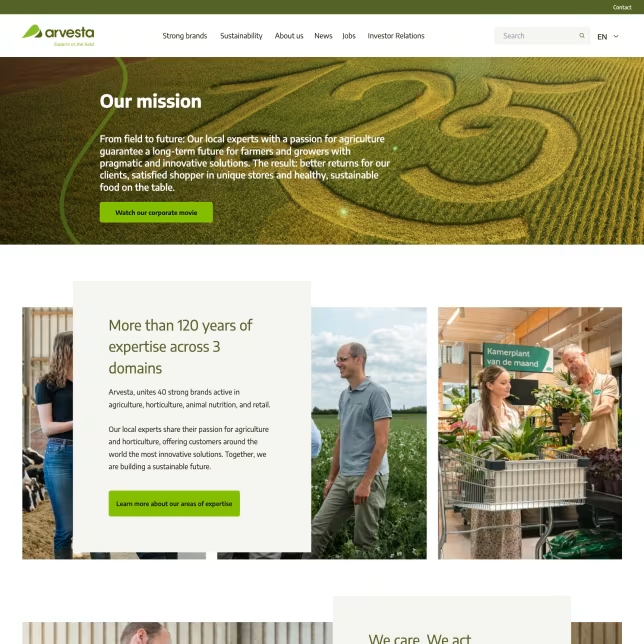

Full rebuilds are attractive because they reduce invalidation logic, but they often shift complexity into build throughput and incident recovery. Teams that have lived through this at scale often end up moving toward incremental patterns similar to the Alpro delivery model, where publishing efficiency matters across regions and content domains.

Path-based purge

This model invalidates specific URLs or route groups. It is intuitive because editors and developers both understand pages.

It works best when content-to-page relationships are direct and stable, such as:

- one article updating one route

- one product page mapping to one canonical URL

- one landing page corresponding to a known set of localized paths

Its limits appear when content is reused broadly. A single taxonomy update, promotion banner, footer change, or shared module may affect dozens or thousands of pages that are not obvious from one path list.
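As a minimal sketch of path-based scope, the localized paths owned by one changed entry could be derived as shown here. The route patterns, content type names, and the `ChangedEntry` shape are hypothetical and not taken from any specific CMS or framework.

```python
from dataclasses import dataclass

# Hypothetical route patterns per content type; real routing lives in the frontend.
ROUTE_PATTERNS = {
    "article": "/{locale}/articles/{slug}",
    "product": "/{locale}/products/{slug}",
    "landing_page": "/{locale}/{slug}",
}


@dataclass(frozen=True)
class ChangedEntry:
    content_type: str
    slug: str
    locales: tuple


def paths_to_purge(entry: ChangedEntry) -> list:
    """Return the localized paths directly owned by one content entry."""
    pattern = ROUTE_PATTERNS.get(entry.content_type)
    if pattern is None:
        # No direct route: path-based purge cannot express this change.
        return []
    return [pattern.format(locale=loc, slug=entry.slug) for loc in entry.locales]


print(paths_to_purge(ChangedEntry("article", "cache-basics", ("en", "de"))))
# A shared module such as a footer has no owning route, so nothing is returned.
print(paths_to_purge(ChangedEntry("footer", "global", ("en", "de"))))
```

The empty result for the footer is the limit described above: the change is real, but a path list alone cannot express it.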

Tag-based purge

Tag-based invalidation associates cached content with logical keys such as content IDs, model types, taxonomies, campaigns, or shared components. When an event occurs, the system invalidates everything linked to those keys.

This model is often more scalable than pure path-based invalidation because it reflects content relationships rather than surface URLs. It is especially useful when the frontend caches fragments or data fetches that feed many routes.

The tradeoff is architectural maturity. Tags require disciplined modeling, consistent naming, and a strong understanding of dependency boundaries. Without that, teams can create tags that are too broad to be useful or too narrow to be reliable.
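To illustrate the idea, here is a small in-memory sketch of a cache whose entries are indexed by tags. The class name, the `type:id` tag format, and the sample keys are assumptions for illustration; real frameworks and CDNs expose their own tagging mechanisms.

```python
from collections import defaultdict


class TaggedCache:
    """In-memory cache whose entries are indexed by logical tags."""

    def __init__(self) -> None:
        self._values = {}
        self._keys_by_tag = defaultdict(set)

    def set(self, key: str, value: str, tags: list) -> None:
        self._values[key] = value
        for tag in tags:
            self._keys_by_tag[tag].add(key)

    def get(self, key: str):
        return self._values.get(key)

    def invalidate_tag(self, tag: str) -> list:
        """Drop every live entry linked to the tag and return the removed keys."""
        linked = self._keys_by_tag.pop(tag, set())
        removed = sorted(k for k in linked if k in self._values)
        for key in removed:
            del self._values[key]
        return removed


cache = TaggedCache()
cache.set("/en/articles/a", "<html>A</html>", ["article:1", "author:7"])
cache.set("/en/articles/b", "<html>B</html>", ["article:2", "author:7"])
cache.set("/en/about", "<html>About</html>", ["page:about"])

# Renaming author 7 invalidates both articles but leaves /en/about cached.
print(cache.invalidate_tag("author:7"))
```

One tag covers every route that rendered the author, which is what makes the naming discipline matter: a tag like `author:7` is precise, while a tag like `content` would purge everything.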

Time-based revalidation

Time-based expiration assigns each cached item a freshness window, such as a few minutes or an hour, after which it is refetched or regenerated. It reduces eventing complexity and can be acceptable for content that does not need immediate propagation.

This is often useful for:

- low-risk marketing content

- secondary navigation or recommendation blocks

- content feeds where minor lag is acceptable

- systems where webhook reliability is still maturing

However, time-based revalidation should be treated as a deliberate freshness policy, not an accidental fallback. If editors expect instant updates, a timer-based model will feel broken no matter how elegant the implementation is.
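A freshness window can be sketched as a small cache with an injectable clock. The class, the 300-second window, and the `banner` key are illustrative assumptions, not a framework API.

```python
import time
from typing import Callable


class TtlCache:
    """Serve a cached value until its freshness window expires, then refetch."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}

    def get(self, key: str, fetch: Callable[[], str]) -> str:
        now = self._clock()
        cached = self._entries.get(key)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]  # still inside the freshness window
        value = fetch()
        self._entries[key] = (now, value)
        return value


# A fake clock makes the behavior visible without waiting.
now = [0.0]
cache = TtlCache(ttl_seconds=300, clock=lambda: now[0])
cache.get("banner", lambda: "v1")
now[0] = 120.0
print(cache.get("banner", lambda: "v2"))  # prints "v1": the edit is not visible yet
now[0] = 301.0
print(cache.get("banner", lambda: "v2"))  # prints "v2": the window has expired
```

The first lookup after the edit still returns the old value, which is exactly the lag editors will see. That is why the window needs to be an agreed policy rather than a default nobody chose.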

In practice, enterprise teams often combine these patterns:

- event-driven invalidation for high-value or high-visibility content

- time-based revalidation for lower-priority content

- selective path purges for page outputs

- tag-based invalidation for shared dependencies
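One way to make such a combination explicit is a small policy table keyed by dependency class. The class names, strategies, and TTL values below are hypothetical placeholders; the point is only that the mix is written down rather than implied.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FreshnessPolicy:
    strategy: str                # "event" (refresh on publish) or "ttl" (timer only)
    scope: str                   # "path" or "tag"
    ttl_seconds: Optional[int]   # safety-net expiry; None means no timer


# Hypothetical dependency classes and values, chosen only for illustration.
POLICIES = {
    "pricing": FreshnessPolicy("event", "path", 3600),
    "global_navigation": FreshnessPolicy("event", "tag", 3600),
    "campaign_banner": FreshnessPolicy("ttl", "tag", 900),
}
DEFAULT_POLICY = FreshnessPolicy("ttl", "path", 600)


def policy_for(dependency_class: str) -> FreshnessPolicy:
    """Look up the agreed policy, falling back to a conservative default."""
    return POLICIES.get(dependency_class, DEFAULT_POLICY)


print(policy_for("pricing"))
print(policy_for("recommendation_block"))
```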

How to map content relationships before defining purge scope

One of the most common mistakes is to define purge logic before defining dependency logic.

Before choosing how to invalidate, teams should map how content is reused and rendered. That means identifying not just which content types exist, but how they influence delivery.

A useful relationship model usually includes:

- direct page owners: content types that correspond to a canonical route

- shared components: banners, CTAs, navigation, footers, legal notices, promo strips

- reference-driven dependencies: taxonomies, authors, categories, labels, media metadata

- computed aggregations: listing pages, search results, related content blocks, campaign hubs

- locale and market variations: where one content event affects many regional outputs

A practical exercise is to take a handful of common publishing events and trace their downstream impact:

- updating an article body

- changing an author name

- replacing a global navigation item

- publishing a taxonomy term

- expiring a campaign banner

For each event, ask:

- Which routes can be affected?

- Which cached data objects can be affected?

- Which CDN objects might still be serving stale responses?

- Which search records or feeds should be refreshed?

- What is the acceptable freshness window for each output?

This mapping often reveals that invalidation scope should follow content dependency classes, not just content types.

For example, a product detail page update may justify a targeted path refresh, while a taxonomy rename may require broader invalidation because it affects search facets, listing pages, breadcrumbs, and metadata. Treating both as the same kind of event usually leads to either under-refresh or excessive purging.
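The tracing exercise can be sketched as a walk over a reverse-dependency graph. The node names and edges below are invented for illustration; in practice they would be derived from the content model and the frontend's data fetching.

```python
from collections import deque

# Hypothetical "is used by" edges: a change to the key affects each listed node.
USED_BY = {
    "author:7": ["article:1", "article:2"],
    "article:1": ["route:/articles/a", "listing:news"],
    "article:2": ["route:/articles/b", "listing:news"],
    "listing:news": ["route:/news"],
    "taxonomy:cms": ["article:2", "listing:news"],
}


def affected_nodes(changed: str) -> set:
    """Walk the reverse-dependency graph breadth-first from the changed node."""
    seen = set()
    pending = deque([changed])
    while pending:
        node = pending.popleft()
        for dependant in USED_BY.get(node, []):
            if dependant not in seen:
                seen.add(dependant)
                pending.append(dependant)
    return seen


def affected_routes(changed: str) -> list:
    prefix = "route:"
    return sorted(n[len(prefix):] for n in affected_nodes(changed) if n.startswith(prefix))


print(affected_routes("article:1"))  # ['/articles/a', '/news']
print(affected_routes("author:7"))   # ['/articles/a', '/articles/b', '/news']
```

The article edit and the author rename start from different nodes and fan out differently, which is the sense in which scope follows dependency class rather than content type.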

Designing webhook and event flows for reliable cache refresh

Once dependency scope is understood, the next challenge is event flow design.

At a high level, the architecture should answer four questions:

- what event indicates a publish-worthy state change

- how the event is authenticated and validated

- what downstream actions are triggered

- how retries, ordering, and visibility are handled

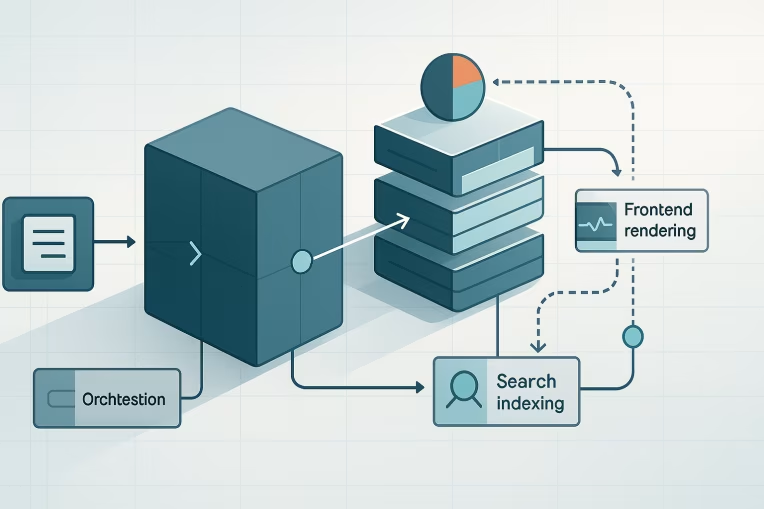

A common enterprise pattern looks like this:

- The CMS emits a publish event or webhook.

- An integration layer validates the event and normalizes payload details.

- The system resolves affected content relationships and determines purge scope.

- It triggers app-level revalidation, selective CDN purges, and search update workflows as needed.

- It records success, partial failure, and retry status for operations teams.
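The validation step in that pattern might look like the sketch below. It assumes the CMS signs the raw request body with HMAC-SHA256 and sends the hex digest alongside it; signing schemes and header names vary by vendor, so check the actual documentation before relying on this shape.

```python
import hashlib
import hmac
import json


def verify_signature(secret: bytes, body: bytes, signature_hex: str) -> bool:
    """Compare the received signature with an HMAC-SHA256 of the raw body."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking match position through timing.
    return hmac.compare_digest(expected, signature_hex)


def handle_webhook(secret: bytes, body: bytes, signature_hex: str) -> dict:
    """Reject unsigned or tampered payloads before any purge logic runs."""
    if not verify_signature(secret, body, signature_hex):
        raise PermissionError("webhook signature mismatch")
    return json.loads(body)


secret = b"shared-secret"  # assumed to be provisioned out of band
body = b'{"id": "42", "action": "published"}'
signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
print(handle_webhook(secret, body, signature))
```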

A few design principles matter here.

Prefer durable event handling over direct synchronous chains

If a CMS webhook calls the frontend directly and expects all downstream actions to complete synchronously, failures become hard to isolate. A queue or event-processing layer can improve resilience by decoupling publishing from refresh execution.

That does not mean every implementation needs a complex event bus. It does mean enterprise teams should avoid making editorial freshness depend on one brittle HTTP hop.
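The decoupling can be sketched with a queue between the webhook receiver and the refresh worker. Here an in-process `queue.Queue` stands in for a durable broker, and the retry budget of three attempts is an assumed value; a production system would persist jobs outside the process.

```python
import queue
from typing import Callable

MAX_ATTEMPTS = 3  # assumed retry budget


def accept_webhook(jobs: queue.Queue, event: dict) -> int:
    """Enqueue the event and acknowledge immediately with an HTTP-style status."""
    jobs.put({"event": event, "attempts": 0})
    return 202


def drain(jobs: queue.Queue, refresh: Callable[[dict], None]) -> list:
    """Process queued jobs; requeue failures until the retry budget is spent."""
    dead_letters = []
    while not jobs.empty():
        job = jobs.get()
        try:
            refresh(job["event"])
        except Exception:
            job["attempts"] += 1
            if job["attempts"] < MAX_ATTEMPTS:
                jobs.put(job)  # retry later without blocking the CMS
            else:
                dead_letters.append(job)  # surface for manual replay
    return dead_letters


jobs = queue.Queue()
print(accept_webhook(jobs, {"content_id": "42"}))
print(drain(jobs, lambda event: None))
```

The CMS only waits for the acknowledgement. Whether the refresh succeeds, retries, or lands in the dead-letter list is handled separately and stays visible to operations.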

Normalize event semantics

CMS payloads are not always ideal for downstream systems. Normalize around concepts that the platform actually needs, such as:

- content ID

- content type

- environment

- locale

- publication state

- event timestamp

- correlation ID

This makes routing, debugging, and auditability easier.
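A normalized event built from those fields could be sketched as follows. The incoming payload keys (`entry`, `action`, `ts`, `env`, `request_id`) describe a hypothetical vendor format, and the defaults are assumptions.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PublishEvent:
    content_id: str
    content_type: str
    environment: str
    locale: str
    publication_state: str
    event_timestamp: datetime
    correlation_id: str


def normalize(raw: dict) -> PublishEvent:
    """Map a hypothetical vendor payload onto the platform's own event shape."""
    entry = raw["entry"]
    return PublishEvent(
        content_id=str(entry["id"]),
        content_type=entry["type"],
        environment=raw.get("env", "production"),
        locale=entry.get("locale", "en"),
        publication_state=raw["action"],  # e.g. "published" or "unpublished"
        event_timestamp=datetime.fromtimestamp(raw["ts"], tz=timezone.utc),
        # Reuse the sender's ID when present so logs line up end to end.
        correlation_id=raw.get("request_id") or str(uuid.uuid4()),
    )


raw = {"action": "published", "ts": 1700000000, "request_id": "req-123",
       "entry": {"id": 42, "type": "article", "locale": "de"}}
print(normalize(raw))
```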

Separate resolution from execution

Resolving what is affected is not the same as executing invalidation. Keep those concerns distinct.

- Resolution determines impacted paths, tags, fragments, and indexes.

- Execution performs the actual revalidation or purge actions.

This separation supports testing, replay, and future changes to delivery infrastructure without rewriting the full decision model.
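A rough sketch of that split: a pure `resolve` step produces a plan, and an `execute` step hands it to whatever client performs the purge. The plan shape, the route pattern, and the `RecordingExecutor` stand-in are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class InvalidationPlan:
    paths: list = field(default_factory=list)
    tags: list = field(default_factory=list)


def resolve(content_type: str, content_id: str, slug: str) -> InvalidationPlan:
    """Pure decision step: no network calls, so it is easy to test and replay."""
    plan = InvalidationPlan(tags=[f"{content_type}:{content_id}"])
    if content_type == "article":
        plan.paths.append(f"/articles/{slug}")
    return plan


class Executor(Protocol):
    def purge_paths(self, paths: list) -> None: ...
    def purge_tags(self, tags: list) -> None: ...


class RecordingExecutor:
    """Stand-in for a real CDN or framework client; it only records calls."""

    def __init__(self) -> None:
        self.calls = []

    def purge_paths(self, paths: list) -> None:
        self.calls.append(("paths", paths))

    def purge_tags(self, tags: list) -> None:
        self.calls.append(("tags", tags))


def execute(plan: InvalidationPlan, executor: Executor) -> None:
    """Side-effect step: swap the executor to change delivery infrastructure."""
    if plan.paths:
        executor.purge_paths(plan.paths)
    if plan.tags:
        executor.purge_tags(plan.tags)


executor = RecordingExecutor()
execute(resolve("article", "1", "cache-basics"), executor)
print(executor.calls)
```

Because `resolve` has no side effects, a stored event can be replayed through it to check what would be purged before anything is actually cleared.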

Design for idempotency

Webhook delivery in enterprise platforms is frequently at-least-once, so duplicate, retried, and out-of-order events should be expected. Refresh actions should be safe to repeat. That is especially important when multiple publication operations happen within short intervals.
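One simple way to get this property is to track the last version applied per content item and ignore anything that is not newer. The sketch assumes each event carries a monotonically increasing version number, which not every CMS provides, and it keeps state in memory where a real system would use a shared store.

```python
from typing import Callable


class IdempotentRefresher:
    """Apply each content version at most once, ignoring stale deliveries."""

    def __init__(self, refresh: Callable[[str], None]) -> None:
        self._refresh = refresh
        self._last_applied = {}

    def handle(self, content_id: str, version: int) -> bool:
        """Return True if the refresh ran, False if the event was skipped."""
        if version <= self._last_applied.get(content_id, -1):
            return False  # duplicate or out-of-order delivery: safe to ignore
        self._refresh(content_id)
        self._last_applied[content_id] = version
        return True


refreshed = []
refresher = IdempotentRefresher(refreshed.append)
print(refresher.handle("article:1", 2))  # True: first delivery
print(refresher.handle("article:1", 2))  # False: duplicate webhook
print(refresher.handle("article:1", 1))  # False: older event arrived late
print(refreshed)                         # ['article:1']
```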

Failure modes: over-purging, under-purging, race conditions, preview leakage

Most cache invalidation incidents fall into a few recognizable categories.

Over-purging

This happens when one small publish action causes too much of the platform to refresh.

Typical symptoms include:

- high origin traffic after routine publishing

- degraded response times during editorial peaks

- increased infrastructure spend

- avoidable rebuild queues or cache refill storms

The root cause is often coarse dependency modeling. Teams know content relationships are complex, so they invalidate broadly to stay safe. That may protect freshness, but it weakens scalability.

Under-purging

This is the opposite problem: some affected outputs are never refreshed.

Typical symptoms include:

- stale pages despite successful publish messages

- inconsistencies across locales or channels

- pages updating while listings or metadata remain old

- editorial teams manually republishing content to force refresh

Under-purging usually comes from incomplete dependency graphs, hidden shared components, or assumptions that page-level refresh is enough.

Race conditions

Distributed publishing flows can produce sequencing problems.

Examples include:

- the CDN is purged before regenerated content is available, so the edge refills with stale output or puts temporary stress on the origin

- search updates happen before canonical pages are live

- two edits to the same content create competing refresh actions

- a related page refreshes before a shared fragment cache is invalidated

These are not always avoidable, but they can be reduced through ordered workflows, retries, short grace windows, and explicit handling of eventual consistency.
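An ordered workflow can be as simple as running the refresh steps in sequence and stopping at the first failure, so a later layer is never refreshed from a stale earlier one. The step names below are placeholders for real actions, and the ordering shown is one plausible choice rather than a rule.

```python
from typing import Callable


def run_in_order(steps: list) -> list:
    """Run (name, action) steps sequentially and stop at the first failure."""
    completed = []
    for name, action in steps:
        try:
            action()
        except Exception:
            break  # later steps must not run against a stale upstream layer
        completed.append(name)
    return completed


log = []
steps = [
    ("invalidate_app_cache", lambda: log.append("app cache cleared")),
    ("regenerate_routes", lambda: log.append("routes regenerated")),
    ("purge_cdn", lambda: log.append("cdn purged")),
    ("update_search", lambda: log.append("search updated")),
]
print(run_in_order(steps))
```

If regeneration fails, the CDN purge and search update are skipped and the returned list shows how far the run got, which gives a retry a clear place to resume.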

Preview leakage

Preview systems often use different tokens, caching rules, or unpublished content access paths. If preview and published traffic are not isolated carefully, unpublished content can be cached or exposed through shared layers.

This is why cache architecture must include environment and publication-state boundaries. An invalidation model that is correct for published content can still be unsafe for preview if cache keys are not separated properly.
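As a minimal sketch, the boundary can be made part of the cache key itself. The key layout and the rule that preview responses never enter shared edge caches are assumptions for illustration.

```python
def cache_key(environment: str, publication_state: str, locale: str, path: str) -> str:
    """Build a cache key that never collides across preview and published traffic."""
    if publication_state not in {"published", "preview"}:
        raise ValueError(f"unknown publication state: {publication_state}")
    return f"{environment}:{publication_state}:{locale}:{path}"


def is_cacheable_at_edge(publication_state: str) -> bool:
    # Assumed policy: preview responses are never stored in shared edge caches.
    return publication_state == "published"


print(cache_key("production", "published", "en", "/pricing"))
print(cache_key("production", "preview", "en", "/pricing"))
print(is_cacheable_at_edge("preview"))
```

The same path yields two distinct keys, so a draft rendered for an editor cannot overwrite or be served from the entry that public traffic uses.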

Operational controls, telemetry, and rollback patterns

A cache invalidation architecture is only as good as the operating model around it.

Teams need visibility into whether refreshes actually happened, how long they took, and where failures accumulated.

Useful operational controls typically include:

- event logs with correlation IDs from CMS to frontend to CDN actions

- dashboards for publish-to-live latency

- counts of purged paths, tags, and failed refresh operations

- dead-letter or retry queues for failed invalidation jobs

- administrative tools for targeted manual replay

- environment-level kill switches for problematic automation

Publish-to-live latency is especially important. It captures the experience that editorial teams care about most: how long it takes for a valid change to appear consistently in production.
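Computing the metric is straightforward once both timestamps are recorded against the same correlation ID. The sketch below uses a nearest-rank percentile and invented sample values; the timestamps would come from the event log and a check that the change is visible in production.

```python
import math
from datetime import datetime, timedelta


def publish_to_live_seconds(published_at: datetime, live_at: datetime) -> float:
    """Elapsed time between the publish event and the change being served."""
    return (live_at - published_at).total_seconds()


def percentile(values: list, pct: float) -> float:
    """Nearest-rank percentile; assumes a non-empty list and 0 < pct <= 100."""
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


published = datetime(2024, 1, 1, 12, 0, 0)
# Invented sample latencies in seconds, for illustration only.
samples = [
    publish_to_live_seconds(published, published + timedelta(seconds=s))
    for s in (12, 20, 35, 41, 300)
]
print(percentile(samples, 50), percentile(samples, 95))  # 35.0 300.0
```

Reporting a high percentile alongside the median matters here because one slow propagation, like the 300-second sample, is what an editor remembers.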

Rollback also deserves attention. Invalidation systems are usually designed around publishing forward, but enterprise teams also need controlled recovery when a bad release or content error occurs.

Useful rollback patterns can include:

- restoring previous content versions in the CMS

- replaying known-good generation jobs

- rehydrating a controlled cache state rather than clearing everything blindly

- temporarily widening TTLs or reducing purge scope during incidents

The goal is not just to refresh quickly. It is to recover predictably when something goes wrong.

A decision framework for choosing the right invalidation model

There is no universal best invalidation strategy across all enterprise headless platforms. The right model depends on content shape, delivery architecture, editorial expectations, and operational maturity.

A practical decision framework is to evaluate your platform across five dimensions.

1. Freshness expectation

Ask which content truly needs near-immediate propagation.

- breaking news, compliance updates, and pricing changes may need aggressive event-driven refresh

- lower-priority brand or campaign content may tolerate scheduled revalidation

If everything is treated as urgent, the system becomes expensive. If nothing is treated as urgent, trust erodes.

2. Dependency complexity

Ask how broadly content is reused.

- simple page ownership may support path-based invalidation

- shared modular content often benefits from tag- or dependency-based strategies

- highly aggregated experiences may require a mixed model

The more reuse exists, the less effective page-only invalidation becomes.

3. Runtime and rendering model

Ask where the actual cacheable artifacts live.

- mostly static sites may rely more on regeneration workflows

- hybrid rendering platforms may need route and data cache controls together

- edge-heavy architectures may push more invalidation logic closer to CDN behavior

Your invalidation design should mirror the rendering architecture rather than sit beside it as an afterthought. In practice, this is where Next.js development decisions around incremental static regeneration (ISR), server-side rendering (SSR), and revalidation mechanics become tightly coupled to publishing behavior.

4. Operational maturity

Ask what the team can reliably operate.

A sophisticated event-driven graph is not automatically better if the organization cannot observe, debug, and maintain it. Sometimes a slightly less optimized but more transparent model is the better enterprise choice.

5. Cost sensitivity

Ask where inefficiency hurts most.

- frequent full rebuilds can increase compute and delivery cost

- broad purges can create origin spikes

- overly granular invalidation can increase system complexity and support burden

The best architecture is usually the one that keeps freshness within business expectations while minimizing unnecessary refresh work.

A sensible progression for many enterprise teams is:

- Start by documenting dependency classes and freshness requirements.

- Move away from blanket full rebuilds where publishing latency is unacceptable.

- Introduce selective path refresh for directly owned routes.

- Add tag- or dependency-based invalidation where shared content makes path-only logic unreliable.

- Instrument publish-to-live telemetry before expanding complexity further.

That progression keeps the architecture grounded in operating reality.

Final perspective

Cache invalidation in headless systems should be treated as part of content delivery design, not as a post-launch optimization task.

When enterprise teams make it a first-class architectural concern, several things improve at once: editorial trust increases, publishing latency becomes more predictable, infrastructure waste decreases, and incident recovery gets easier.

The key is not to chase perfect instant freshness across every layer. The key is to define clear freshness policies, map content relationships carefully, and build event flows that refresh the right things with the right scope.

For most enterprise platforms, that leads to a hybrid model rather than a single technique. Some content deserves targeted immediate refresh. Some can rely on timed revalidation. Some dependencies need path awareness; others need tags or relationship-driven invalidation.

The teams that handle this well are usually the ones that stop asking, "How do we clear cache?" and start asking, "How should published content propagate through our platform?" That framing produces better architecture, better operations, and a much more trustworthy publishing experience.
